Chapter 12: AI Scientist in Practice — AI/Robotics, Bio/Chem/Drug, Autoresearch

12.1 Two Existence Proofs, One Week Apart

In April 2026, two papers appeared within a week of each other.

Anthropic's AAR (Autonomous Alignment Research) [Anthropic, 2026]: nine Claude Opus 4.6 instances ran alignment research autonomously for 5 days. They reached a PGR (Progress Rate) of 0.97 against a human baseline of 0.23, at a cost of approximately $22/hour. The nine agents worked independently, integrated their results, and generated new hypotheses.

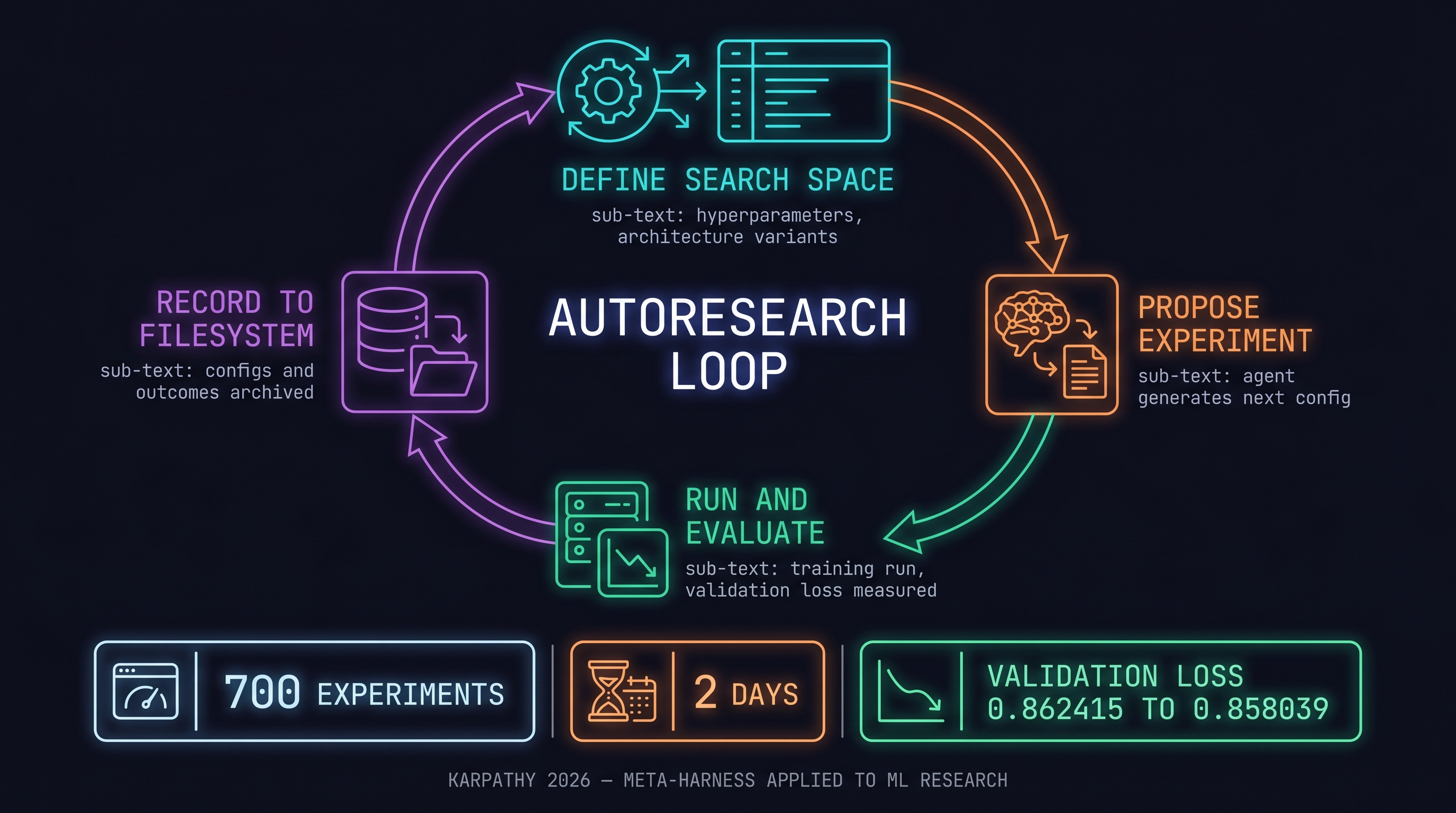

Karpathy's autoresearch [Karpathy, 2026]: 700 experiments optimizing GPT-2 training in 2 days. Validation loss fell from 0.862415 to 0.858039, a relative reduction of about 0.5%. Agents completed in two days an exploration that would take humans months.

Within one week, autonomous agents produced production-grade results in both alignment research (Anthropic) and ML research (Karpathy). This is evidence that the AI Scientist is not "future talk" but "happening now."

12.2 Three Stages of Research Democratization

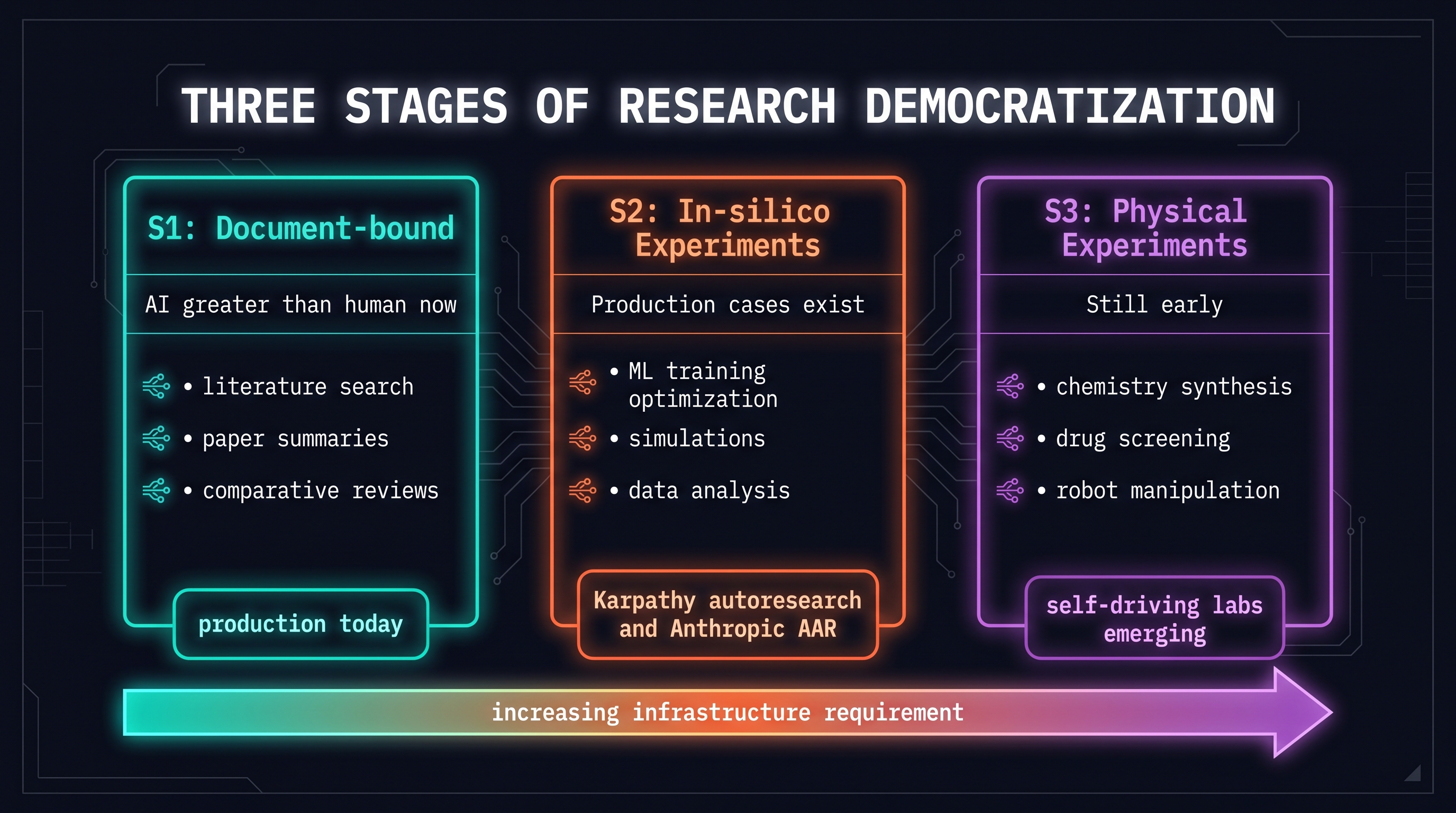

The post [Um, 2026] classifies AI-driven research into three stages:

S1: Document-bound Research

AI already outperforms humans here: literature search, paper summarization, comparative analysis, review writing. Doable right now with Claude Code + LLM Wiki.

S2: In-silico Experiments

Experiments that complete inside a computer. ML training, simulation, data analysis. Karpathy's autoresearch and Anthropic's AAR are production examples of this stage.

S3: Physical Experiments

Requires real labs, real chemicals, real robots. Still early, but the direction is clear.

12.3 ML / Alignment Research — Autonomous Agents

Karpathy's Autoresearch

The design of autoresearch [Karpathy, 2026]:

- Define the search space: GPT-2 training hyperparameter combinations (learning rate, batch size, architecture variants)

- Agent loop: propose → run → evaluate → propose next

- Record results: store all experiment configs and outcomes in the filesystem

- Meta-harness pattern: Chapter 9's meta-harness is exactly this loop
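
The four steps above can be sketched as a small loop. This is a minimal illustration under stated assumptions, not Karpathy's actual code: `run_experiment` is a hypothetical stand-in that returns a synthetic validation loss instead of training GPT-2, and `propose` is a simple perturb-the-best heuristic rather than an LLM agent. The part that mirrors the text is that every config and outcome is written to the filesystem and the next proposal is made from what is read back.

```python
import json
import random
from pathlib import Path


def run_experiment(config):
    """Hypothetical stand-in for a training run; returns a synthetic loss.

    The synthetic objective has its minimum at lr=3e-4, batch_size=64.
    """
    lr_term = (config["lr"] / 3e-4 - 1.0) ** 2
    bs_term = (config["batch_size"] / 64 - 1.0) ** 2
    return 0.85 + 0.01 * lr_term + 0.01 * bs_term


def load_history(log_dir):
    """Read every recorded experiment back from disk."""
    return [json.loads(p.read_text()) for p in sorted(log_dir.glob("exp_*.json"))]


def propose(history, rng):
    """Fixed starting config first, then perturb the best config seen so far."""
    if not history:
        return {"lr": 1e-3, "batch_size": 32}
    best = min(history, key=lambda r: r["val_loss"])["config"]
    return {
        "lr": best["lr"] * rng.choice([0.5, 0.8, 1.0, 1.25, 2.0]),
        "batch_size": max(8, int(best["batch_size"] * rng.choice([0.5, 1, 2]))),
    }


def research_loop(log_dir, n_experiments=30, seed=0):
    """propose -> run -> evaluate -> record, with the filesystem as memory."""
    rng = random.Random(seed)
    log_dir = Path(log_dir)
    log_dir.mkdir(parents=True, exist_ok=True)
    for _ in range(n_experiments):
        history = load_history(log_dir)      # state lives on disk, not in RAM
        config = propose(history, rng)       # propose
        val_loss = run_experiment(config)    # run + evaluate
        record = {"id": len(history), "config": config, "val_loss": val_loss}
        (log_dir / f"exp_{record['id']:04d}.json").write_text(json.dumps(record))
    return min(load_history(log_dir), key=lambda r: r["val_loss"])
```

Because the history is reloaded from disk on every iteration, calling `research_loop` again on the same directory resumes from the existing records instead of starting over.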

[Karpathy, 2026] trained a small GPT-2-class model (nanochat) the same way in one day, which demonstrates the pattern's scalability.

Anthropic's AAR

Key finding from [Anthropic, 2026]: nine agents working independently were more effective than a single long-running agent. "Diversity prevented premature convergence." A PGR of 0.97 is more than 4x the 0.23 achieved by human experts on the same task.
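
A toy model can make the "premature convergence" point concrete. The sketch below is an illustration under loud assumptions, not Anthropic's setup: the search space is a hypothetical one-dimensional landscape with nine basins (`OFFSETS` holds made-up basin depths), and each "agent" is a greedy local search. One agent with the full budget settles in the first basin it finds; nine agents that split the same budget and start in different places cover every basin, and merging their results picks the best one.

```python
# Hypothetical landscape: nine basins of width 10, each with its own depth.
OFFSETS = [0.30, 0.25, 0.40, 0.10, 0.35, 0.20, 0.00, 0.45, 0.15]
SIZE = 10 * len(OFFSETS)


def loss(x):
    """Loss at integer position x; each basin bottoms out at position 5."""
    basin, pos = divmod(x, 10)
    return OFFSETS[basin] + 0.01 * (pos - 5) ** 2


def greedy_agent(start, budget):
    """Move to the better neighbor until no neighbor improves or budget ends."""
    x = start
    for _ in range(budget):
        neighbors = [n for n in (x - 1, x + 1) if 0 <= n < SIZE]
        best = min(neighbors, key=loss)
        if loss(best) >= loss(x):
            break  # converged to a local minimum
        x = best
    return x, loss(x)


def run_single(total_budget):
    """One long-running agent that spends the whole budget from one start."""
    return greedy_agent(2, total_budget)


def run_team(total_budget, n_agents=9):
    """Independent agents with different starts; results merged at the end."""
    per_agent = total_budget // n_agents
    results = [greedy_agent(2 + 10 * i, per_agent) for i in range(n_agents)]
    return min(results, key=lambda r: r[1])
```

With a total budget of 180 steps, `run_single(180)` stops at position 5 with loss 0.30, while `run_team(180)` returns position 65 with loss 0.00. Extra budget does not help the single agent once it has converged.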

Common implication of both cases: agent loop + filesystem-based experience accumulation + meta-harness pattern is the core structure of research automation.

12.4 Medical AI — Clinical Task Benchmarks

Med-AI Bench from Wu et al. 2026 (arXiv 2603.28589), as summarized in [Um, 2026]:

- 19 clinical tasks

- 171 cases

- Scope: diagnosis, treatment planning, medical report generation, clinical reasoning

Results: AI exceeded human baselines on multiple clinical tasks. The gap was largest in documentation-heavy tasks (report generation, coding).

Note: This benchmark demonstrates AI's potential in clinical settings, but actual clinical deployment requires separate regulatory, ethical, and validation processes. AI Scientist can support clinical research; that is a different question from replacing clinical judgment.

12.5 AI Co-Scientist — Google's Multi-Agent Research System

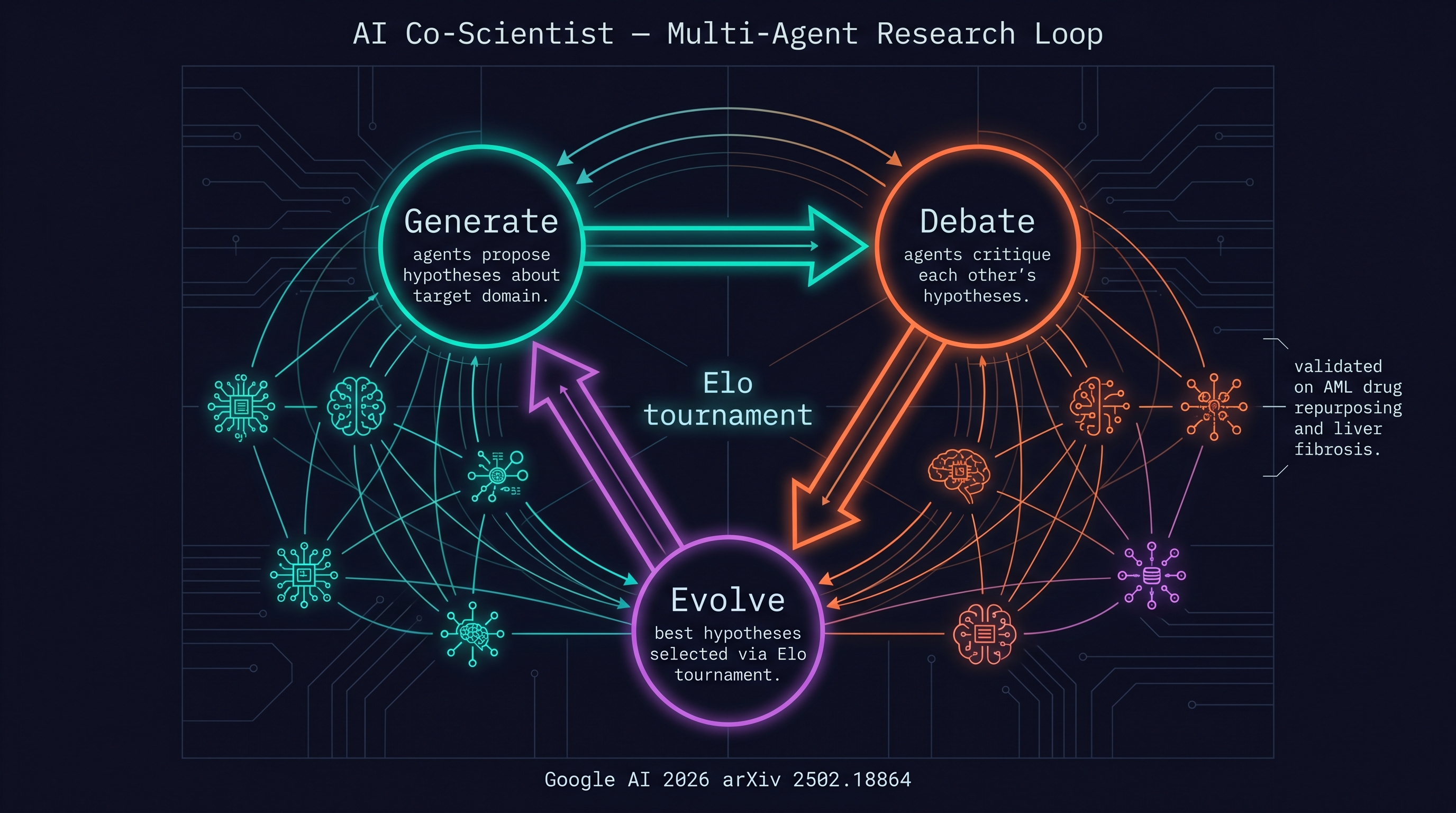

Google's AI Co-scientist (arXiv 2502.18864), as summarized in [Um, 2026]:

- Architecture: Multi-agent system on Gemini 2.0

- Loop: Generate → Debate → Evolve + Elo tournament

- Validated domains: AML (Acute Myeloid Leukemia) drug repurposing, liver fibrosis

Key innovation: agents debate and critique each other's hypotheses. An Elo tournament automatically selects the strongest hypotheses. New hypotheses are generated and validated without human expert feedback.
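
The tournament step can be illustrated with the standard Elo update rule. This is a minimal sketch, not Google's implementation: the `judge` callable is a hypothetical placeholder for the debate between agents, and the initial rating of 1200 and K-factor of 32 are assumed values. The demo judge at the bottom simply compares made-up quality scores so that the example runs without a model.

```python
import itertools


def expected_score(r_a, r_b):
    """Standard Elo expectation that A beats B."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400))


def update_elo(r_a, r_b, score_a, k=32):
    """Return new ratings after one match; score_a is 1.0, 0.5, or 0.0."""
    e_a = expected_score(r_a, r_b)
    delta = k * (score_a - e_a)
    return r_a + delta, r_b - delta


def run_tournament(hypotheses, judge, rounds=3, initial=1200.0):
    """Round-robin tournament; returns hypotheses sorted best first."""
    ratings = {h: initial for h in hypotheses}
    for _ in range(rounds):
        for a, b in itertools.combinations(hypotheses, 2):
            score_a = judge(a, b)  # in a real system: outcome of a debate
            ratings[a], ratings[b] = update_elo(ratings[a], ratings[b], score_a)
    return sorted(ratings, key=ratings.get, reverse=True)


# Hypothetical quality scores standing in for a debate-based judge.
QUALITY = {"H1": 0.9, "H2": 0.6, "H3": 0.4, "H4": 0.2}


def demo_judge(a, b):
    """A wins (1.0) if its assumed quality is higher, otherwise B wins (0.0)."""
    return 1.0 if QUALITY[a] > QUALITY[b] else 0.0


ranking = run_tournament(["H1", "H2", "H3", "H4"], demo_judge)
```

The top-ranked hypotheses would then feed the Evolve step, where they are refined and re-entered into the next tournament. Rating updates are zero-sum, so the ranking reflects relative strength within the current pool only.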

This is Chapter 9's dependency graph + multi-agent verification + meta-harness applied to the research domain.

12.6 Self-Driving Labs — Chemistry / Biology

From [Um, 2026] (summarized from Rachel Brazil's Nature feature):

- Labs that autonomously perform chemical synthesis and screening

- Humans design experiments; robots execute; AI analyzes results and proposes next experiments

- Current applications: materials discovery, drug candidate exploration

This is the S3 stage of research democratization. S1 (document) and S2 (in-silico) are possible right now; S3 requires additional physical infrastructure and safety validation.

12.7 Scope Honesty — The Robotics Gap

This chapter's title includes "AI/Robotics," but the current corpus doesn't have sufficient AI Scientist examples specific to robotics. To be clear:

- Covered: ML/alignment research (autoresearch, AAR), medical AI (Med-AI Bench), chemistry/biology (self-driving labs), AI Co-scientist (Google)

- Not covered: Robotics-specific AI Scientist — this domain is in preparation (Part V: Robotics is forthcoming)

The AI Scientist in robotics involves a much more complex physical experimentation loop. Simulation enables S2, but real hardware experiments require separate safety validation frameworks. That is as far as this book can honestly go for now.

12.8 Closing the Loop

Chapter 1 started with "knowledge externalization." The real cost of Claude→Codex migration isn't code changes — it's extracting knowledge locked inside model conversations into plain-text files like AGENTS.md, HANDOFF.md, TASKS.md.

Chapter 10's LLM Wiki extended that externalization to research knowledge. Chapter 11 turned daily activity into AI external memory. And this chapter shows the point where that external memory becomes the research loop itself.

Karpathy's trajectory shows this most clearly:

- LLM Wiki (Chapter 10): "Put everything in markdown"

- Autoresearch (Chapter 12): "Agents run experiments"

- Same author, same scaffolding, one year apart

From external memory to autonomous research — that is the final destination of harness engineering.

References

- Anthropic, "Autonomous Alignment Research (AAR)," 2026-04-14. [Anthropic, 2026]

- Karpathy, Andrej, "Autoresearch," 2026. [Karpathy, 2026]

- Karpathy, Andrej, "Autoresearch — Round 1 tweet," 2026. [Karpathy, 2026]

- Karpathy, Andrej, "NanoChat," 2026. [Karpathy, 2026]

- terryum, "AAR post," terryum-ai, 2026. [Um, 2026]

- terryum, "Autoresearch post," terryum-ai, 2026. [Um, 2026]

- terryum, "AI Co-scientist post," terryum-ai, 2026. [Um, 2026]

- Wu et al., "Med-AI Bench," arXiv 2603.28589, 2026. [Um, 2026]

- terryum, "Self-driving labs post," terryum-ai, 2026. [Um, 2026]

- terryum, "Research democratization post," terryum-ai, 2026. [Um, 2026]

- Google AI, "AI Co-scientist," arXiv 2502.18864, 2025. (primary source for [Um, 2026])