Chapter 11: My Activity as AI's External Memory — Bidirectional Flow with Obsidian × LLM

11.1 What Does Research Mean in the AI Age?

Post #7 "Brain Augmentation" [Um, 2026] opens with: "In the age of AI, what matters in research is not 'knowing more myself' but 'building an environment where an AI scientist can sustain self-improving knowledge creation.'"

That's the starting point for this chapter. An external memory system isn't a productivity tool; it is an environment for doing research with AI.

This chapter covers what that environment actually looks like.

11.2 Vault Structure — In Practice

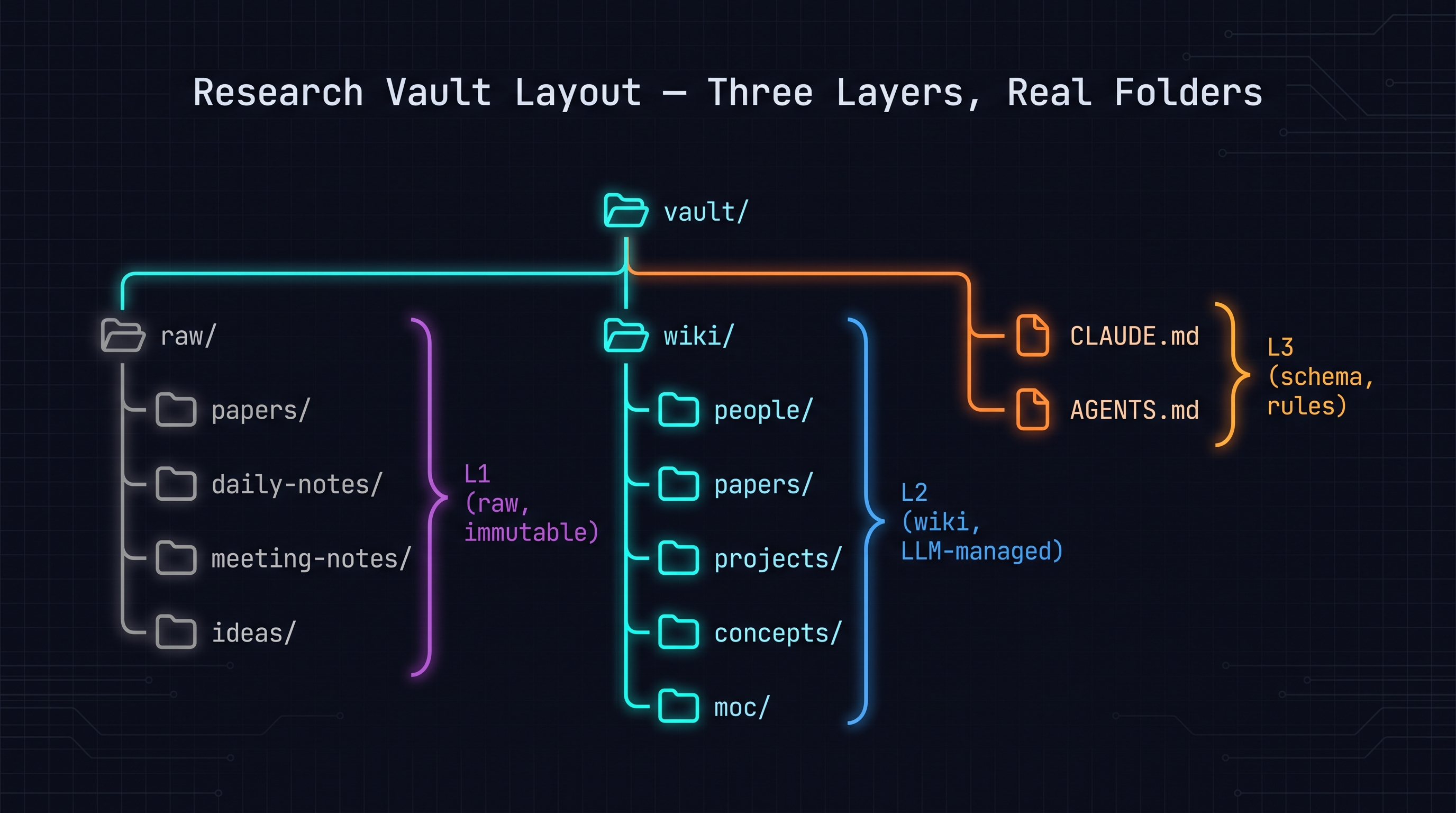

Turn Chapter 10's three layers into a real folder structure:

vault/

├── raw/ # L1: original sources

│ ├── papers/ # paper PDFs, arXiv links

│ ├── daily-notes/ # daily notes

│ ├── meeting-notes/ # meeting records

│ └── ideas/ # idea clippings

│

├── wiki/ # L2: LLM-managed knowledge base

│ ├── people/ # researchers, colleagues

│ ├── papers/ # paper summaries + annotations

│ ├── projects/ # project status pages

│ ├── concepts/ # technical concepts

│ └── moc/ # Maps of Contents

│

├── CLAUDE.md # L3: vault structure (for Claude)

└── AGENTS.md # L3: vault structure (for Codex)
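If you'd rather not create these folders by hand, the layout can be scaffolded with a few lines of Python. This is a minimal sketch, not part of any Obsidian tooling: the function name `scaffold_vault` and the choice to create empty CLAUDE.md and AGENTS.md files are assumptions made for illustration.

```python
from pathlib import Path

# Folder layout copied from the tree above.
VAULT_DIRS = [
    "raw/papers",
    "raw/daily-notes",
    "raw/meeting-notes",
    "raw/ideas",
    "wiki/people",
    "wiki/papers",
    "wiki/projects",
    "wiki/concepts",
    "wiki/moc",
]
# L3 instruction files; created empty here (an assumption), to be filled in by hand.
INSTRUCTION_FILES = ["CLAUDE.md", "AGENTS.md"]


def scaffold_vault(root: Path) -> list[Path]:
    """Create the three-layer layout under `root`; return only the paths newly created."""
    created = []
    for rel in VAULT_DIRS:
        folder = root / rel
        if not folder.exists():
            folder.mkdir(parents=True)
            created.append(folder)
    for name in INSTRUCTION_FILES:
        file = root / name
        if not file.exists():
            file.write_text("", encoding="utf-8")
            created.append(file)
    return created
```

Calling `scaffold_vault(Path("vault"))` a second time returns an empty list and leaves existing notes untouched, so it is safe to rerun on a vault that is already in use.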

CLAUDE.md example (L3):

# My Research Vault

This vault contains my research knowledge organized in three layers.

## Structure

- raw/papers/: Paper PDFs and arXiv links. READ ONLY.

- raw/daily-notes/: Daily notes. READ ONLY.

- wiki/papers/: Paper summaries with my annotations. YOU CAN EDIT.

- wiki/concepts/: Technical concept pages. YOU CAN EDIT.

- wiki/moc/: Map of Contents pages. YOU CAN EDIT.

## Operations

When I say "ingest [paper]": read raw/papers/[paper], create/update wiki/papers/[paper].md

When I say "what do I know about [X]": search wiki/ for relevant pages and summarize.

When I ask a research question: search wiki/ AND raw/, synthesize an answer.
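The two rules that matter most in this CLAUDE.md (raw/ is read-only, and "ingest" maps a raw paper to a wiki page) can also be written down as code, which is handy if you script around the LLM. The sketch below is hypothetical: `is_editable` and `ingest_target` are illustrative names, and treating everything outside wiki/ as read-only is an assumption that goes slightly beyond what the example file states.

```python
from pathlib import PurePosixPath

# Assumption: only the wiki/ layer (L2) may be modified by the LLM.
EDITABLE_ROOTS = ("wiki",)


def is_editable(rel_path: str) -> bool:
    """True if a vault-relative path falls under an editable layer."""
    parts = PurePosixPath(rel_path).parts
    return bool(parts) and parts[0] in EDITABLE_ROOTS


def ingest_target(source: str) -> str:
    """Map raw/papers/<name>.<ext> to wiki/papers/<name>.md."""
    src = PurePosixPath(source)
    if len(src.parts) < 3 or src.parts[:2] != ("raw", "papers"):
        raise ValueError(f"not a raw paper: {source}")
    return str(PurePosixPath("wiki", "papers", src.stem + ".md"))
```

For example, `ingest_target("raw/papers/gelsight.pdf")` gives `wiki/papers/gelsight.md`, and `is_editable` rejects any path under raw/.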

11.3 Backlinks and Tags — The LLM's Index

Obsidian's links ([[link]], which Obsidian also surfaces as backlinks on the target page) and tags (#tag) express relationships between markdown files. For the LLM, they serve as its index [Okhlopkov, 2026].

Backlink usage:

# Soft Robotic Hand Papers

Related: [[Gelsight Sensor]] [[Tactile Sensing]] [[Dexterous Manipulation]]

## Key Papers

- [[Tactile Sensing Survey]] — Tactile Sensing Overview...

- [[Dexterous Manipulation Paper]] — Dexterous Grasping with Force Feedback...

When the LLM is asked "list papers related to soft robotic hands," it follows the links on [[Soft Robotic Hand Papers]] to find related pages.
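To see why links work as an index, it helps to build one. The sketch below extracts [[...]] targets from page text and inverts them into a backlink map. It is a simplified illustration, not Obsidian's own parser: the regular expression and the function names are assumptions, and it does not distinguish embeds (![[...]]) from ordinary links.

```python
import re
from collections import defaultdict

# Matches [[Target]], [[Target|alias]] and [[Target#Heading]]; captures Target only.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def outgoing_links(text: str) -> list[str]:
    """Return link targets in order of first appearance, without duplicates."""
    seen = []
    for match in WIKILINK.finditer(text):
        target = match.group(1).strip()
        if target not in seen:
            seen.append(target)
    return seen


def backlink_index(pages: dict[str, str]) -> dict[str, set[str]]:
    """Map each link target to the set of page titles that link to it."""
    index = defaultdict(set)
    for title, text in pages.items():
        for target in outgoing_links(text):
            index[target].add(title)
    return dict(index)
```

Here `pages` is a plain dictionary of page title to markdown text. Looking up "Tactile Sensing" in the resulting index returns every page that mentions it, which is the same information Obsidian shows in its backlinks pane.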

Tag usage: build a consistent tag system so the LLM can use tags as filter criteria. Examples: #reviewed, #keystone, #todo-summarize.
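Tag filtering is easy to sketch as well. The example below finds inline tags in page text and returns the pages that carry every requested tag. It is a rough approximation under stated assumptions: the pattern only covers ASCII inline tags, tags declared in YAML frontmatter are ignored, and `tags_in` and `pages_with_tags` are hypothetical names.

```python
import re

# '#' at the start of a word, followed by letters, digits, '_', '-' or '/'.
# A markdown heading ("# Title") does not match because a space follows the '#'.
TAG = re.compile(r"(?<!\S)#([A-Za-z0-9_/-]+)")


def tags_in(text: str) -> set[str]:
    """Inline tags found in the text, without the leading '#'."""
    # Purely numeric matches such as "#1" are skipped: they are not treated as tags.
    return {t for t in TAG.findall(text) if not t.isdigit()}


def pages_with_tags(pages: dict[str, str], required: set[str]) -> list[str]:
    """Titles of pages that carry every tag in `required`, sorted by title."""
    return sorted(
        title for title, text in pages.items() if required <= tags_in(text)
    )
```

With this in place, a request like "show me keystone papers I have already reviewed" reduces to `pages_with_tags(pages, {"reviewed", "keystone"})`.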

11.4 How to Ask the LLM

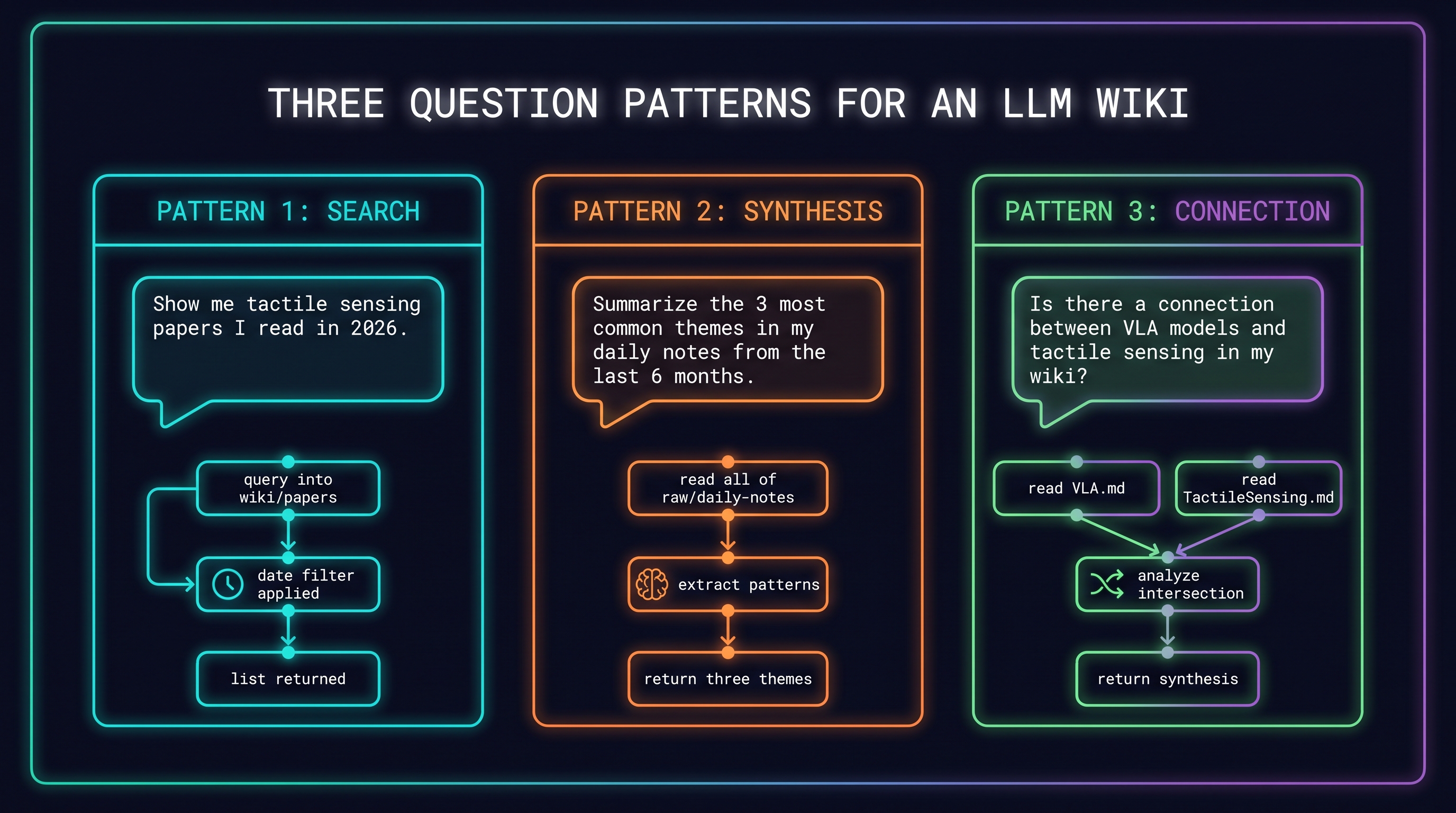

Three effective question patterns [Karpathy, 2026]:

Pattern 1: Search

"Show me the list of tactile sensing papers I read in 2026"

→ Search wiki/papers/, apply date filter

Pattern 2: Synthesis

"Summarize the 3 most common themes in my daily notes from the last 6 months"

→ Read all of raw/daily-notes/, extract patterns
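Reading "all of raw/daily-notes/" gets expensive as the folder grows, so it can help to narrow the file list to the requested window before handing anything to the LLM. The sketch below assumes daily notes are named by date in YYYY-MM-DD.md form; that naming, and the helper names `months_ago` and `recent_daily_notes`, are assumptions rather than something the vault layout guarantees.

```python
import calendar
from datetime import date
from pathlib import Path


def months_ago(today: date, months: int) -> date:
    """Same day-of-month `months` earlier, clamped to the last day of that month."""
    total = today.year * 12 + (today.month - 1) - months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    day = min(today.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def recent_daily_notes(folder: Path, today: date, months: int = 6) -> list[Path]:
    """Daily notes dated within the last `months` months, oldest first."""
    cutoff = months_ago(today, months)
    selected = []
    for path in sorted(folder.glob("*.md")):
        try:
            day = date.fromisoformat(path.stem)
        except ValueError:
            continue  # skip files that are not named like a date
        if cutoff <= day <= today:
            selected.append(path)
    return selected
```

Passing `today` explicitly, instead of calling `date.today()` inside the function, keeps the result reproducible when you rerun the same question later.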

Pattern 3: Connection

"Is there a connection between VLA models and tactile sensing in my wiki?"

→ Read wiki/concepts/VLA.md + wiki/concepts/TactileSensing.md, analyze intersections
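For the connection pattern, a cheap first pass is to compare what the two concept pages link to before asking the LLM to interpret anything. The following is a minimal sketch under stated assumptions: it only looks at [[...]] link targets, the function names are hypothetical, and a shared link is a hint that a connection may exist, not evidence that it is meaningful.

```python
import re

# Captures the target of [[Target]], [[Target|alias]] or [[Target#Heading]].
LINK = re.compile(r"\[\[([^\]|#]+)")


def link_targets(text: str) -> set[str]:
    """Set of page titles that the text links to."""
    return {target.strip() for target in LINK.findall(text)}


def connection_report(title_a: str, text_a: str, title_b: str, text_b: str) -> dict:
    """Direct links between two pages plus the pages they both link to."""
    links_a, links_b = link_targets(text_a), link_targets(text_b)
    return {
        "a_links_to_b": title_b in links_a,
        "b_links_to_a": title_a in links_b,
        "shared": sorted((links_a & links_b) - {title_a, title_b}),
    }
```

The report gives the LLM a concrete starting point: the pages listed under "shared" are the ones worth reading next when you ask it to analyze the intersection.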

11.5 The Counter-Argument: You Still Have to Write Yourself

Um's post "The value of writing yourself" [Um, 2026] offers a counterbalance: "If you delegate everything to AI, your thinking doesn't get externalized — it evaporates. Writing by hand is a verification of understanding. It's not the same as reading an LLM summary."

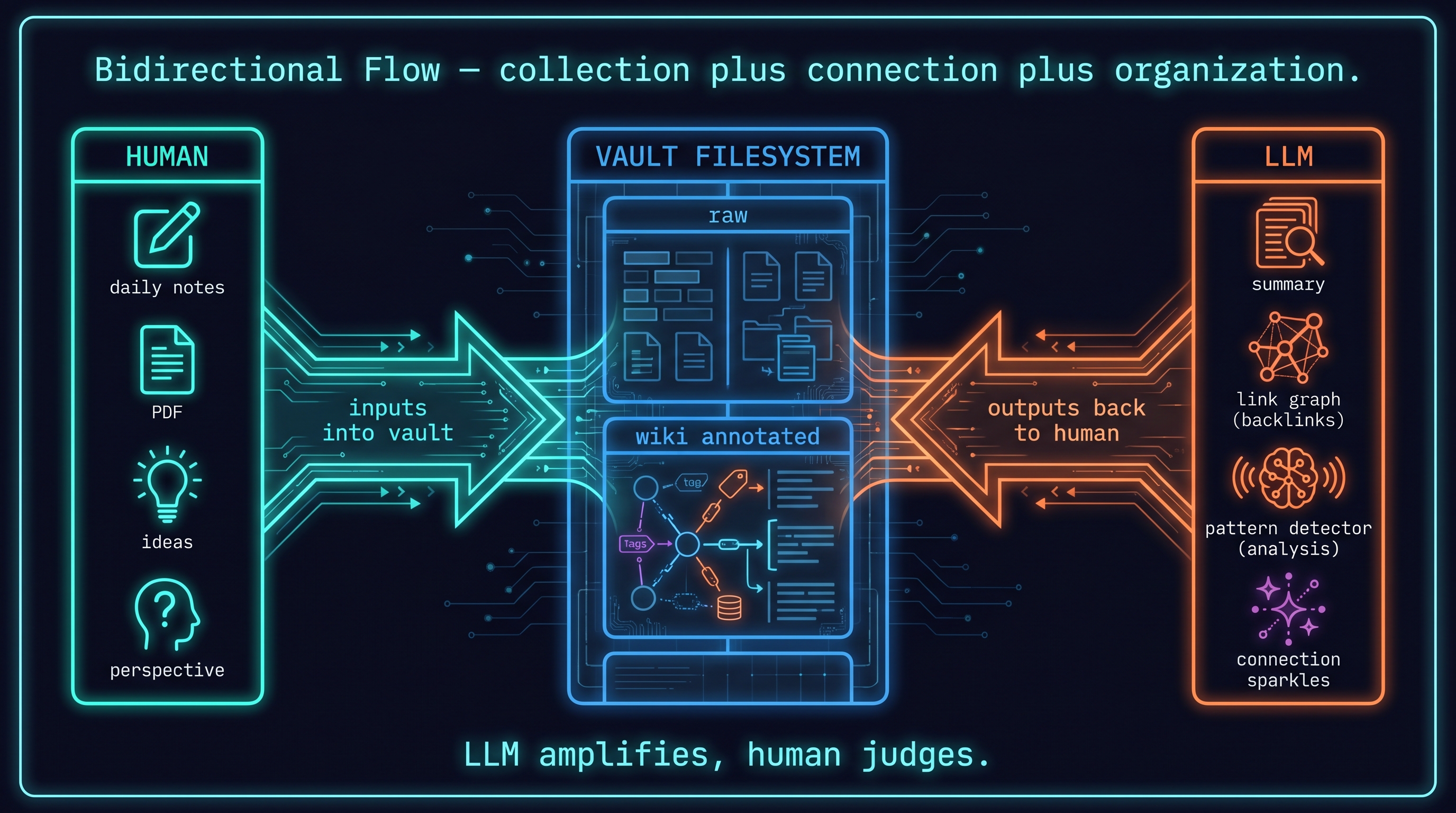

This is a valid point. The goal of an external memory system is not to replace your thinking but to amplify it. One way to strike the balance:

- LLM handles collection, connection, organization

- Human provides judgment, interpretation, new perspective

- Maintain the habit of writing your own thoughts in daily notes

From [Maker, 2026]'s 4-month usage report: "The LLM finds connections. Whether those connections are meaningful is still up to me."

11.6 Bidirectional Flow

The key to an external memory system is bidirectional flow:

Human → LLM (input):

- Write daily notes (raw/daily-notes/)

- Add paper PDFs (raw/papers/)

- Clip ideas (raw/ideas/)

- When asking, state your perspective explicitly ("My research direction is X; in that context, I want to know about Y")

LLM → Human (output):

- Summaries and synthesis (wiki/papers/ updates)

- Discovering related papers (backlink traversal)

- Identifying patterns (daily notes analysis)

- Proposing new connections

The framing from "New Leverage" [Um, 2026]: "AI is leverage. Leverage amplifies your capacity — but without a foundation, there is nothing to amplify."

Post #7's conclusion [Um, 2026]: "This is not just a homepage. It is a space where AI and I think together, and farther ahead, a research environment for an AI scientist that may itself generate the next layer of knowledge."

References

- terryum, "Brain Augmentation," post #7, 2026-03-10. [Um, 2026]

- terryum, "New Leverage," terryum-ai, 2026. [Um, 2026]

- terryum, "The value of writing yourself," terryum-ai, 2026. [Um, 2026]

- Karpathy, Andrej, "LLM Wiki," gist, 2026. [Karpathy, 2026]

- Aimaker, "AI-powered second brain — 4-month report," 2026. [Maker, 2026]

- Starmorph, "LLM Wiki guide," 2026. [Starmorph, 2026]

- Okhlopkov, "Claude Code 4-month setup retrospective," 2026. [Okhlopkov, 2026]

- Bsukistory, "AI automation perspective (Korean)," 2026. [Brunch, 2026]

- Anthropic, "Claude memory documentation," 2026. [Anthropic, 2026]