Chapter 10: Rise of the LLM Wiki — Karpathy's Obsidian + Claude Code Workflow

10.1 A Gist with 16 Million Views

In early 2026, Andrej Karpathy published a gist titled "LLM Wiki" [Karpathy, 2026]. The content was simple: organize an Obsidian vault into three layers, use Claude Code to index the knowledge, and ask it questions. The tweet introducing it reached more than 16 million views [Karpathy, 2026].

Why such explosive reach? The pattern was simple and powerful: "Put everything in markdown, point the LLM at it, ask questions." That's it. But this simple pattern converts a one-shot chat tool into a compounding personal knowledge engine [Herk, 2026]. What the LLM knows today is a starting point; tomorrow it knows more; next month it knows the connections.

10.2 The Three Layers

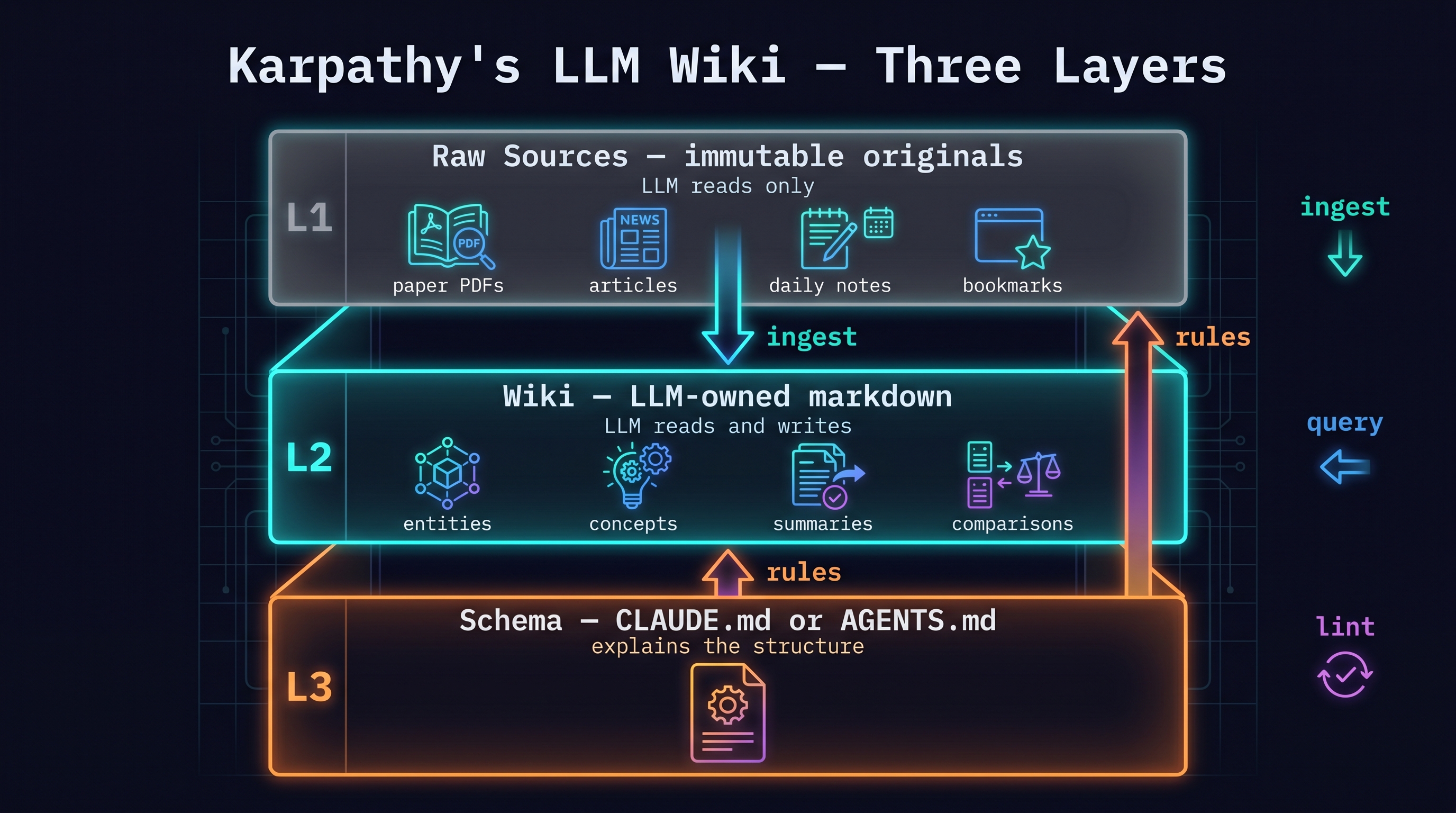

Karpathy's LLM Wiki uses three layers [Karpathy, 2026]:

L1: Raw Sources — immutable originals

    vault/
    └── raw/
        ├── papers/      # PDFs, arXiv, papers
        ├── articles/    # blogs, news
        ├── notes/       # meeting notes, ideas
        └── bookmarks/   # bookmarks, clippings

The LLM only reads this layer; it never writes to it, so the originals stay immutable.

L2: Wiki — LLM-owned markdown

    vault/
    └── wiki/
        ├── entities/     # people, projects, companies
        ├── concepts/     # technical concepts, terms
        ├── summaries/    # paper and article summaries
        └── comparisons/  # comparison and contrast pages

The LLM reads L1 and writes and updates this layer: entity pages, concept pages, summaries, comparison pages, and the cross-references between them.

L3: Schema — CLAUDE.md or AGENTS.md

This is the file that explains the vault structure to the LLM, with rules such as "Entity pages live in wiki/entities/" and "Concept pages live in wiki/concepts/".
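The three layers can be scaffolded with a few lines of Python. This is a minimal sketch, not part of Karpathy's gist: the directory names come from the layout above, while the function name `scaffold_vault` and the wording of the schema file are illustrative assumptions.

```python
from pathlib import Path

# Directory names follow the chapter's layout; everything else is illustrative.
RAW_DIRS = ["papers", "articles", "notes", "bookmarks"]
WIKI_DIRS = ["entities", "concepts", "summaries", "comparisons"]

# Hypothetical schema wording; adapt it to your own vault.
SCHEMA_TEXT = """\
# Vault schema

- raw/ holds immutable originals. Read it, never write to it.
- Entity pages live in wiki/entities/.
- Concept pages live in wiki/concepts/.
- Summaries live in wiki/summaries/.
- Comparison pages live in wiki/comparisons/.
"""


def scaffold_vault(root: Path, schema_name: str = "CLAUDE.md") -> Path:
    """Create the L1 and L2 directories and write the L3 schema file."""
    for name in RAW_DIRS:
        (root / "raw" / name).mkdir(parents=True, exist_ok=True)
    for name in WIKI_DIRS:
        (root / "wiki" / name).mkdir(parents=True, exist_ok=True)
    schema_path = root / schema_name
    if not schema_path.exists():  # do not overwrite a hand-edited schema
        schema_path.write_text(SCHEMA_TEXT, encoding="utf-8")
    return schema_path
```

Calling `scaffold_vault(Path("vault"), schema_name="AGENTS.md")` would produce the same tree with the schema file named for Codex instead.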

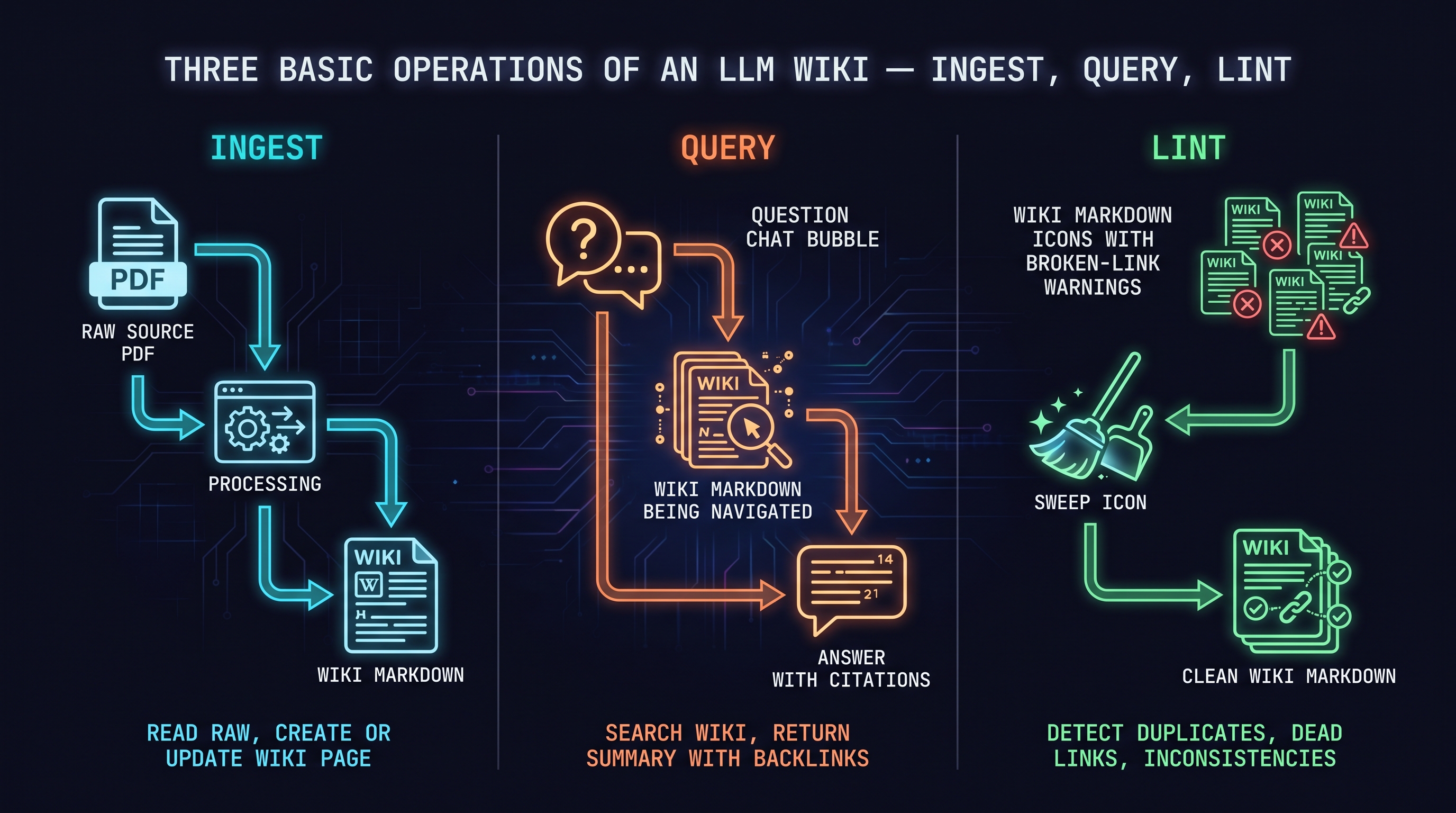

Three basic operations:

- ingest: add a raw source, create/update wiki pages

- query: navigate the wiki to answer questions

- lint: clean up broken links, duplicates, inconsistencies
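Of the three operations, lint is the easiest to approximate without an LLM. The sketch below covers only the broken-link part, under two assumptions that are not from the gist: pages use Obsidian-style `[[wikilinks]]`, and a link resolves by file name alone, ignoring folders. The name `find_broken_links` is hypothetical.

```python
import re
from pathlib import Path

# Matches [[Target]], [[Target|alias]], and [[Target#heading]]; captures only Target.
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def _target_name(raw: str) -> str:
    """Reduce a link target to a lowercase file name without any folder prefix."""
    return raw.strip().split("/")[-1].lower()


def find_broken_links(wiki_root: Path) -> dict[str, list[str]]:
    """Map each page (path relative to wiki_root) to link targets that match no page."""
    pages = list(wiki_root.rglob("*.md"))
    known = {p.stem.lower() for p in pages}
    broken: dict[str, list[str]] = {}
    for page in pages:
        text = page.read_text(encoding="utf-8")
        missing = sorted(
            {t.strip() for t in WIKILINK.findall(text) if _target_name(t) not in known}
        )
        if missing:
            broken[page.relative_to(wiki_root).as_posix()] = missing
    return broken
```

Embedded attachments such as `![[figure.png]]` would also be reported, because only `.md` files count as known pages. Detecting duplicates and inconsistencies needs judgment about content, which is where the LLM comes in.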

10.3 How a Community Adopts a Pattern

After Karpathy's gist, the community response was rapid:

- [Joshi, 2026]: "How I built my personal LLM Wiki in a weekend"

- [Paige, 2026]: Obsidian second brain + Claude Code integration

- [Maker, 2026]: "How I took Karpathy's LLM Wiki and built an AI-powered second brain"

- [Starmorph, 2026]: Step-by-step installation guide

Nate Herk's video "Karpathy 10x'ed Claude Code" [Herk, 2026] explains the spread best: "LLM Wiki is a 10x tool — it converts a one-shot chat tool into a compounding personal knowledge engine." (YouTube ID: 20d5cSkSvcU, uploaded 2026-04-05)

10.4 The Counter-Argument: You Need a Knowledge Graph

As the community adopted LLM Wiki, limitations emerged. The InfraNodus critique [infranodusllmwiki2026] states:

"Karpathy's LLM Wiki is flat-file. It can't express relationships between entities. 'A cited B', 'C is a follow-on to D' — relational knowledge is hard to express in markdown files. A knowledge graph layer is needed."

This is a valid critique. But it should be understood in context: Karpathy's pattern is not "the perfect knowledge management system" — it's a good starting point that works well with LLMs. For use cases requiring complex relational knowledge, adding a knowledge graph layer is worth considering.
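One lightweight way to add such a layer is to keep the markdown files and derive typed edges from them. The sketch below assumes a hypothetical convention that is neither Karpathy's nor the critique's: each relation sits on its own line as `relation:: [[Target]]`. The function names are illustrative as well.

```python
import re
from collections import defaultdict

# Hypothetical convention: one typed relation per line, e.g. "cites:: [[Paper B]]".
RELATION = re.compile(r"^\s*([a-z_]+)::\s*\[\[([^\]|#]+)", re.MULTILINE)


def extract_triples(pages: dict[str, str]) -> list[tuple[str, str, str]]:
    """Return (subject, relation, object) triples from a title -> markdown mapping."""
    triples = []
    for title, text in pages.items():
        for relation, target in RELATION.findall(text):
            triples.append((title, relation, target.strip()))
    return triples


def build_graph(triples):
    """Index triples as subject -> relation -> list of objects."""
    graph = defaultdict(lambda: defaultdict(list))
    for subject, relation, obj in triples:
        graph[subject][relation].append(obj)
    return graph


pages = {
    "Paper A": "# Paper A\ncites:: [[Paper B]]\nfollow_on_to:: [[Paper D]]\n",
    "Paper B": "A plain [[Paper A]] link carries no relation type.\n",
}
graph = build_graph(extract_triples(pages))
print(graph["Paper A"]["cites"])  # ['Paper B']
```

Plain wikilinks are skipped on purpose: they say that two pages are connected but not how, which is the gap the critique points at.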

10.5 "Farzapedia" — The Personalization Argument

Karpathy introduced the concept [Karpathy, 2026]: your own Wikipedia. Your interests, papers you've read, projects you've worked on — all defined from your perspective. Instead of everyone sharing the same Wikipedia, each person has a knowledge base optimized for their own context.

This is the theme of Chapter 11: connecting this pattern to your own activity.

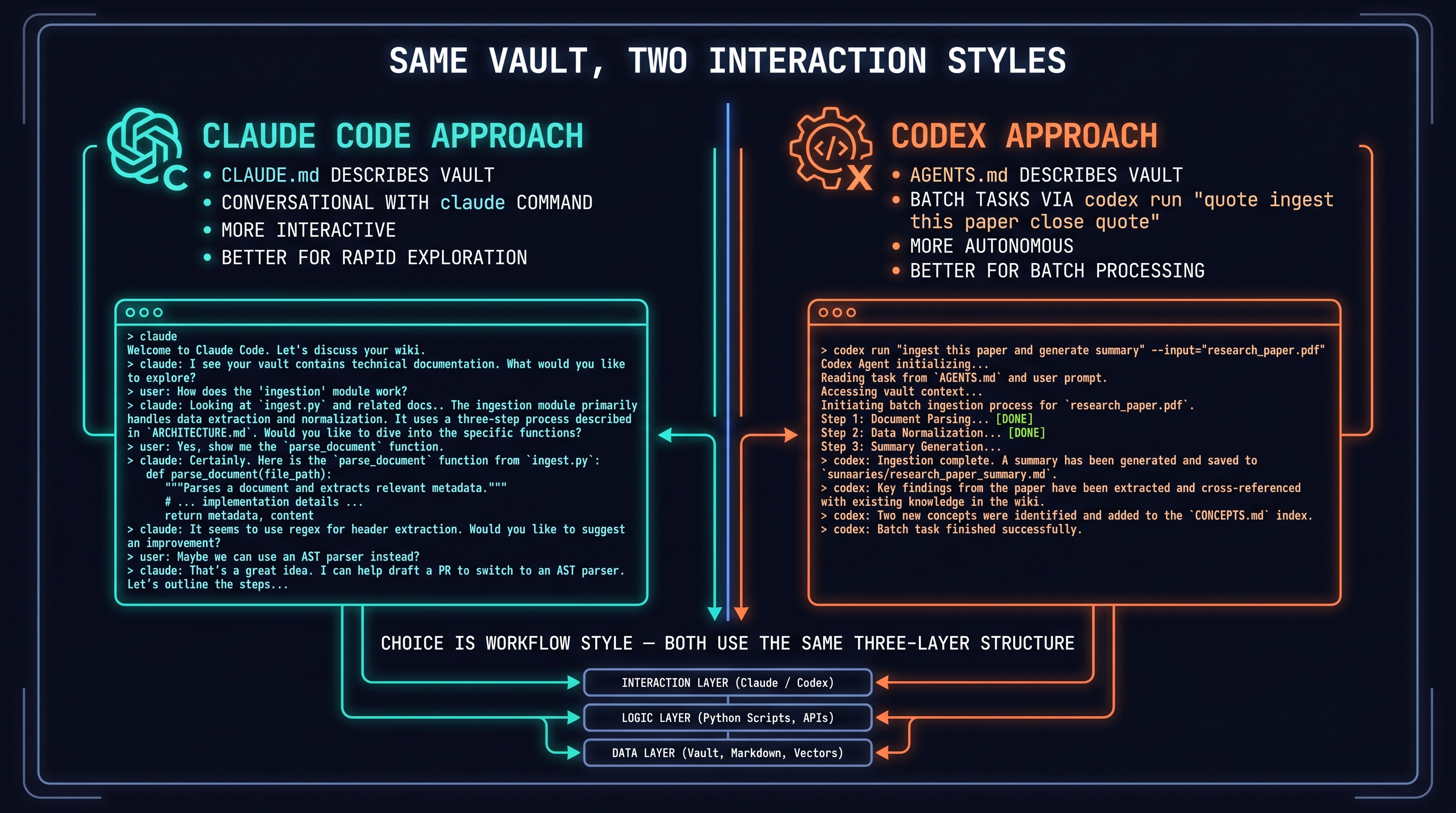

10.6 Claude Code vs Codex for LLM Wiki

Karpathy's original implementation used Claude Code with Obsidian. Codex can fill the same role; the two approaches differ mainly in how you drive them:

Claude Code approach: Describe the vault structure in CLAUDE.md; use claude conversationally to update the wiki. More interactive, better for rapid exploration.

Codex approach: Describe the vault structure in AGENTS.md; run tasks via codex exec "ingest this paper". More autonomous, better for batch processing.

Both use the same three-layer structure. The choice is a matter of working style.
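For the batch style, a small driver can queue one `codex exec` call per raw source. This is a sketch under assumptions: the prompt wording, the file suffixes, and the function names are illustrative, and whether Codex needs extra configuration to write into the vault is not covered here. It defaults to a dry run that only prints the commands.

```python
import subprocess
from pathlib import Path


def build_ingest_commands(raw_root: Path, suffixes=(".md", ".pdf")) -> list[list[str]]:
    """Build one `codex exec` command per raw source, in sorted path order."""
    commands = []
    for source in sorted(raw_root.rglob("*")):
        if source.is_file() and source.suffix.lower() in suffixes:
            # Hypothetical prompt; the schema file tells the agent what "ingest" means.
            prompt = f"ingest this source: {source.as_posix()}"
            commands.append(["codex", "exec", prompt])
    return commands


def run_batch(commands, dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for command in commands:
        print(" ".join(command))
        if not dry_run:
            subprocess.run(command, check=True)
```

A typical call would be `run_batch(build_ingest_commands(Path("vault/raw")))`, switching to `dry_run=False` once the printed commands look right.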

References

- Karpathy, Andrej, "LLM Wiki," gist, 2026. [Karpathy, 2026]

- Karpathy, Andrej, "LLM Wiki tweet," Twitter, 2026. [Karpathy, 2026]

- Karpathy, Andrej, "LLM Wiki talk / post," 2026. [Karpathy, 2026]

- Karpathy, Andrej, "Farzapedia — personalization argument," 2026. [Karpathy, 2026]

- Joshi, "How I built my personal LLM Wiki," Medium, 2026. [Joshi, 2026]

- Paige, "Second brain setup," 2026. [Paige, 2026]

- Aimaker, "AI-powered second brain from LLM Wiki," 2026. [Maker, 2026]

- Starmorph, "LLM Wiki step-by-step guide," 2026. [Starmorph, 2026]

- MindStudio, "Karpathy Wiki implementation," 2026. [MindStudio, 2026]

- Herk, Nate, "Karpathy 10x'ed Claude Code," YouTube (ID: 20d5cSkSvcU), 2026-04-05. [Herk, 2026]

- Infranodus, "LLM Wiki needs a knowledge graph," 2026. [infranodusllmwiki2026]

- LLM Wiki Full Setup, "Canonical demo," 2026. [llmwikifullsetup2026]