Chapter 9: Advanced Multi-Agent Codex — Dependency Graphs and Meta-Harness

9.1 The Self-Modifying Harness

Chapter 6 covered how to declare dependency graphs and let agents execute tasks autonomously. This chapter goes one step further: what if the agents themselves improve the harness they follow?

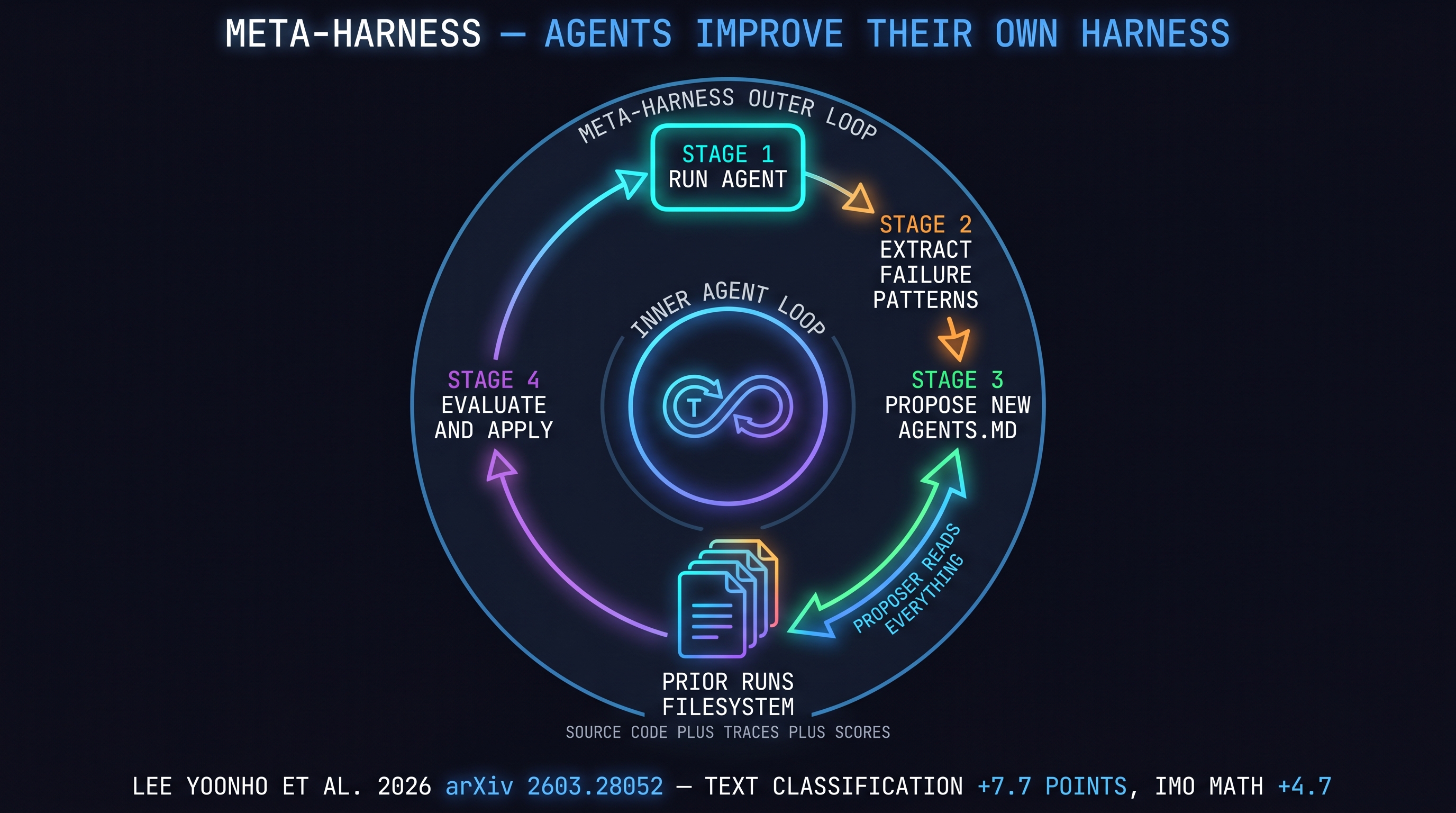

This is the core idea of the Meta-Harness paper (arXiv 2603.28052) [Um, 2026]. A proposer agent reads the source code, execution traces, and scores of all prior harness candidates from the filesystem, then proposes a new harness, and the process repeats. Reported results:

- Text classification: +7.7 points over SOTA, 4x fewer tokens

- IMO math: +4.7 points average across 5 models

- TerminalBench-2: exceeds best hand-designed baselines

The harness optimizes itself.

9.2 Dependency Graphs — In Practice

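Let's express the Chapter 6 DAG concept in code, modeled on Claude Code's Agent Teams feature [Anthropic, 2026]. Treat the snippet as illustrative: the module name and the exact call signatures are assumptions, not a documented SDK.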

```javascript
// Illustrative sketch: module name and TeamCreate/TaskCreate signatures are assumptions
const { TeamCreate, TaskCreate } = require('@anthropic/claude-code');

// CommonJS modules do not allow top-level await, so wrap the flow in an async function
async function main() {
  // Create a team
  const team = await TeamCreate({
    members: ['architect', 'implementer', 'tester', 'reviewer'],
  });

  // Declare the task dependency graph (blockedBy lists prerequisite task ids)
  const tasks = [
    TaskCreate({ id: 't1', name: 'design-api', assignee: 'architect' }),
    TaskCreate({ id: 't2', name: 'implement-core', assignee: 'implementer',
                 blockedBy: ['t1'] }),
    TaskCreate({ id: 't3', name: 'write-tests', assignee: 'tester',
                 blockedBy: ['t2'] }),
    TaskCreate({ id: 't4', name: 'review-all', assignee: 'reviewer',
                 blockedBy: ['t2', 't3'] }),
  ];

  // Agents autonomously claim unblocked tasks and execute them
  await team.execute(tasks);
}

main().catch(console.error);
```

Agents start with unblocked tasks, i.e. those with no pending dependencies (here, only t1). When t1 completes, t2 unlocks; when t2 completes, t3 unlocks; t4 unlocks only once both t2 and t3 have completed.
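The unlocking rule can be made concrete with a small scheduler. This is a minimal sketch in plain Python, not the Agent Teams implementation: it groups tasks into "waves", where each wave holds every task whose `blockedBy` prerequisites have all completed (Kahn's topological sort, processed level by level). The task ids mirror the example above; the `execution_waves` helper is hypothetical.

```python
from typing import Dict, List


def execution_waves(blocked_by: Dict[str, List[str]]) -> List[List[str]]:
    """Group tasks into waves; every task in a wave has all prerequisites done."""
    # Number of unfinished prerequisites per task
    pending = {task: len(deps) for task, deps in blocked_by.items()}
    # Reverse edges: prerequisite -> tasks it unblocks
    dependents: Dict[str, List[str]] = {task: [] for task in blocked_by}
    for task, deps in blocked_by.items():
        for dep in deps:
            dependents[dep].append(task)

    waves: List[List[str]] = []
    ready = sorted(task for task, n in pending.items() if n == 0)
    while ready:
        waves.append(ready)
        next_ready = []
        for done in ready:  # mark every task in this wave as completed
            for task in dependents[done]:
                pending[task] -= 1
                if pending[task] == 0:
                    next_ready.append(task)
        ready = sorted(next_ready)

    # Tasks that never became ready are stuck in a cycle
    if sum(len(wave) for wave in waves) != len(blocked_by):
        raise ValueError("dependency cycle detected")
    return waves


graph = {
    "t1": [],            # design-api
    "t2": ["t1"],        # implement-core
    "t3": ["t2"],        # write-tests
    "t4": ["t2", "t3"],  # review-all
}
print(execution_waves(graph))  # [['t1'], ['t2'], ['t3'], ['t4']]
```

Because t3 already depends on t2, this graph is a chain and runs fully sequentially. If t3 depended only on t1, t2 and t3 would share a wave and could be claimed by different agents in parallel.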

Observability: using hooks as described in [IndyDevDan, 2026], log each task's start and completion to trace the full graph execution.
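A hook for this purpose can be a small script that appends one JSON line per event. This is a sketch under assumptions: Claude Code runs hook commands registered in `.claude/settings.json` (for example under a `PostToolUse` event) and passes the event as JSON on stdin, but the payload field names used here (`hook_event_name`, `session_id`, `tool_name`, `tool_input`) should be checked against the hooks reference. The log path and the `--hook` flag are hypothetical.

```python
import json
import sys
import time
from pathlib import Path

LOG_PATH = Path("task_events.jsonl")  # hypothetical log location


def log_event(event: dict, log_path: Path = LOG_PATH) -> dict:
    """Append one compact record per hook invocation to a JSONL file."""
    record = {
        "ts": time.time(),
        # Assumed payload field names; missing fields are recorded as None
        "event": event.get("hook_event_name"),
        "session": event.get("session_id"),
        "tool": event.get("tool_name"),
        "input": event.get("tool_input"),
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record


if __name__ == "__main__" and "--hook" in sys.argv[1:]:
    # Registered as e.g. `python3 log_hook.py --hook`; the event arrives on stdin
    log_event(json.load(sys.stdin))
```

Replaying the JSONL file in timestamp order reconstructs when each agent picked up and finished its tasks.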

9.3 Meta-Harness — Self-Optimization

A meta-harness is an outer loop where agents improve the harness (AGENTS.md / SKILL.md / config.toml) they follow. Two approaches:

Approach 1: GEPA-Style Feedback Loop

From [Jagtap, 2026], which applies GEPA (Genetic-Pareto), a reflective prompt-evolution optimizer, to Codex AGENTS.md files:

- Run agent → collect results

- Extract failure patterns (which AGENTS.md instructions were ignored?)

- Propose new rules for AGENTS.md

- Apply to next run → repeat
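The failure-extraction step can start as simple counting. This is not GEPA's method (GEPA has a model reflect over full traces); it is a deliberately simpler heuristic, assuming each run record carries a hypothetical `violated_rules` list produced by a checker or judge model. Rules violated repeatedly are the strongest candidates for rewording or for new rules.

```python
from collections import Counter
from typing import Dict, List, Tuple


def failure_patterns(runs: List[Dict], min_count: int = 2) -> List[Tuple[str, int]]:
    """Count how often each AGENTS.md rule was violated across failed runs."""
    counts = Counter(
        rule
        for run in runs
        if not run["passed"]  # only failed runs contribute
        for rule in run["violated_rules"]
    )
    # Keep recurring violations, most frequent first
    return [(rule, n) for rule, n in counts.most_common() if n >= min_count]


runs = [
    {"passed": False, "violated_rules": ["run tests first", "no edits to generated/"]},
    {"passed": False, "violated_rules": ["run tests first"]},
    {"passed": True, "violated_rules": ["style"]},
]
print(failure_patterns(runs))  # [('run tests first', 2)]
```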

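```python
# Meta-harness outer loop (pseudocode). run_agent, extract_failures,
# proposer_agent, evaluate and filesystem are placeholders.
MAX_ITERATIONS = 20  # bound the search instead of looping forever
best_score = evaluate(current_agents_md)  # score each harness only once

for _ in range(MAX_ITERATIONS):
    results = run_agent(current_agents_md, tasks)
    failures = extract_failures(results)
    new_agents_md = proposer_agent(
        current_agents_md=current_agents_md,
        prior_runs=filesystem.read_all_runs(),  # full history, not summaries
        failures=failures,
    )
    new_score = evaluate(new_agents_md)
    if new_score > best_score:  # accept only improvements
        current_agents_md, best_score = new_agents_md, new_score
```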
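To see the accept-if-better logic actually run, here is a toy version with every placeholder stubbed out. The stubs are hypothetical: each task "passes" only if one specific rule is present in the harness, and the proposer adds the rule for the first failing task. Only the loop structure corresponds to the pseudocode; a real system would run an agent and score it on held-out tasks.

```python
from typing import Dict, List

# Toy task set (hypothetical): each task needs one rule in the harness to pass
TASKS: Dict[str, str] = {
    "fix-bug": "run the test suite before committing",
    "add-endpoint": "update the OpenAPI spec",
    "refactor": "do not edit files under generated/",
}


def run_agent(harness: List[str], tasks: Dict[str, str]) -> Dict[str, bool]:
    return {task: rule in harness for task, rule in tasks.items()}


def extract_failures(results: Dict[str, bool]) -> List[str]:
    return [task for task, ok in results.items() if not ok]


def proposer_agent(harness: List[str], failures: List[str]) -> List[str]:
    # Propose a harness that addresses the first failing task
    return harness + [TASKS[failures[0]]] if failures else harness


def evaluate(harness: List[str]) -> float:
    results = run_agent(harness, TASKS)
    return sum(results.values()) / len(results)  # fraction of tasks passed


def optimize(harness: List[str], max_iterations: int = 10) -> List[str]:
    best_score = evaluate(harness)
    for _ in range(max_iterations):
        failures = extract_failures(run_agent(harness, TASKS))
        if not failures:  # nothing left to fix
            break
        candidate = proposer_agent(harness, failures)
        score = evaluate(candidate)
        if score > best_score:  # accept only strict improvements
            harness, best_score = candidate, score
    return harness


final = optimize(["keep commits small"])
print(evaluate(final))  # 1.0
```

The harness grows by one rule per accepted iteration (score 0 → 1/3 → 2/3 → 1.0) and the loop stops early once no failures remain.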

Approach 2: Filesystem-Based Experience Accumulation

The key insight from the Meta-Harness paper [Um, 2026]: don't compress feedback — preserve it in full. Store source code + traces + scores from every prior run in the filesystem; the proposer agent reads all of it. When you summarize feedback, you lose "why this strategy failed."

The implication for harness design: CLAUDE.md / AGENTS.md is more effective when managed as a "living document that evolves with execution history" rather than a "static file of the current version."
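A minimal way to keep that history uncompressed is one directory per run. The layout below (`<root>/<run_id>/AGENTS.md`, `trace.jsonl`, `score.json`) is an assumption for illustration, not the paper's format. Concatenating everything into one string is shown only for simplicity; a proposer with file-reading tools can browse the directories directly.

```python
import json
from pathlib import Path
from typing import List


def save_run(root: Path, run_id: str, harness: str, trace: List[dict], score: float) -> Path:
    """Persist one candidate in full: harness source, raw trace, and score."""
    run_dir = root / run_id
    run_dir.mkdir(parents=True, exist_ok=True)
    (run_dir / "AGENTS.md").write_text(harness, encoding="utf-8")
    with (run_dir / "trace.jsonl").open("w", encoding="utf-8") as f:
        for step in trace:  # keep every step, not a summary
            f.write(json.dumps(step) + "\n")
    (run_dir / "score.json").write_text(json.dumps({"score": score}), encoding="utf-8")
    return run_dir


def build_proposer_context(root: Path) -> str:
    """Concatenate every prior run verbatim (no summarization) for the proposer."""
    sections = []
    for run_dir in sorted(p for p in root.iterdir() if p.is_dir()):
        score = json.loads((run_dir / "score.json").read_text(encoding="utf-8"))["score"]
        harness = (run_dir / "AGENTS.md").read_text(encoding="utf-8")
        trace = (run_dir / "trace.jsonl").read_text(encoding="utf-8")
        sections.append(
            f"## run {run_dir.name} (score={score})\n"
            f"### AGENTS.md\n{harness}\n"
            f"### trace\n{trace}"
        )
    return "\n".join(sections)
```

Because the raw trace survives, the proposer can see not just that run-001 scored lower but which step failed and why.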

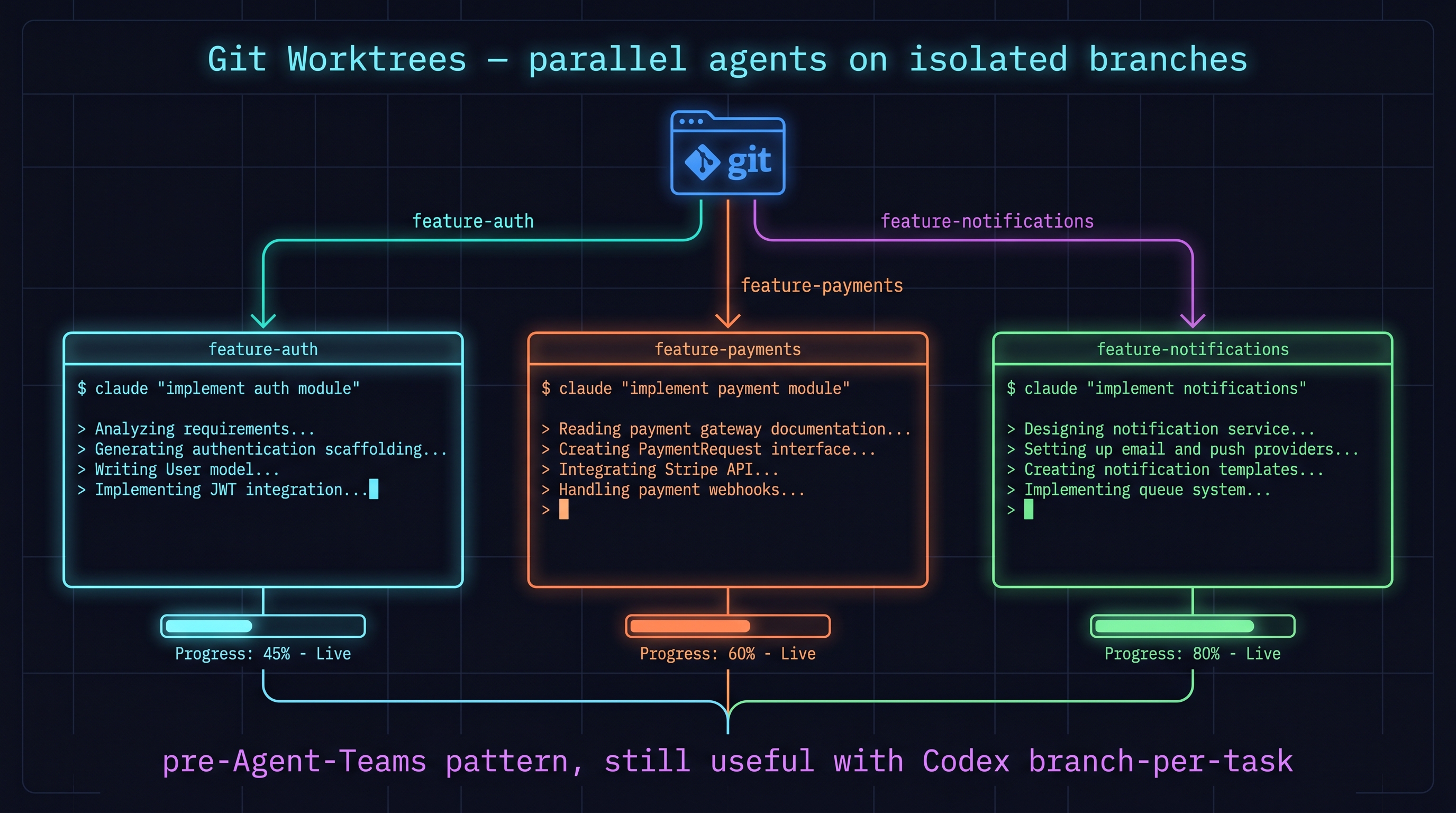

9.4 Git Worktrees — Parallelization Before Agent Teams

[IndyDevDan, 2026] documents how to achieve parallelization with git worktrees before the Agent Teams API existed:

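```bash
# One worktree per existing branch
# (to create the branch at the same time: git worktree add -b feature/auth ../feature-auth)
git worktree add ../feature-auth feature/auth
git worktree add ../feature-payments feature/payments
git worktree add ../feature-notifications feature/notifications

# Run each line in its own terminal, started from the main repo,
# so the three Claude Code sessions work in parallel
cd ../feature-auth && claude "implement auth module"
cd ../feature-payments && claude "implement payment module"
cd ../feature-notifications && claude "implement notifications"
```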

This pattern served as an important bridge before official parallelization support, and it still works alongside Codex's branch-per-task workflow.
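The same setup can be scripted so that the agents genuinely run concurrently rather than one terminal at a time. This sketch assumes `git worktree add -b <branch> <path>` (creating new branches from HEAD; drop `-b` for existing branches) and the Claude Code CLI's non-interactive `-p` print mode; the feature list is hypothetical, and non-interactive runs may need file-edit permissions granted in advance.

```python
import subprocess
from pathlib import Path
from typing import Dict, List, Tuple

# Hypothetical work split: branch name -> prompt for that branch's agent
FEATURES: Dict[str, str] = {
    "feature/auth": "implement auth module",
    "feature/payments": "implement payment module",
    "feature/notifications": "implement notifications",
}


def plan_commands(repo: Path, features: Dict[str, str]) -> List[Tuple[List[str], List[str], Path]]:
    """Return (worktree command, agent command, working dir) for each branch."""
    plans = []
    for branch, prompt in features.items():
        worktree = repo.parent / branch.replace("/", "-")  # e.g. ../feature-auth
        add_cmd = ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(worktree)]
        agent_cmd = ["claude", "-p", prompt]  # -p: non-interactive print mode
        plans.append((add_cmd, agent_cmd, worktree))
    return plans


def launch(repo: Path) -> None:
    plans = plan_commands(repo, FEATURES)
    for add_cmd, _, _ in plans:
        subprocess.run(add_cmd, check=True)  # create every worktree first
    # Start all agents at once, each inside its own worktree
    procs = [subprocess.Popen(agent_cmd, cwd=wt) for _, agent_cmd, wt in plans]
    for proc in procs:  # wait for every agent to finish
        proc.wait()
```

Separating `plan_commands` from `launch` keeps the command construction testable without touching git or starting agents.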

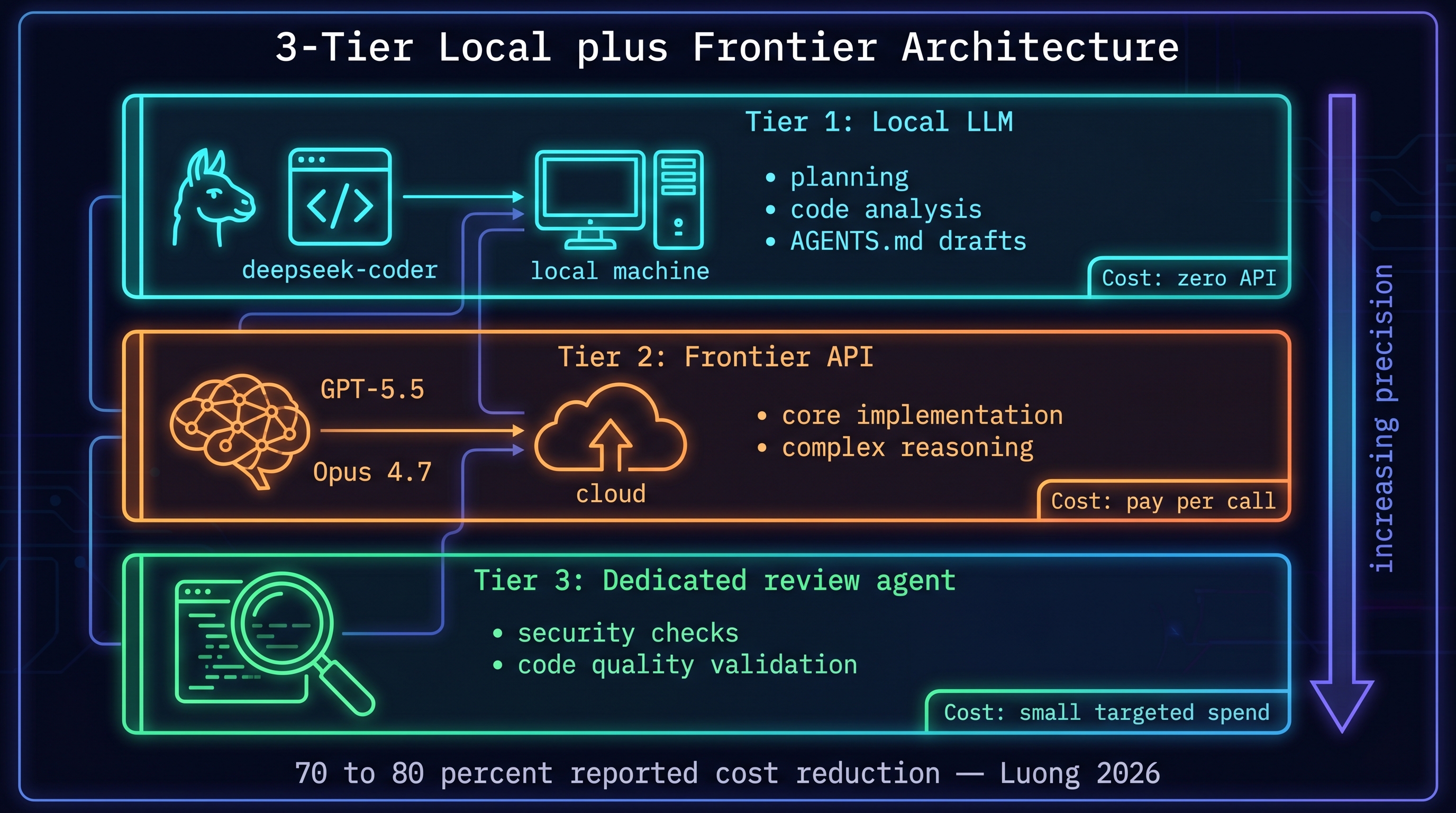

9.5 Three-Tier Local Model Architecture

From [NGUYEN, 2026]'s production architecture:

- Tier 1: Local LLM (Llama / DeepSeek-Coder): planning, code analysis, AGENTS.md drafts

- Tier 2: Frontier API (GPT-5.5 / Opus 4.7): core implementation, complex reasoning

- Tier 3: Dedicated review agent: security checks, code quality validation

Tier 1 runs locally, so it incurs no API cost; the frontier API is used only for Tier 2. [NGUYEN, 2026] reports a 70-80% reduction in overall cost.
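In code, the tiering reduces to a routing table from task kind to tier. This is a sketch, not [NGUYEN, 2026]'s implementation: the task-kind keys and model identifiers are placeholders, and sending unknown kinds to the frontier tier is one possible default.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Tier:
    name: str
    model: str  # placeholder identifier, not a real endpoint name


TIERS: Dict[int, Tier] = {
    1: Tier("local", "local-coder-model"),
    2: Tier("frontier", "frontier-api-model"),
    3: Tier("review", "review-agent"),
}

# Task kinds from the tier descriptions above, mapped to the tier that handles them
ROUTES: Dict[str, int] = {
    "planning": 1,
    "code_analysis": 1,
    "agents_md_draft": 1,
    "implementation": 2,
    "complex_reasoning": 2,
    "security_check": 3,
    "quality_review": 3,
}


def route(task_kind: str) -> Tier:
    """Pick a tier for a task; unknown kinds escalate to the frontier tier."""
    return TIERS[ROUTES.get(task_kind, 2)]


print(route("planning").name)  # local
```

Escalating unknown work to Tier 2 trades some cost for safety; defaulting to Tier 1 instead would favor cost.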

In a meta-harness context, the Tier 1 local model can run rapid experiments on AGENTS.md improvements while the Tier 2 frontier model evaluates the results, so each experiment costs far less.
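One way to realize "Tier 1 experiments, Tier 2 evaluates" is a shortlist: generate many candidate harnesses locally, rank them with a cheap local score, and spend frontier calls only on the top few. This is an illustrative sketch; the `propose`, `screen`, and `evaluate` callables are hypothetical stand-ins for the local model, a local heuristic, and the frontier evaluator.

```python
from typing import Callable, List, Tuple


def tiered_search(
    seed: str,
    propose: Callable[[str, int], List[str]],  # cheap: Tier 1 local model
    screen: Callable[[str], float],            # cheap: local pre-screen score
    evaluate: Callable[[str], float],          # expensive: Tier 2 frontier
    n_candidates: int = 20,
    top_k: int = 3,
) -> Tuple[str, float, int]:
    """Generate many candidates locally; spend frontier calls only on the top_k."""
    candidates = propose(seed, n_candidates)
    shortlist = sorted(candidates, key=screen, reverse=True)[:top_k]
    best, best_score = seed, evaluate(seed)  # baseline costs one frontier call
    frontier_calls = 1
    for cand in shortlist:
        score = evaluate(cand)
        frontier_calls += 1
        if score > best_score:
            best, best_score = cand, score
    return best, best_score, frontier_calls


best, score, calls = tiered_search(
    "base",
    propose=lambda seed, n: [f"{seed}\nrule {i}" for i in range(n)],
    screen=lambda c: int(c.rsplit(" ", 1)[-1]),
    evaluate=lambda c: c.count("rule") + (0.5 if c.endswith("18") else 0.0),
)
print(calls)  # 4
```

With 20 locally generated candidates and `top_k=3`, the frontier model is called 4 times instead of 21.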

9.6 Bridge to Chapter 12 — Toward Autonomous Research

Karpathy's autoresearch experiment [Karpathy, 2026] — 700 experiments optimizing GPT-2 training in 2 days — is this chapter's meta-harness applied to a research domain. The agent designs experiments, runs them, analyzes results, proposes next experiments. The harness becomes the research loop itself.

This is the starting point of the AI Scientist covered in Chapter 12.

References

- Anthropic, "Agent Teams," 2026. [Anthropic, 2026]

- Lee, Yoonho et al., "Meta-Harness: End-to-End Optimization of Model Harnesses," arXiv 2603.28052, 2026. [Um, 2026]

- B327Roy, "Multi-agent retrospective," 2026. [brunch), 2026]

- Jagtap, "Codex AGENTS.md auto-optimization (GEPA)," 2026. [Jagtap, 2026]

- Fulton, Alex, "Inside the agent harness," 2026. [Fulton, 2026]

- Luong, "Local LLMs with frontier — 3-tier," 2026. [NGUYEN, 2026]

- IndyDevDan, "Claude Code hooks for multi-agent observability," 2026. [IndyDevDan, 2026]

- IndyDevDan, "Pre-Agent-Teams parallelization with git worktrees," 2026. [IndyDevDan, 2026]

- Karpathy, Andrej, "Autoresearch," 2026. [Karpathy, 2026]

- Intuition, "Codex as superapp — multi-agent," 2026. [IntuitionLabs, 2026]