Chapter 8: After GPT-5.5 — Patterns the Community Discovered

8.1 The Arc: April 16 → April 23 → First Month

Chapter 7 ended at April 23 with GPT-5.5 landing in Codex. But to understand why the community reacted the way it did, you have to start at April 16 — not April 23.

When Opus 4.7 shipped on April 16 with its "fewer subagents by default" change, it didn't just change a capability — it created the ambiguity tax. Seven days of developers discovering that prompts that worked on 4.6 now required explicit instructions. Seven days of frustration piling up in r/ClaudeAI, HN threads, and X posts [MerchMind AI, 2026; Xlork Blog, 2026].

Then April 23 arrives, GPT-5.5 ships in Codex, and the headline from the 500+ Reddit comment synthesis [contributor, 2026] reads: "Claude Code is higher quality but unusable. Codex is slightly lower quality but actually usable."

That word "unusable" wasn't born on April 23. It was seeded on April 16.

There is also a reason to read that headline with caution. As far back as September 2025, when Codex adoption first accelerated, Sam Altman had already questioned how organic this kind of sentiment was [News, 2025]: "The Codex-favorable Reddit sentiment feels very fake."

Both observations can be simultaneously true. The ambiguity tax frustration was real and organic; some of the Codex enthusiasm may have been amplified. This chapter holds both views and focuses on practical patterns with independent corroboration — not Reddit votes.

A note on sources. This chapter draws on Reddit threads, HN discussions, and X posts from the April–May 2026 window. These sources are used with eyes open. Sam Altman flagged in September 2025 that Codex-favorable Reddit sentiment "feels very fake" [News, 2025], and that concern did not disappear with the GPT-5.5 launch. Organizationally amplified voices may be present in the corpus. Every recipe below is backed by at least one independent experiment or multi-source convergence, not by Reddit vote counts alone. Where a source is community sentiment only, it is labeled as such.

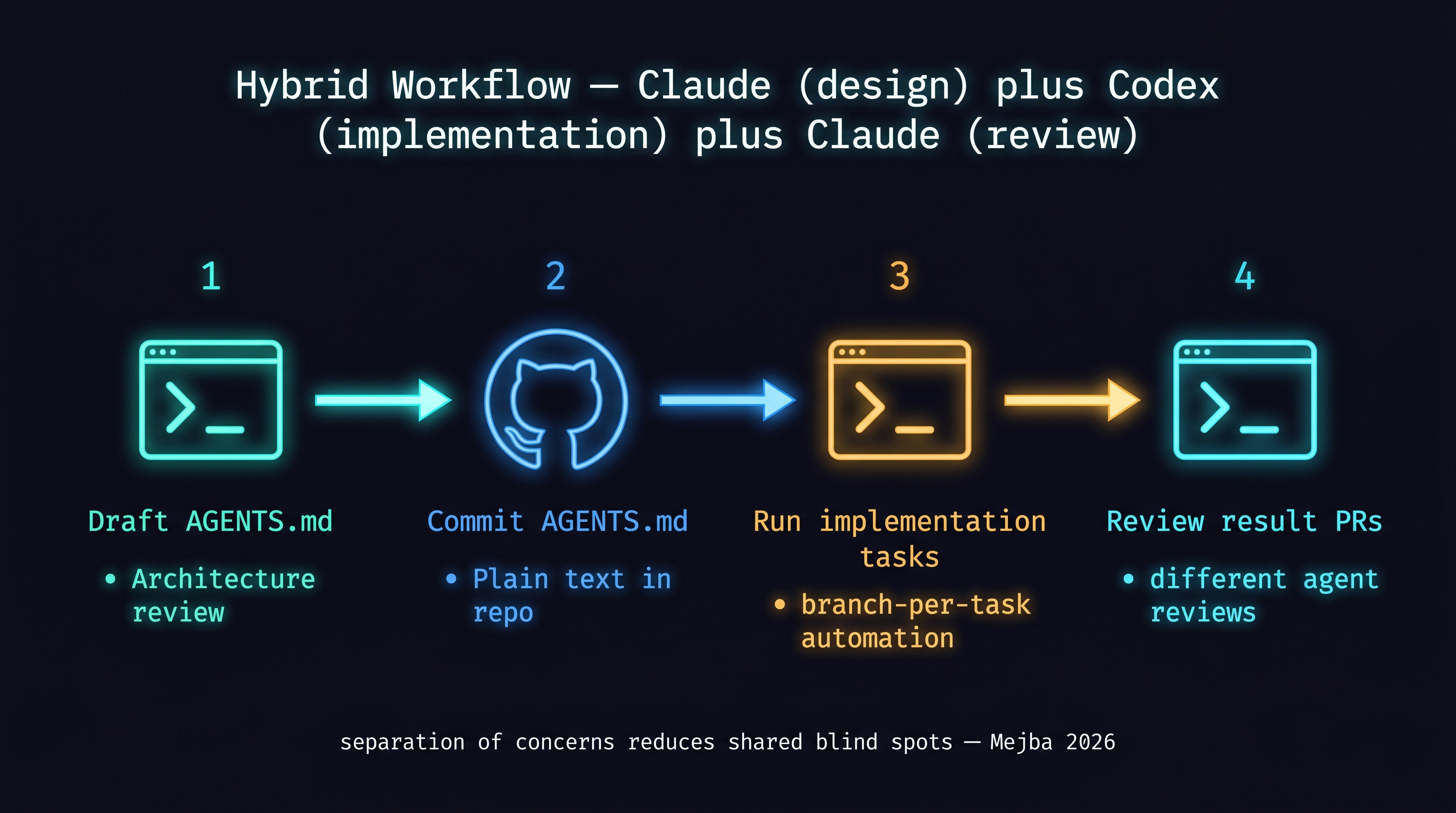

8.2 Recipe 1: Hybrid Use — Claude (Design + Harness) + Codex (Implementation + Verification)

The most common pattern isn't "switching" — it's role separation.

- Claude Code: design, harness authoring (CLAUDE.md / AGENTS.md drafts), architecture review

- Codex: implementation, refactoring, test generation, autonomous long-running tasks

Mejba Ahmed's experiment [Ahmed, 2026] is the extreme version: Codex as a subagent inside Claude Code. Addy Osmani's "Code Orchestra" [Osmani, 2026] extends this to multi-model routing — cheap models for planning, frontier for implementation, dedicated model for security review.

How to apply:

- Use Claude Code to draft AGENTS.md

- Commit AGENTS.md to the repository

- Run implementation tasks with Codex

- Review the resulting PRs with Claude Code
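The role separation above can be written down as a small lookup table, which makes the split explicit and easy to review. This is a minimal sketch, not a real integration: the tool labels, the task-type names, and the `Step`, `assign_tool`, and `plan` helpers are all hypothetical, and assigning the commit step to a person is an assumption the text does not make.

```python
from dataclasses import dataclass

# Hypothetical role table mirroring the split described in this recipe.
# The labels are plain strings, not real CLI or API identifiers.
ROLE_BY_TASK = {
    "design": "claude-code",
    "harness": "claude-code",
    "architecture-review": "claude-code",
    "pr-review": "claude-code",
    "implementation": "codex",
    "refactoring": "codex",
    "test-generation": "codex",
    "long-running": "codex",
    "commit": "human",  # assumption: a person commits the harness file
}


@dataclass(frozen=True)
class Step:
    task_type: str
    description: str


def assign_tool(step: Step) -> str:
    """Return the tool label for a step; unknown task types raise instead of guessing."""
    try:
        return ROLE_BY_TASK[step.task_type]
    except KeyError:
        raise ValueError(f"no role assigned for task type {step.task_type!r}") from None


# The four "How to apply" steps, expressed as data.
WORKFLOW = [
    Step("harness", "Draft AGENTS.md"),
    Step("commit", "Commit AGENTS.md to the repository"),
    Step("implementation", "Run implementation tasks"),
    Step("pr-review", "Review the resulting PRs"),
]


def plan(workflow: list[Step]) -> list[tuple[str, str]]:
    """Pair each step with the tool responsible for it."""
    return [(assign_tool(step), step.description) for step in workflow]


if __name__ == "__main__":
    for tool, description in plan(WORKFLOW):
        print(f"{tool:12s} {description}")
```

Raising on an unknown task type is a deliberate choice in this sketch: a silent default would hide exactly the kind of ambiguity the role split is meant to remove.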

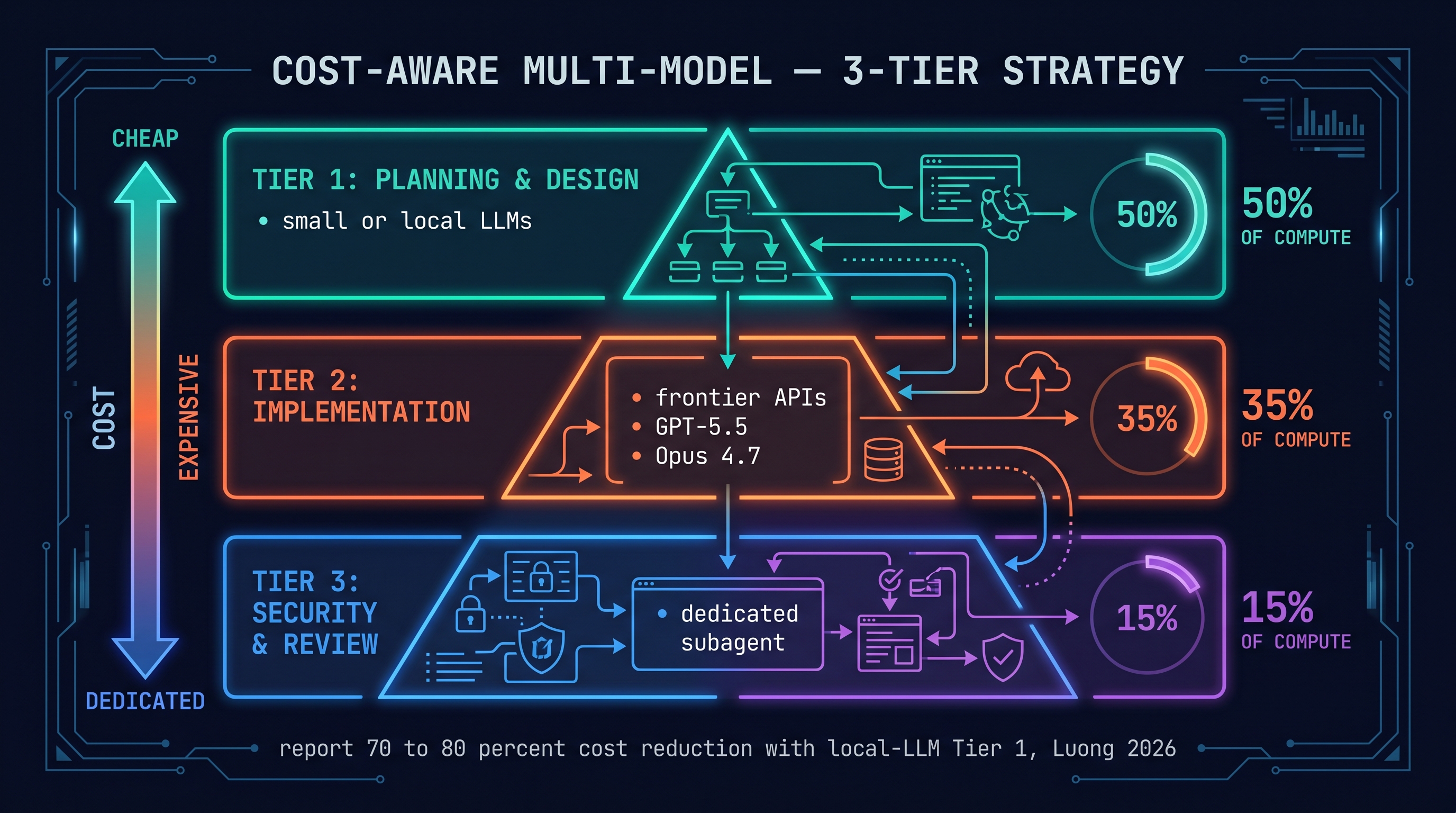

8.3 Recipe 2: Cost-Aware Multi-Model

Using frontier models for everything is wasteful. The 3-tier strategy the community converged on:

Tier 1 (planning, design): cheaper/faster model or lower effort

Tier 2 (implementation): frontier model (GPT-5.5, Opus 4.7)

Tier 3 (security, review): subagent with dedicated task instructions
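The three tiers can be sketched as a routing table. Everything named here is illustrative: the model labels are placeholders rather than real model identifiers, and the task kinds and the `route` helper are hypothetical.

```python
from enum import Enum


class Tier(Enum):
    PLANNING = 1        # planning, design
    IMPLEMENTATION = 2  # implementation
    REVIEW = 3          # security, review


# Placeholder labels; substitute whatever models you actually use.
TIER_MODELS = {
    Tier.PLANNING: "cheap-or-low-effort-model",
    Tier.IMPLEMENTATION: "frontier-model",
    Tier.REVIEW: "review-subagent",
}

# Hypothetical task kinds mapped onto the three tiers.
TASK_TIERS = {
    "plan": Tier.PLANNING,
    "design": Tier.PLANNING,
    "implement": Tier.IMPLEMENTATION,
    "security": Tier.REVIEW,
    "review": Tier.REVIEW,
}


def route(task_kind: str) -> tuple[Tier, str]:
    """Return the tier and model label for a task kind.

    Unknown kinds fall back to the implementation tier. That default is an
    assumption of this sketch: it trades cost for safety.
    """
    tier = TASK_TIERS.get(task_kind, Tier.IMPLEMENTATION)
    return tier, TIER_MODELS[tier]


if __name__ == "__main__":
    for kind in ("plan", "implement", "security", "something-else"):
        tier, model = route(kind)
        print(f"{kind:15s} -> tier {tier.value} ({model})")
```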

Luong's "Local LLMs with Frontier" guide [NGUYEN, 2026] takes a more aggressive version: local LLMs (llama, deepseek-coder) for Tier 1 and API calls only for Tier 2, with reported cost reductions of 70-80%.
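To see how a reduction of that size could arise, the arithmetic can be sketched as a blended-cost calculation. The token volume, the price, and the share of work moved to a local model are hypothetical inputs chosen for illustration, not figures from the guide. Treating local inference as free is also an assumption, since it ignores hardware and electricity.

```python
def blended_cost(
    total_tokens: int,
    local_share: float,
    api_price_per_mtok: float,
    local_price_per_mtok: float = 0.0,  # assumption: local inference treated as free
) -> float:
    """Cost when `local_share` of all tokens is handled locally and the rest via API."""
    if not 0.0 <= local_share <= 1.0:
        raise ValueError("local_share must be between 0 and 1")
    local_tokens = total_tokens * local_share
    api_tokens = total_tokens - local_tokens
    return (local_tokens * local_price_per_mtok + api_tokens * api_price_per_mtok) / 1_000_000


def cost_reduction(total_tokens: int, local_share: float, api_price_per_mtok: float) -> float:
    """Fractional saving relative to sending every token to the API."""
    baseline = blended_cost(total_tokens, 0.0, api_price_per_mtok)
    mixed = blended_cost(total_tokens, local_share, api_price_per_mtok)
    return 1.0 - mixed / baseline


if __name__ == "__main__":
    # Hypothetical workload: 10M tokens at 10 currency units per million tokens.
    for share in (0.70, 0.75, 0.80):
        saving = cost_reduction(10_000_000, share, 10.0)
        print(f"local share {share:.0%} -> saving {saving:.0%}")
```

Under these simplifications the saving equals the share of tokens moved off the API, so a 70-80% reduction implies that roughly 70-80% of token volume sits in Tier 1. Nonzero local costs or uneven per-token prices would lower that figure.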

Osmani's approach [Osmani, 2026]: route the same task through three models, merge only when confidence is high.
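One way to read "merge only when confidence is high" is as an agreement check across the three outputs. The sketch below takes that reading, which is an assumption: the source does not say how confidence is measured, and the `pick_if_confident` helper and model labels are hypothetical. Comparing stripped strings is also a crude stand-in for a semantic or test-based comparison.

```python
from collections import Counter
from typing import Optional


def pick_if_confident(candidates: dict[str, str], min_agreement: int = 2) -> Optional[str]:
    """Return the most common output if enough models produced it, else None.

    `candidates` maps a model label to that model's output for the same task.
    Outputs are compared after stripping surrounding whitespace.
    """
    if not candidates:
        return None
    counts = Counter(text.strip() for text in candidates.values())
    output, votes = counts.most_common(1)[0]
    return output if votes >= min_agreement else None


if __name__ == "__main__":
    outputs = {
        "model-a": "return a + b",
        "model-b": "return a + b\n",
        "model-c": "return b + a",
    }
    merged = pick_if_confident(outputs)
    print("merge" if merged is not None else "hold for human review", "->", merged)
```

Returning `None` instead of the plurality answer keeps the failure mode conservative: without agreement, nothing is merged automatically.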

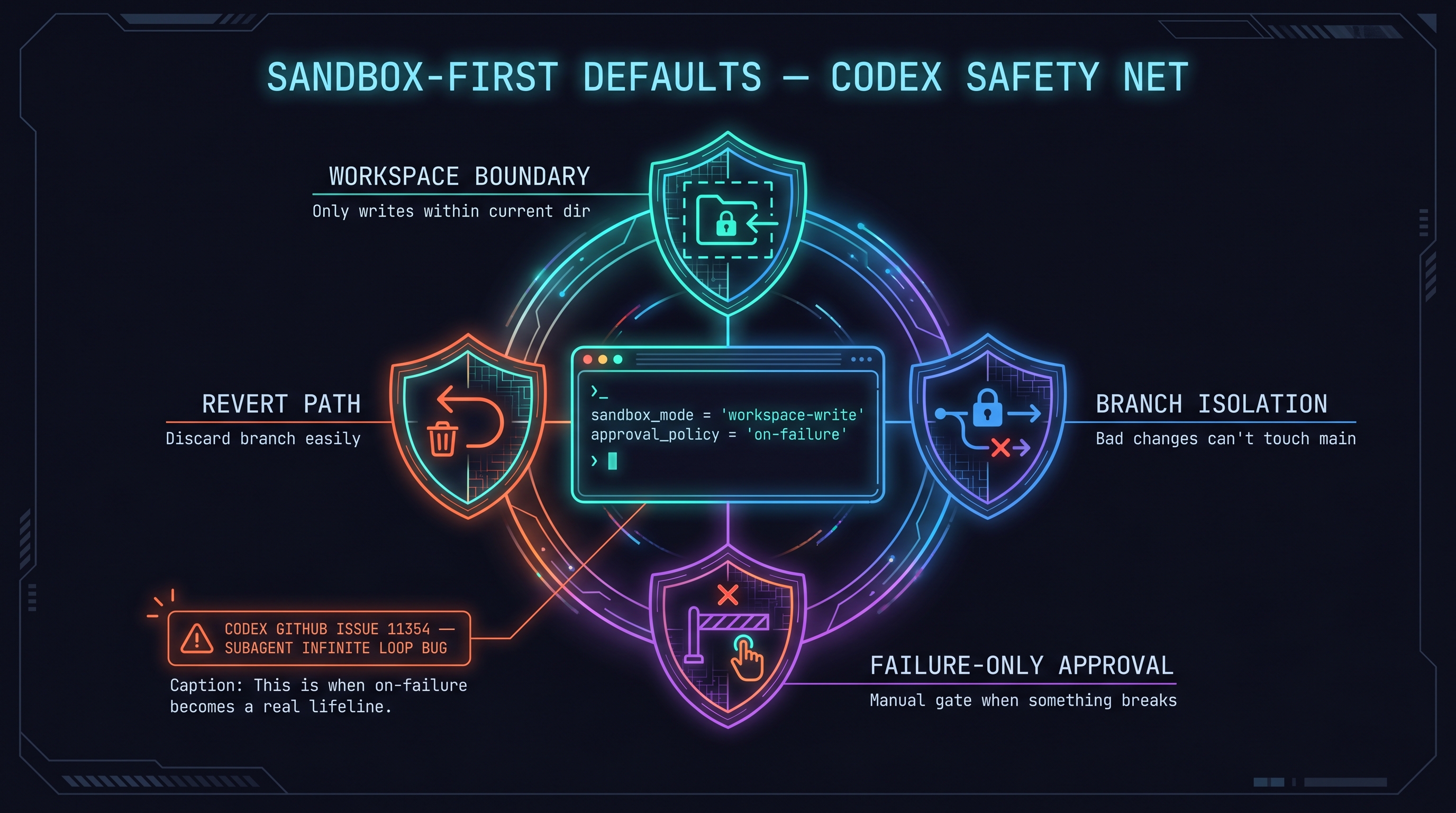

8.4 Recipe 3: Sandbox-First Defaults

The most-shared configuration in the first month after launch [Proser, 2026]:

sandbox_mode = "workspace-write"

approval_policy = "on-request"

Branch-per-task is the default safety net. Each task runs on an independent branch, so bad changes don't touch main.
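Branch-per-task is easy to script. The sketch below only builds the branch name and the git command as an argument list. Running it, for example with `subprocess.run`, is left to the caller. The `task/<id>-<slug>` naming scheme and both helper functions are hypothetical conventions, and `git switch -c` assumes a reasonably recent git.

```python
import re


def branch_name(task_id: int, title: str, prefix: str = "task") -> str:
    """Build a branch name such as 'task/42-fix-login-bug' from a task id and title."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
    return f"{prefix}/{task_id}-{slug}"


def branch_command(task_id: int, title: str, base: str = "main") -> list[str]:
    """Return the git command that creates a fresh task branch starting from `base`."""
    return ["git", "switch", "-c", branch_name(task_id, title), base]


if __name__ == "__main__":
    # Print the command instead of running it, so the sketch has no side effects.
    print(" ".join(branch_command(42, "Fix login bug!")))
```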

Real failure case: Codex GitHub issue #11354 [contributors, 2026]. A subagent entered an infinite loop under specific conditions. Two things this tells you: (1) Codex's subagent system is still early-stage; (2) approval_policy = "on-request" is a real lifeline in these cases.

8.5 Recipe 4: Trust Only Benchmarks You Have Run Yourself

MorphLLM's results [MorphLLM, 2026]:

- Terminal-Bench: Codex 77.3 > Claude Code 65.4

- Blind review preference: Claude Code 67% > Codex 25%

The tool that wins the benchmark doesn't necessarily produce the output people prefer. Benchmarks you haven't run on your own workload are for reference only.

Practical method: pick 3-5 tasks you actually do, run them through both tools, and compare the outputs yourself. For your use case, a preference formed on your own workload is more informative than any published benchmark.
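A small script can keep that comparison honest by hiding which tool produced which output until after you have chosen. This is a minimal sketch under stated assumptions: the `blind` and `tally` helpers and the tool labels are hypothetical, and collecting the outputs from each tool is left out.

```python
import random
from collections import Counter


def blind(task_outputs: dict[str, str], rng: random.Random) -> tuple[list[str], list[str]]:
    """Shuffle one task's outputs.

    Returns (hidden_key, shown_outputs): shown_outputs[i] was produced by
    hidden_key[i]. Show only the outputs to the reviewer; keep the key aside.
    """
    tools = list(task_outputs)
    rng.shuffle(tools)
    return tools, [task_outputs[tool] for tool in tools]


def tally(choices: list[str]) -> dict[str, float]:
    """Turn a list of winning tool labels (one per task) into preference shares."""
    if not choices:
        return {}
    counts = Counter(choices)
    return {tool: wins / len(choices) for tool, wins in counts.items()}


if __name__ == "__main__":
    rng = random.Random()
    key, shown = blind({"claude-code": "<output A>", "codex": "<output B>"}, rng)
    picked_index = 0  # stand-in for the reviewer's choice among `shown`
    winners = [key[picked_index]]
    print(tally(winners))
```

With only 3-5 tasks the resulting shares are anecdotal. They tell you which tool suits your workload, not which tool is better in general.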

8.6 Codex Desktop and Computer Use

Codex Desktop [Science, 2026]:

- Computer Use integration

- 90+ plugins

- Terminal + browser + IDE in a single interface

Some areas are still experimental, but Codex Desktop points toward a future in which Computer Use plays a role in multi-agent orchestration.

8.7 Methodological Honesty: Taking Altman's Warning Seriously

Sam Altman's "feels very fake" comment [News, 2025] isn't just a competitive jab. It's a methodological warning about using community sentiment as a source.

The "community reactions" cited in this book from Reddit / HN / Medium are selective samples. Organizationally amplified voices may be present. The recipes in this chapter include only those backed by independent experiments ([Ahmed, 2026]) or convergence across multiple independent sources ([Osmani, 2026], [NGUYEN, 2026]) — not just "the community said so."

References

- Dev.to, "Claude Code Reddit 500+ comments synthesis," 2026. [contributor, 2026]

- Altman, Sam, "Fake bots on Reddit," X, 2025-09-08. [News, 2025]

- MorphLLM, "Codex vs Claude Code Benchmark," 2026. [MorphLLM, 2026]

- Mejba Ahmed, "I ran Codex inside Claude Code," 2026. [Ahmed, 2026]

- Osmani, Addy, "Code orchestra — multi-model routing," 2026. [Osmani, 2026]

- Luong, "Local LLMs with frontier models — 3-tier setup," 2026. [NGUYEN, 2026]

- Zack Proser, "Codex daily-use review," 2026. [Proser, 2026]

- GitHub, "Codex subagents issue #11354," 2026. [contributors, 2026]

- LetsDS, "Codex Desktop — 90+ plugins," 2026. [Science, 2026]

- Matthew Berman, "GPT-5.5 two-week prerelease review," 2026. [Berman, 2026]

- LLM Stats, "GPT-5.5 vs Opus 4.7," 2026. [Stats, 2026]

- Intuition, "Codex as superapp," 2026. [IntuitionLabs, 2026]

- MerchMind, "Claude Opus 4.7 Backlash Explained," 2026. [MerchMind AI, 2026]

- Xlork, "Why Developers Are Frustrated with Opus 4.7," 2026. [Xlork Blog, 2026]