Chapter 7: Model Release Timeline — Sonnet 4.6 → Opus 4.7 → GPT-5.5

7.1 Sixty-Seven Days

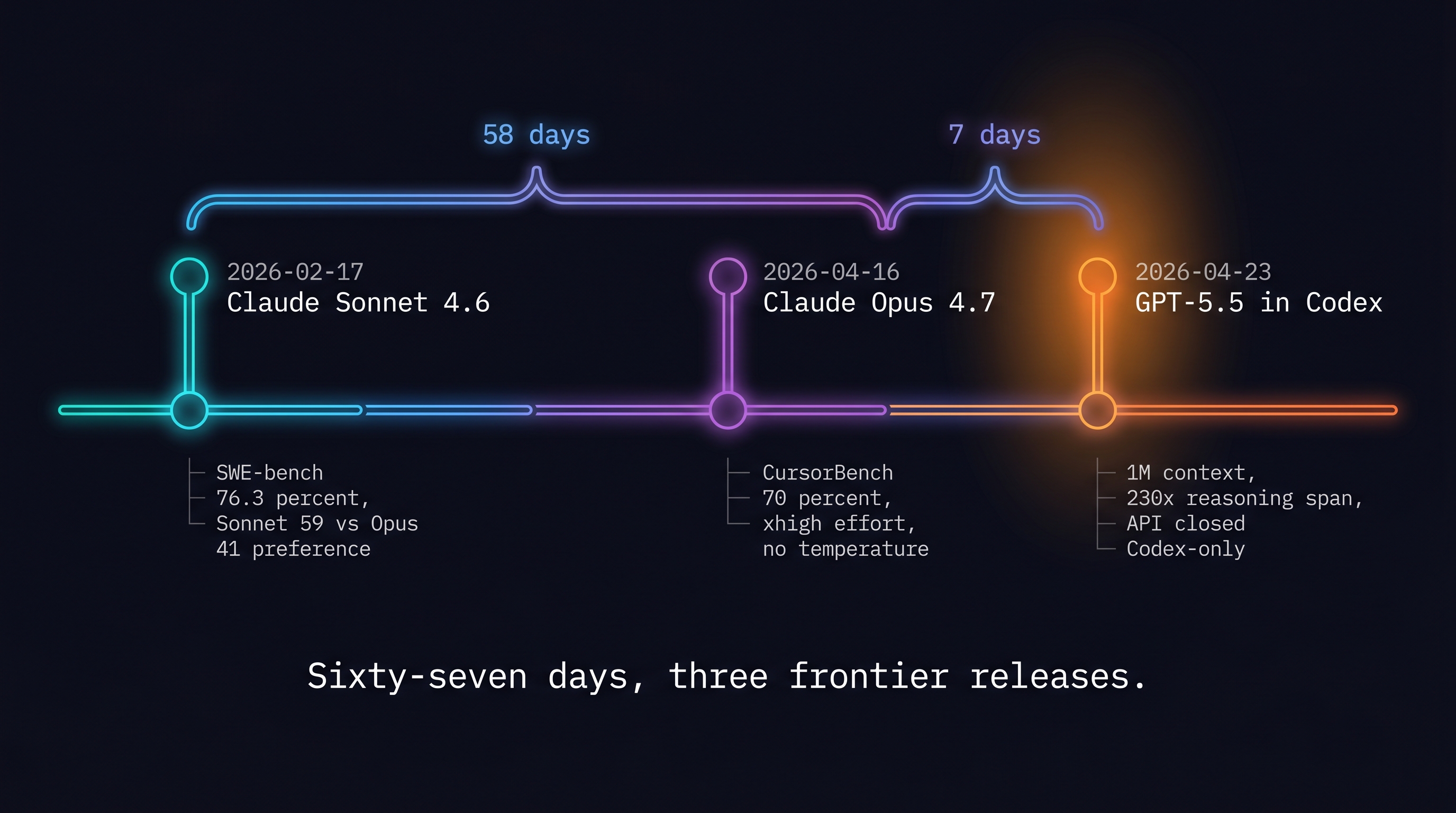

February 17, 2026 to April 23, 2026 is 67 days. Three frontier model releases landed inside that window:

- 2026-02-17: Claude Sonnet 4.6 (Anthropic)

- 2026-04-16: Claude Opus 4.7 (Anthropic)

- 2026-04-23: GPT-5.5 (OpenAI)

Anthropic's Sonnet-to-Opus gap: 58 days. OpenAI answered with GPT-5.5 just 7 days after Opus 4.7.

Two conclusions emerge from this sequence:

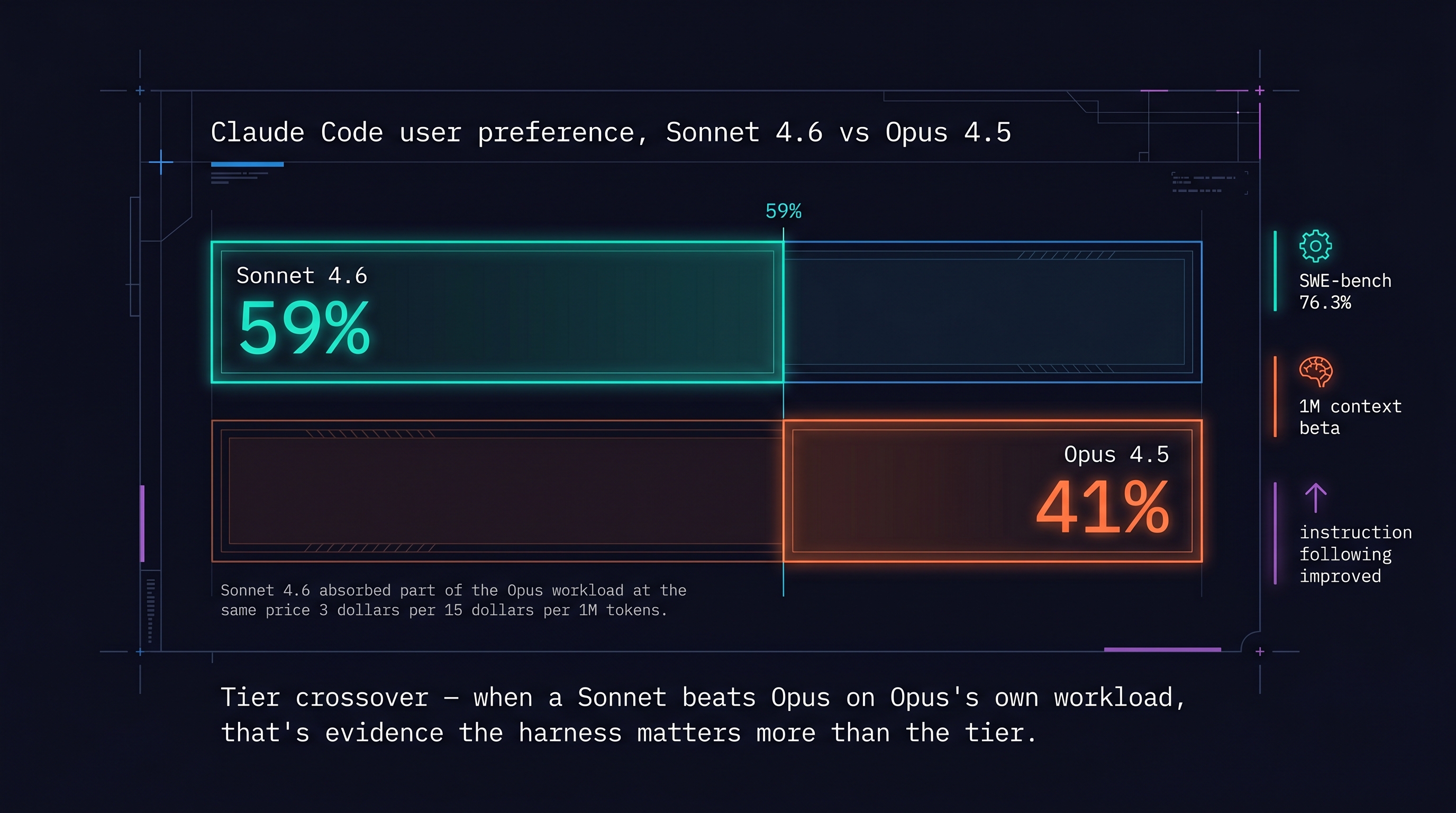

- Frontier models are no longer the primary differentiator. When Sonnet 4.6 was preferred over Opus 4.5 at a 59:41 ratio, it was evidence that most of the difference users perceive comes from the harness, not the model tier.

- Claude vs Codex is an interface comparison, not a model comparison. This chapter's 67 days are the period that forced that choice.

7.2 Claude Sonnet 4.6 — Before / After (2026-02-17)

Before: The landscape was Opus 4.5 + Sonnet 4.5. Claude Code had settled into its five-pattern stack: CLAUDE.md / subagents / hooks / skills / plugins. On the Codex side, AGENTS.md had been adopted by 60,000+ projects and moved under the Linux Foundation's Agentic AI Foundation.

After: The headline wasn't the model; it was the preference ratio. Claude Code users preferred Sonnet 4.6 over Opus 4.5 at 59:41. Sonnet absorbed part of the Opus workload at the same price ($3/$15 per 1M input/output tokens). SWE-bench Verified: 79.6% (10-trial average; 80.2% with prompt modification) [Anthropic, 2026].

User reports: "Reads and modifies context more effectively," "Consolidates common logic instead of duplicating," "Fewer false success claims."

Primary citations:

- Anthropic, "Introducing Claude Sonnet 4.6," 2026-02-17 [Anthropic, 2026]

- Axios, 2026-02-17 [Axios, 2026]

- TechCrunch, 2026-02-17 [TechCrunch, 2026]

Key implication: A Sonnet model absorbing Opus workload is evidence that 80%+ of the difference users see is from how you use the model — the harness — not the model tier.

7.3 Claude Opus 4.7 — Before / After (2026-04-16)

Before: Sonnet 4.6 had become the default, but Opus 4.6 remained strong. "Opus 4.6 + Claude Code" was the standard frontier coder combination. Anthropic had just published "Harnessing Claude's Intelligence," with its three harness patterns. The AAR (9-instance autonomous alignment research over 5 days) had been announced two days before the release.

After: Opus 4.7's key changes:

- CursorBench 70% (up from Opus 4.6's 58%) [Anthropic, 2026]

- xhigh effort + task budgets: external signals telling the model how long to reason

- Sampling parameters (temperature, top_p, top_k) removed: "steer behavior through prompting"

- Same-day GA across Cursor / GitHub Copilot / AWS Bedrock / Vertex AI [Amazon Web Services, 2026] [GitHub, 2026]
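Removing the sampling parameters matters for client code that still sets them. The following is a minimal sketch under stated assumptions: the helper name `strip_sampling_params`, the model name `example-model`, and the payload shape are hypothetical and do not come from any SDK. Whether the real API rejects or silently ignores these fields is not stated in the sources cited here.

```python
# Hypothetical helper: drop sampling knobs from a request payload before
# sending it to a model that no longer accepts them. The payload shape is
# an assumption for illustration, not an actual SDK schema.
REMOVED_SAMPLING_PARAMS = ("temperature", "top_p", "top_k")


def strip_sampling_params(payload: dict) -> tuple[dict, list[str]]:
    """Return a copy of payload without sampling params, plus the names dropped."""
    dropped = [name for name in REMOVED_SAMPLING_PARAMS if name in payload]
    cleaned = {k: v for k, v in payload.items() if k not in REMOVED_SAMPLING_PARAMS}
    return cleaned, dropped


# Illustrative request; "example-model" is a placeholder, not a real model id.
request = {
    "model": "example-model",
    "temperature": 0.2,
    "top_p": 0.9,
    "prompt": "Refactor utils.py",
}
cleaned, dropped = strip_sampling_params(request)
print(cleaned)
print(dropped)
```

Returning the dropped names lets a caller log which settings were removed, so that the behavior they used to control can be moved into the prompt instead.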

Beat 1 — Anthropic racing against itself. On the same day Opus 4.7 launched, CNBC reported that Anthropic had privately acknowledged its unreleased "Mythos" model outperforms Opus 4.7 [CNBC, 2026]. Shipping Opus 4.7 knowing Mythos is already ahead is a precise signal: the cadence of release is now set by competitive pressure, not by "the model is ready." Anthropic was racing against its own roadmap to keep frontier tooling in developers' hands. Simultaneously, they issued an apology for the Claude "dumbed down" reports — instruction following strengthened, fewer subagents spawned by default, fewer emoji.

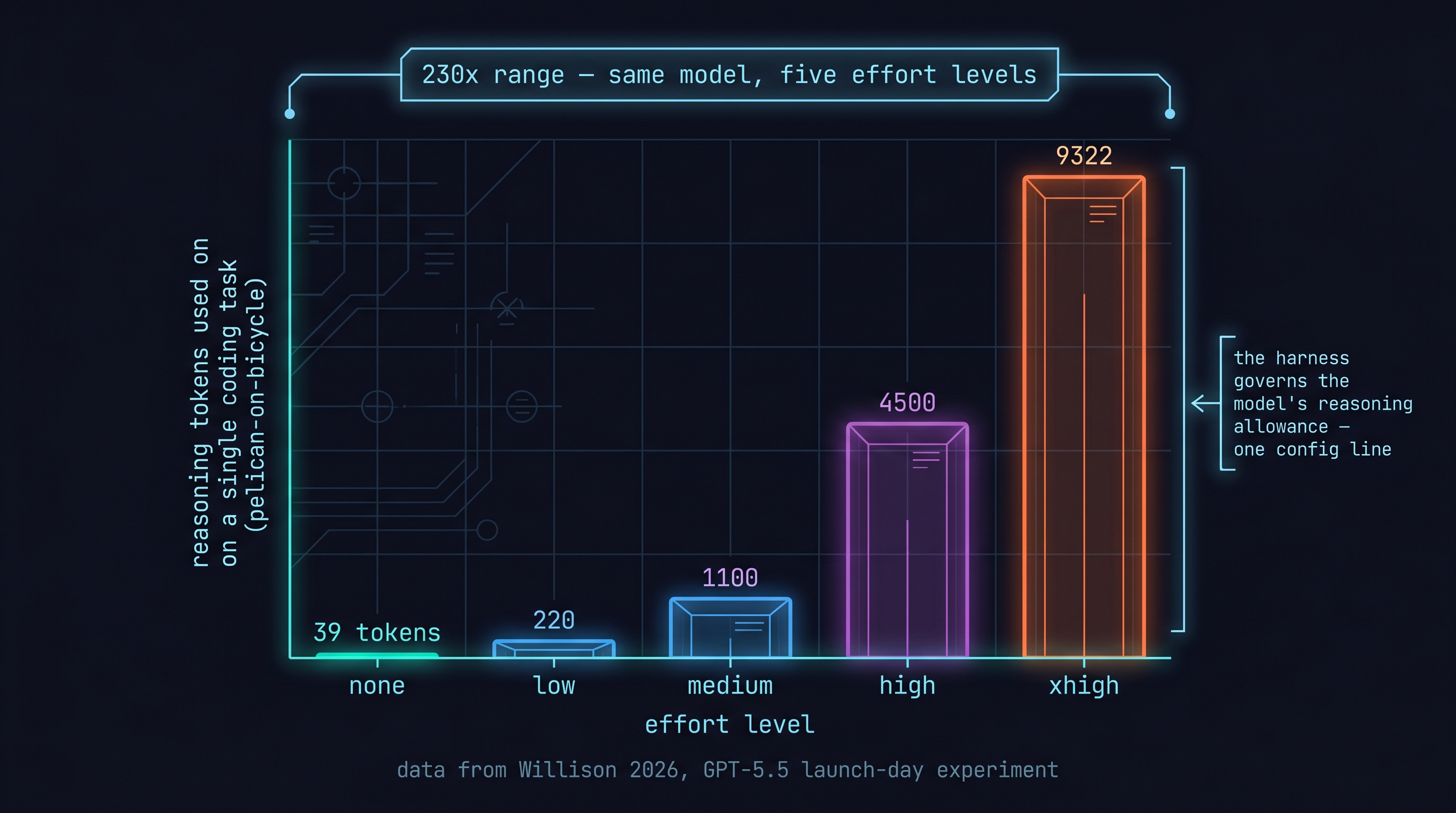

In Claude Code: /ultrareview added, default effort raised to xhigh. The harness was now directly governing the model's "reasoning allowance."

The Ambiguity Tax: April 16–22

The "fewer subagents by default" change — intended as a fix — introduced a fracture in the user base. Opus 4.6 had rescued vague prompts: if your instruction was ambiguous, the model would still attempt something useful. Opus 4.7 with instruction-following hardened stopped rescuing vague prompts and instead surfaced the ambiguity back to the user [KeepMyPrompts, 2026].

Developers who had built their workflows on Opus 4.6's lenient interpretation now had a week to either rewrite their prompts or absorb the regression. Most couldn't do both. The community named this the "ambiguity tax": the cost of explicit instructions you hadn't previously had to write [MerchMind AI, 2026].

The reaction split sharply: developers with well-structured AGENTS.md and explicit task descriptions saw capability gains. Developers with prompt-as-memory workflows saw regression [Xlork Blog, 2026]. X and Reddit threads captured both sides simultaneously [Botmonster Tech, 2026].

This 7-day window — April 16 to April 22 — is the load-bearing context for what happened next. Developers who had been nursing Opus 4.7 frustration were not a neutral audience when GPT-5.5 arrived on April 23. They were ready-made migration candidates.

Beat 1b — The launch did not land cleanly. The community reaction was not merely anecdotal. The backlash post on X reached 14,000 likes and the corresponding Reddit thread hit 2,300 upvotes [MerchMind AI, 2026; Botmonster Tech, 2026] — numbers large enough to leave an archive footprint. These are concrete signals, not forum noise: a significant slice of Claude Code's active user base registered public displeasure within 48 hours of launch. The developer who broke their prompt library on April 16 and hadn't fixed it by April 22 is the exact person who clicked through the GPT-5.5 announcement on April 23. (Chapter 8 opens from this person's perspective.)

Primary citations:

- Anthropic, "Introducing Claude Opus 4.7," 2026-04-16 [Anthropic, 2026]

- CNBC, "Anthropic Opus 4.7, less risky than Mythos," 2026-04-16 [CNBC, 2026]

- GitHub Copilot Changelog, 2026-04-16 [GitHub, 2026]

- keepmyprompts, "Claude Opus 4.7 Prompting Guide: What Changed," 2026. [KeepMyPrompts, 2026]

- MerchMind, "Claude Opus 4.7 Backlash Explained," 2026. [MerchMind AI, 2026]

- Xlork, "Claude Opus 4.7: What's New and Why Developers Are Frustrated," 2026. [Xlork Blog, 2026]

- BotMonster, "Claude Opus 4.7: What X and Reddit Users Are Saying," 2026. [Botmonster Tech, 2026]

7.4 GPT-5.5 — Before / After (2026-04-23)

Before: GPT-5.4 was the ChatGPT default; coding-specific work used GPT-5.2-Codex. Codex CLI was rapidly standardizing around AGENTS.md + ~/.codex/config.toml + TOML skills and subagents. Anthropic had released Opus 4.7 one week earlier, and 7 days of ambiguity-tax discourse had left a meaningful slice of Claude Code's user base frustrated and without a working prompt library. When GPT-5.5 arrived, it didn't land in a neutral market. It landed in a community already primed to reconsider [Danushka, 2026].

Beat 2 — Codex as primary delivery channel. GPT-5.5's most important characteristic wasn't a benchmark:

- The API was closed on launch day; the model was accessible only through a Codex subscription [Willison, 2026]. Willison's routing through the Codex plugin wasn't a workaround; it was OpenAI's explicit statement that the harness is the primary delivery channel. When the frontier model ships into the harness before the API, the harness has stopped being a wrapper around the model and has become the product.

- Context window: 1,050,000 tokens; maximum output: 128k tokens; knowledge cutoff: 2025-12-01

- Five effort levels (none/low/medium/high/xhigh)

- Pricing: $5/$30 per 1M input/output tokens, exactly 2x GPT-5.4 (a deliberate confidence signal)

- NVIDIA GB200 NVL72 infrastructure: 35x lower token cost, 50x higher throughput per MW [NVIDIA, 2026]

Willison's xhigh experiment: pelican-on-bicycle coding task — 9,322 reasoning tokens at xhigh, 39 tokens at none [Willison, 2026]. A ~240x difference (9,322 / 39 ≈ 239).
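The ratio, and a rough sense of what it costs, can be checked in a few lines. This is a sketch under one loud assumption: that reasoning tokens are billed at the $30 per 1M output rate listed in the pricing bullet. The sources cited here do not state that billing rule, and the function name `reasoning_cost_usd` is illustrative.

```python
# ASSUMPTION: reasoning tokens are billed at the output-token rate.
OUTPUT_PRICE_PER_MILLION = 30.00  # USD per 1M output tokens, from the pricing bullet


def reasoning_cost_usd(tokens: int, price_per_million: float = OUTPUT_PRICE_PER_MILLION) -> float:
    """Cost in USD of a given number of tokens at a per-million price."""
    return tokens * price_per_million / 1_000_000


xhigh_tokens, none_tokens = 9_322, 39  # token counts reported by Willison
ratio = xhigh_tokens / none_tokens

print(f"ratio: {ratio:.1f}x")
print(f"xhigh: ${reasoning_cost_usd(xhigh_tokens):.4f}")
print(f"none:  ${reasoning_cost_usd(none_tokens):.4f}")
```

Under that assumption, a single xhigh run of this task costs well under a dollar in reasoning tokens. The ratio matters more than the absolute figure, because it scales with every call an agent loop makes.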

NVIDIA internal: "Debugging cycles that stretched days are closing in hours; experimentation that required weeks is turning into overnight progress" [NVIDIA, 2026].

Primary citations:

- OpenAI, "Introducing GPT-5.5," 2026-04-23 [OpenAI, 2026]

- Willison, Simon, 2026-04-23 [Willison, 2026]

- NVIDIA Blog, 2026-04-23 [NVIDIA, 2026]

- TechCrunch, 2026-04-23 [TechCrunch, 2026]

7.5 Two Conclusions from 67 Days

Conclusion 1: Model tier is no longer the primary differentiator.

February's preference data (Sonnet absorbing Opus workloads), April's instruction-following changes (tone shift, not capability jump), and April 23's Codex-first launch all point the same direction: the harness determines most of the outcome, even when models are equal.

Conclusion 2: Claude vs Codex is an interface comparison.

Both tools upgraded their models in 67 days. The more important change was interface differentiation — AGENTS.md standard + ~/.codex/config.toml + TOML skills vs CLAUDE.md hierarchy + auto-memory + agent teams. The two April frontier releases turned this interface choice into something you have to decide now.
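One way to see which side of this interface choice a repository has already taken is to look for the instruction files named above. The following is a minimal sketch, not an official detection rule: the function name `detect_harness_conventions` and the labels `codex` and `claude-code` are hypothetical, and it checks only for AGENTS.md and CLAUDE.md at the repository root.

```python
import tempfile
from pathlib import Path

# File names come from the chapter; mapping them to a label is an
# illustrative assumption, not behavior of either tool.
CONVENTION_FILES = {"AGENTS.md": "codex", "CLAUDE.md": "claude-code"}


def detect_harness_conventions(repo_root: str) -> list[str]:
    """Return sorted labels for the instruction files found at repo_root."""
    root = Path(repo_root)
    return sorted(
        label for name, label in CONVENTION_FILES.items() if (root / name).is_file()
    )


# Demo on a throwaway directory containing only AGENTS.md.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "AGENTS.md").write_text("# Agent instructions\n")
    found = detect_harness_conventions(tmp)

print(found)
```

A repository can carry both files, in which case both labels are returned. The sketch deliberately ignores nested files, ~/.codex/config.toml, and any memory or skills directories.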

References

- Anthropic, "Introducing Claude Sonnet 4.6," 2026-02-17. [Anthropic, 2026]

- Anthropic, "Introducing Claude Opus 4.7," 2026-04-16. [Anthropic, 2026]

- OpenAI, "Introducing GPT-5.5," 2026-04-23. [OpenAI, 2026]

- Axios, "Anthropic Claude Sonnet 4.6," 2026-02-17. [Axios, 2026]

- TechCrunch, "Anthropic releases Sonnet 4.6," 2026-02-17. [TechCrunch, 2026]

- CNBC, "Anthropic Opus 4.7, less risky than Mythos," 2026-04-16. [CNBC, 2026]

- GitHub Copilot Changelog, 2026-04-16. [GitHub, 2026]

- AWS Bedrock, "Claude Opus 4.7," 2026-04-16. [Amazon Web Services, 2026]

- NVIDIA Blog, "OpenAI Codex GPT-5.5," 2026-04-23. [NVIDIA, 2026]

- Willison, Simon, "GPT-5.5," simonwillison.net, 2026-04-23. [Willison, 2026]

- TechCrunch, "OpenAI GPT-5.5," 2026-04-23. [TechCrunch, 2026]

- keepmyprompts, "Claude Opus 4.7 Prompting Guide: What Changed," 2026. [KeepMyPrompts, 2026]

- MerchMind, "Claude Opus 4.7 Backlash Explained," 2026. [MerchMind AI, 2026]

- Xlork, "Why Developers Are Frustrated with Opus 4.7," 2026. [Xlork Blog, 2026]

- BotMonster, "Claude Opus 4.7: What X and Reddit Are Saying," 2026. [Botmonster Tech, 2026]

- Danushka, "OpenAI Just Released GPT-5.5 — This Is the Move Claude Did Not Want to See," 2026. [Danushka, 2026]