Chapter 6: Verifiable Vibe Coding — Splitting Work, Verification, and Review across Agents

6.1 The Bug That Proves the Thesis

Before writing a single line of multi-agent setup, consider Codex GitHub issue #11354 [contributors, 2026]. A user set subagents = false in their config and then called /review. The /review command re-enabled subagents anyway, overriding the explicit setting. The user's stated preference did not govern the tool's behavior; the config file was not authoritative.

This is the strongest possible argument for why verifiable vibe coding matters: you cannot always trust what you configured, let alone what a single agent produced and then reviewed itself. When the tool can silently override your explicit settings, independent verification isn't optional — it's the only reliable check.

That's the principle. Here's what it looks like in practice. Mejba Ahmed ran an experiment: Codex as a subagent inside Claude Code [Ahmed, 2026]. The result? "The work separated." Claude Code handled design; Codex handled implementation; Claude Code reviewed the output. No single agent was grading its own work. Blind spots shrank.

This is the chapter's core idea: verifiable vibe coding means splitting work, verification, and review across different agents so no agent grades its own output.

"Vibe coding" — LLM-driven natural-language development [Korean Dev Blog, 2026] — is inherently unverifiable when a single agent does everything. Multi-agent separation makes it verifiable. The config-override bug shows why even the harness itself needs an external check.

6.2 Two Mechanisms

Claude Code: Agent Teams

Anthropic's Agent Teams [Anthropic, 2026] provides:

- Shared task list: all agents see the same tasks; each claims and executes leaf tasks

- File locking: agents acquire locks before editing files — prevents conflicts

- SendMessage: direct peer-to-peer agent messaging

- Event hooks: TeammateIdle, TaskCreated, and TaskCompleted trigger agent reactions
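To make the shared task list and file locking concrete, here is a toy in-memory model. It is a sketch under stated assumptions, not Anthropic's implementation or API: `Task`, `TaskBoard`, `claim`, and `complete` are hypothetical names, and the file paths are made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    name: str
    files: set[str]
    owner: Optional[str] = None


@dataclass
class TaskBoard:
    """Toy shared task list with per-file locks (hypothetical, for illustration)."""
    tasks: dict[str, Task] = field(default_factory=dict)
    locks: dict[str, str] = field(default_factory=dict)  # file path -> agent name

    def add(self, task: Task) -> None:
        self.tasks[task.name] = task

    def claim(self, agent: str, task_name: str) -> bool:
        """Claim a task only if it is unowned and none of its files are locked."""
        task = self.tasks[task_name]
        if task.owner is not None:
            return False
        if any(path in self.locks for path in task.files):
            return False
        task.owner = agent
        for path in task.files:
            self.locks[path] = agent
        return True

    def complete(self, agent: str, task_name: str) -> None:
        """Release the file locks held for a finished task."""
        task = self.tasks[task_name]
        if task.owner != agent:
            raise PermissionError(f"{agent} does not own {task_name}")
        for path in task.files:
            self.locks.pop(path, None)


board = TaskBoard()
board.add(Task("implement-auth-endpoint", {"src/auth.ts"}))
board.add(Task("refactor-auth-helpers", {"src/auth.ts", "src/util.ts"}))

first = board.claim("agent-a", "implement-auth-endpoint")  # succeeds
second = board.claim("agent-b", "refactor-auth-helpers")   # refused: src/auth.ts is locked
board.complete("agent-a", "implement-auth-endpoint")
third = board.claim("agent-b", "refactor-auth-helpers")    # succeeds after the release
```

The second claim is refused because both tasks touch the same file, which is the conflict that locking is meant to prevent.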

Tested conclusion: "3-5 teammates / 5-6 tasks per teammate" is optimal [Anthropic, 2026]. "Three focused teammates often outperform five scattered ones."

Codex: TOML Subagents

In Codex, define specialist agents as TOML files in .codex/agents/; an orchestrator agent calls them [OpenAI, 2026]. This is simpler than Claude Code's runtime collaboration, but it is sufficient for separating work.

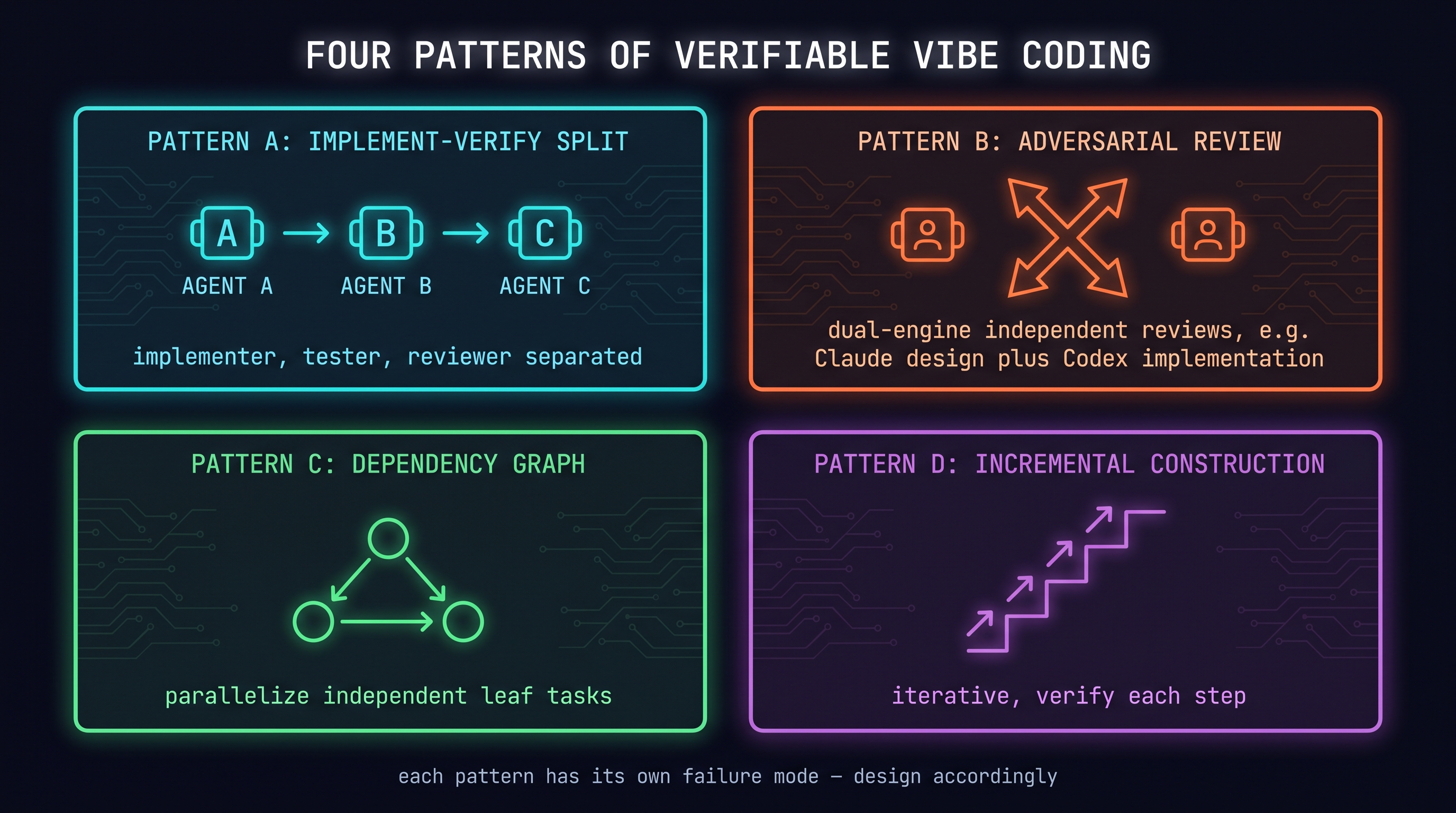

6.3 Four Verifiable Vibe Coding Patterns

Each pattern: (a) when to use it, (b) Claude Code setup, (c) Codex setup, (d) failure mode.

Pattern A: Implement–Verify Split

One agent implements, another writes tests, a third reviews.

When: Implementing new features.

Claude Code setup:

# TaskCreate dependency graph

Task 1: implement-auth-endpoint

Task 2: write-auth-tests [blocked_by: implement-auth-endpoint]

Task 3: review-auth [blocked_by: write-auth-tests]

Codex setup:

# .codex/agents/implementer.toml

name = "implementer"

developer_instructions = "Implement the feature. Write code only. Do not write tests."

# .codex/agents/tester.toml

name = "tester"

developer_instructions = "Write Jest tests for the implemented feature. Use mocks. 80% coverage."

# .codex/agents/reviewer.toml

name = "reviewer"

developer_instructions = "Review code and tests. Check for security issues and edge cases."

Failure mode: If the implementer and tester share the same assumptions, tests become meaningless. Instruct the tester to "write tests from the spec only, without reading the implementation file."

Pattern B: Adversarial Review (Dual-Engine)

Two different models/agents independently review the same code.

When: Critical changes, security-sensitive code.

Claude Code + Codex combination: As in Mejba's experiment [Ahmed, 2026], Claude Code does design and review while Codex does implementation. Because the two tools run on different models, their blind spots are less likely to overlap.

Failure mode: If both agents are from similar training distributions, they make the same mistakes. True independence requires different models.
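One rough way to see whether two reviewers are actually independent is to compare their findings. The sketch below assumes each review has already been reduced to a set of structured findings; the `Finding` record, `compare_reviews`, and the sample data are hypothetical and not the output format of either tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    kind: str  # e.g. "sql-injection", "missing-null-check"


def compare_reviews(review_a: set[Finding], review_b: set[Finding]) -> dict[str, set[Finding]]:
    """Split two reviews into findings both raised and findings only one raised."""
    return {
        "both": review_a & review_b,
        "only_a": review_a - review_b,
        "only_b": review_b - review_a,
    }


# Hypothetical findings from two independent reviewers.
reviewer_a = {
    Finding("src/pay.ts", 42, "sql-injection"),
    Finding("src/pay.ts", 88, "missing-null-check"),
}
reviewer_b = {
    Finding("src/pay.ts", 42, "sql-injection"),
    Finding("src/refund.ts", 17, "race-condition"),
}

result = compare_reviews(reviewer_a, reviewer_b)
print(len(result["both"]), len(result["only_a"]), len(result["only_b"]))  # 1 1 1
```

If "only_a" and "only_b" stay empty across many changes, the second reviewer is adding little, which is one signal that the two agents share the same blind spots.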

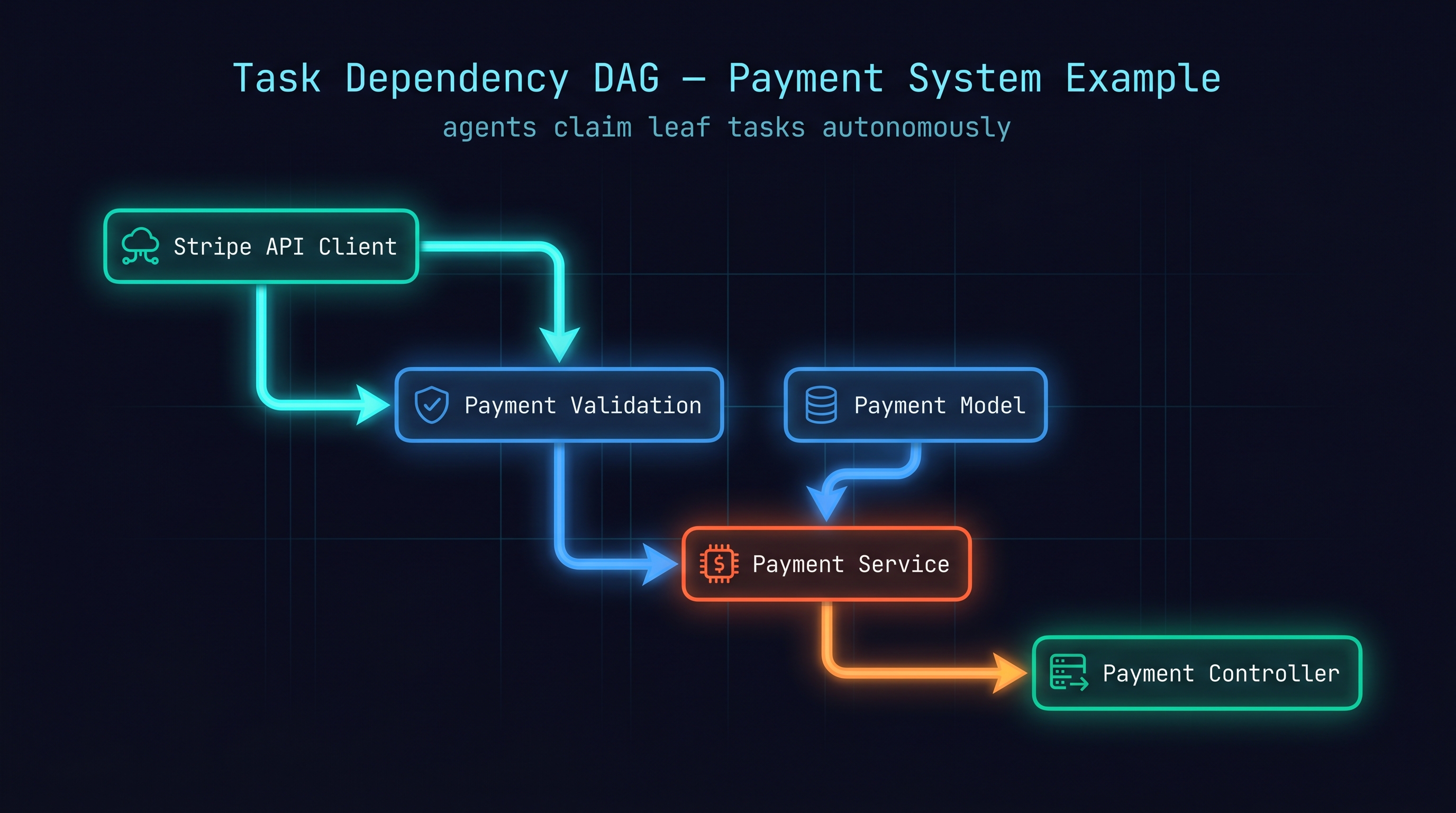

Pattern C: Dependency Graph Execution

Decompose a complex feature into a DAG and execute branches in parallel.

When: Multiple independent modules to develop simultaneously.

Here's a "payment system implementation" DAG:

[payment model] → [payment service] → [payment controller]
                                    ↗
[payment validation] ───────────────
        ↑
[Stripe API client]

In Claude Code, declare this with TaskCreate + addBlockedBy [Anthropic, 2026]. Agents autonomously claim leaf tasks (those with no pending dependencies) and execute them.

In Codex, an orchestrator agent manages the sequence and assigns tasks to subagents.

Failure mode: Over-decomposing creates orchestration overhead that exceeds parallelism gains. Principle: only parallelize tasks with no dependencies on each other.
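The "claim tasks with no pending dependencies" rule can be sketched with Python's standard-library `graphlib`. This is an illustration of the scheduling idea, not of TaskCreate or of a Codex orchestrator. The edge list is an assumption about how to read the payment diagram: here the Stripe API client feeds payment validation, and payment validation feeds the payment controller alongside the payment service.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks it is blocked by.
# Assumption: this is one reading of the payment-system diagram.
blocked_by = {
    "payment service": {"payment model"},
    "payment controller": {"payment service", "payment validation"},
    "payment validation": {"Stripe API client"},
}


def parallel_waves(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; every task in a wave has all of its
    dependencies finished, so the wave could run in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave as finished
    return waves


waves = parallel_waves(blocked_by)
for number, wave in enumerate(waves, start=1):
    print(number, wave)
# 1 ['Stripe API client', 'payment model']
# 2 ['payment service', 'payment validation']
# 3 ['payment controller']
```

Under this reading only two of the three waves offer any parallelism, which illustrates the failure mode: splitting tasks further adds coordination cost without adding more independent work.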

Pattern D: Incremental Construction

Build in small verified steps rather than one large implementation.

When: Complex systems, high-risk changes.

The most important lesson from a Korean developer's multi-agent retrospective [brunch, 2026]: "Iterative beats upfront." Having an agent plan the full architecture first and then implement it all at once is less safe than building a minimal skeleton, then feature 1, then feature 2, and verifying at each step.
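The skeleton-then-features loop can be sketched as a sequence of (apply, verify) steps that halts at the first failed check. Everything here is hypothetical: `build_incrementally`, the `state` dictionary, and the lambdas stand in for real agent work and real test runs.

```python
from typing import Callable, Optional

# A step is (name, apply, verify): apply makes the change, verify checks it.
Step = tuple[str, Callable[[], None], Callable[[], bool]]


def build_incrementally(steps: list[Step]) -> tuple[list[str], Optional[str]]:
    """Apply steps in order, verifying after each one.
    Returns (names of verified steps, name of the first failing step or None)."""
    completed = []
    for name, apply, verify in steps:
        apply()
        if not verify():
            return completed, name  # stop before building on a broken step
        completed.append(name)
    return completed, None


# Hypothetical stand-in for a codebase under construction.
state = {"routes": []}

steps: list[Step] = [
    ("minimal skeleton", lambda: state.update(app=True), lambda: state.get("app") is True),
    ("feature 1", lambda: state["routes"].append("/login"), lambda: "/login" in state["routes"]),
    # Deliberately failing check: the step adds /logout but the check expects /refund.
    ("feature 2", lambda: state["routes"].append("/logout"), lambda: "/refund" in state["routes"]),
]

completed, failed = build_incrementally(steps)
print(completed, failed)  # ['minimal skeleton', 'feature 1'] feature 2
```

Because the loop stops at the first failure, the problem is localized to one small step instead of surfacing after the whole architecture has been built.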

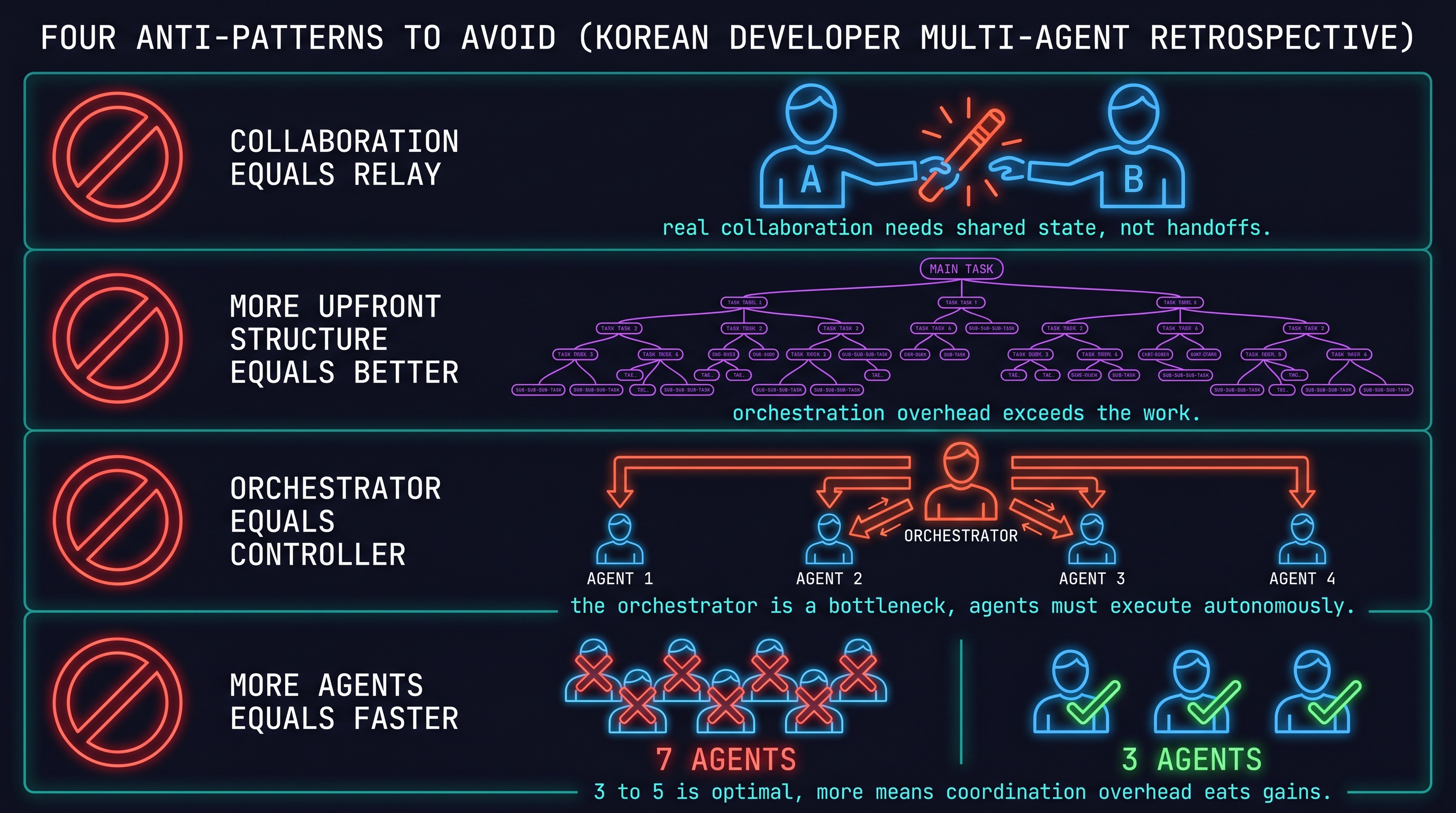

6.4 Four Anti-Patterns (What to Avoid)

From the Korean developer multi-agent retrospective [brunch, 2026]:

- "Collaboration = relay": Agent A completes and hands off to Agent B. This isn't collaboration — agents need to see shared state and work simultaneously.

- "More upfront structure is better": Over-decomposing tasks and declaring complex dependencies creates orchestration overhead that dominates the work itself.

- "Orchestrator = controller": When the orchestrator tries to control everything, it becomes a bottleneck. The orchestrator declares dependencies; teammates execute autonomously.

- "More agents = faster results": Anthropic's experiments found 3-5 teammates optimal [Anthropic, 2026]. Beyond that, coordination overhead eats the gains.

6.5 What MorphLLM's Benchmark Reveals

MorphLLM showed Claude Code and Codex outputs to blind reviewers who didn't know the source [MorphLLM, 2026]: reviewers preferred Claude Code's output 67:25. But on Terminal-Bench, Codex won.

This is exactly why "verifiable" vibe coding matters: benchmark scores diverge from actual preference. An agent's self-evaluation differs from independent verification. Multi-agent separation is the mechanism for closing that gap.

References

- Anthropic, "Agent Teams," 2026. [Anthropic, 2026]

- Anthropic, "Subagents," 2026. [Anthropic, 2026]

- Mejba Ahmed, "I ran Codex inside Claude Code," 2026. [Ahmed, 2026]

- B327Roy, "Multi-agent retrospective (Korean)," 2026. [brunch, 2026]

- MorphLLM, "Codex vs Claude Code Benchmark," 2026. [MorphLLM, 2026]

- OpenAI, "Codex subagents," 2026. [OpenAI, 2026]

- Korean Developer, "바이브 코딩 플레이리스트" (Vibe Coding Playlist), 2026. [Korean Dev Blog, 2026]

- Intuition, "Codex as superapp — multi-agent guide," 2026. [IntuitionLabs, 2026]

- Jagtap, "Codex AGENTS.md auto-optimization," 2026. [Jagtap, 2026]

- GitHub, "Codex subagents issue #11354 — /review re-enables subagents despite config," 2026. [contributors, 2026]