Chapter 5: Building a Harness in Codex — AGENTS.md, Config, Directory Structure

5.1 OpenAI's Own AGENTS.md

The best AGENTS.md example is OpenAI's Codex repository itself [OpenAI, 2026]. What does it actually say?

- Rust crate names use the codex- prefix (e.g., codex-core, codex-cli)

- Modules stay under 500 lines of code (split at 800)

- Clippy: "always collapse if statements"

- Method references preferred over closures

This is what a production AGENTS.md looks like: not generic coding guidelines, but specific, project-calibrated rules about what tends to go wrong in this codebase.

5.2 Three Things to Build

Building a harness in Codex means creating three things:

- A top-level AGENTS.md: tool-agnostic rules for the whole project

- ~/.codex/config.toml: Codex-specific configuration

- .codex/agents/: your first subagent definition (a .toml file)

Let's build them in order.

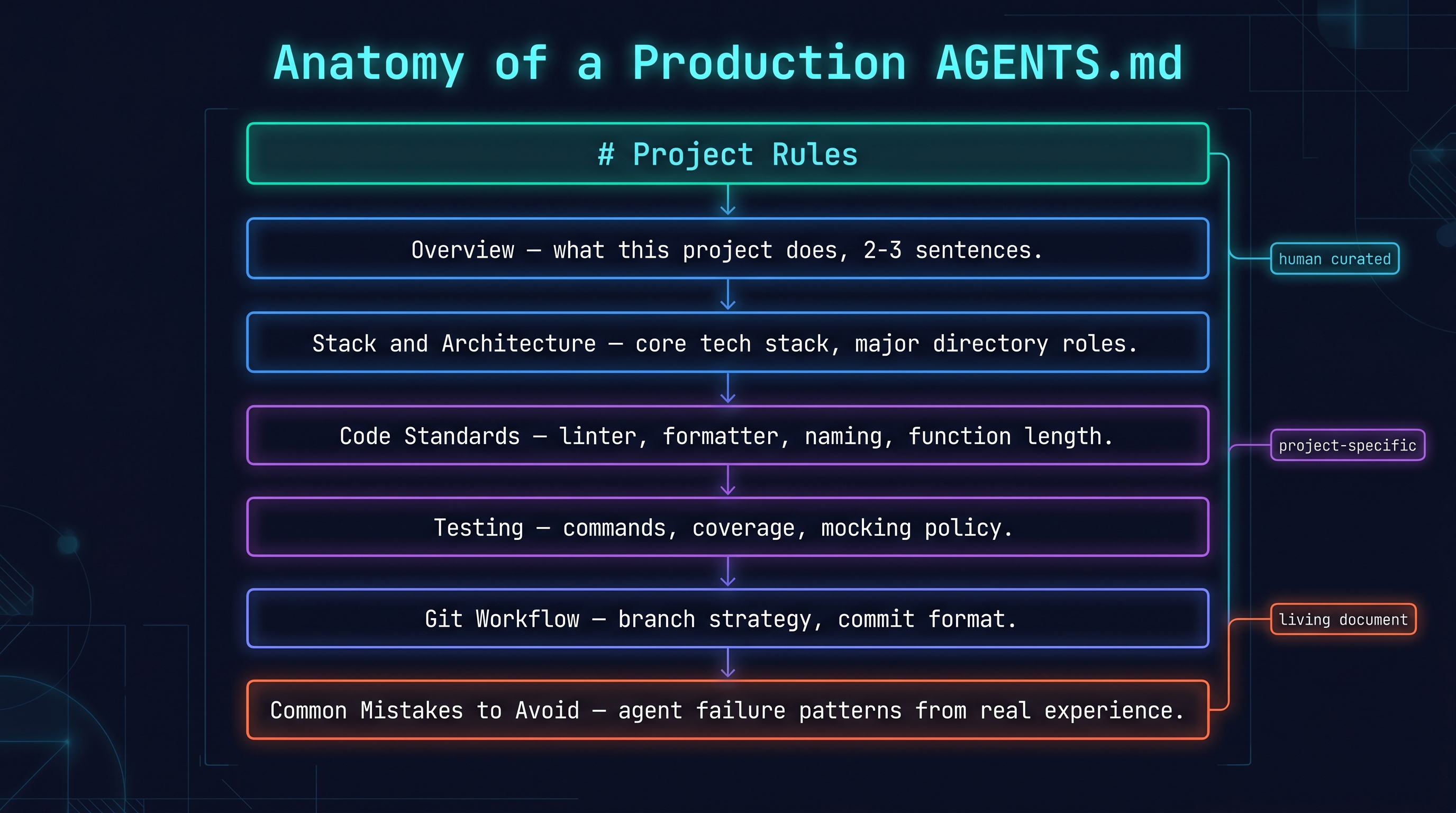

5.3 AGENTS.md — How to Write It Well

The AGENTS.md philosophy: write what a new agent needs to know when deployed to this codebase for the first time. Not one-time context — persistent project rules.

The AGENTS.md standard has been adopted by 60,000+ open-source projects [Foundation, 2026] as a cross-vendor format managed by the Linux Foundation's Agentic AI Foundation.

Structure of a good AGENTS.md [Code, 2026]:

# Project Rules

## Overview

<2-3 sentences on what this project does>

## Stack & Architecture

<Core tech stack, major directory roles>

## Code Standards

<Linter, formatter, naming conventions, function length>

## Testing

<Test commands, coverage expectations, mocking policy>

## Git Workflow

<Branch strategy, commit message format>

## Common Mistakes to Avoid

<Things agents get wrong — based on real experience>
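
The template above is regular enough to check mechanically. What follows is a minimal sketch, not part of any AGENTS.md tooling: the helper name findMissingSections is hypothetical, and it assumes the six section headings are spelled exactly as in the template.

```typescript
// Section headings copied from the template above.
const REQUIRED_SECTIONS: string[] = [
  "Overview",
  "Stack & Architecture",
  "Code Standards",
  "Testing",
  "Git Workflow",
  "Common Mistakes to Avoid",
];

// Hypothetical helper: list the template sections that a draft AGENTS.md lacks.
function findMissingSections(agentsMd: string): string[] {
  const present: string[] = [];
  for (const line of agentsMd.split("\n")) {
    // Only second-level headings ("## Title") count as sections.
    const match = /^##\s+(.+?)\s*$/.exec(line);
    if (match) present.push(match[1]);
  }
  return REQUIRED_SECTIONS.filter((name) => !present.includes(name));
}
```

The match is deliberately strict. A draft that uses "## Stack" instead of "## Stack & Architecture", or that omits Testing and Git Workflow, is reported as incomplete, which is a useful prompt to decide whether the omission was intentional.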

Concrete example (Node.js / TypeScript project):

# Project Rules

## Overview

Express.js REST API for user management. PostgreSQL + TypeORM.

Main entry: src/app.ts. Test: npm test (Jest).

## Stack

- Node.js 20+, TypeScript 5.4 strict

- Express.js 4.18, TypeORM 0.3

- PostgreSQL 16

- Jest 29 for testing

## Code Standards

- ESLint + Prettier enforced (run: npm run lint)

- Functions < 40 lines

- No any type unless absolutely necessary — use unknown + type guards

- Repository pattern for database access (src/repositories/)

## Common Mistakes to Avoid

- Don't import directly from TypeORM in controllers — use repositories

- Don't use req.body directly — validate with Zod schemas first

- Don't catch and swallow errors — let them propagate to error middleware
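
To make three of these rules concrete (no any, validate req.body before use, let errors propagate), here is a minimal sketch. It swaps the Zod schema for a hand-written type guard so that it runs without dependencies. The CreateUserInput shape and both function names are assumptions for illustration, not code from a real project.

```typescript
// Shape assumed for illustration; the project's real schema is not shown in the text.
interface CreateUserInput {
  email: string;
  name: string;
}

// Type guard: narrows an unknown value (for example req.body) to CreateUserInput.
function isCreateUserInput(value: unknown): value is CreateUserInput {
  if (typeof value !== "object" || value === null) return false;
  const candidate = value as Record<string, unknown>;
  const email = candidate["email"];
  const name = candidate["name"];
  return (
    typeof email === "string" &&
    email.indexOf("@") > 0 &&
    typeof name === "string" &&
    name.trim().length > 0
  );
}

// Throws on bad input instead of returning a default, so the error can
// propagate to the error middleware rather than being swallowed.
function parseCreateUserInput(body: unknown): CreateUserInput {
  if (!isCreateUserInput(body)) {
    throw new Error("Invalid create-user payload");
  }
  return { email: body.email, name: body.name.trim() };
}
```

A controller would call parseCreateUserInput(req.body) once at the top and work only with the typed result afterwards. In the project described above, a Zod schema would play the role of the type guard.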

The contested question: should a human write it, or can you automate it?

Addy Osmani's position [Osmani, 2026]: "Human-curated AGENTS.md only. AI-generated ones fail because they don't reflect actual codebase pathologies." The things that truly need to be avoided are things only the developer knows from experience.

The counter-argument from Jagtap [Jagtap, 2026]: GEPA-style feedback loops — extracting repeated failure patterns from agent run logs and auto-appending them to AGENTS.md — outperform human curation by learning from actual failures without human bias.

Practical recommendation: humans write the first draft; automated feedback augments it as the project matures.
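
The automated half of that recommendation can be sketched in a few lines. This is a rough illustration of the feedback-loop idea, not Jagtap's implementation or GEPA itself: the FailureRecord shape, the proposeRules name, and the substring check against the existing file are all assumptions.

```typescript
// Hypothetical log record; real agent run logs will have a different shape.
interface FailureRecord {
  runId: string;   // identifier of the agent run that failed
  pattern: string; // normalized one-line description of the failure
}

// Propose new "Common Mistakes" bullets for patterns that failed in at least
// `minRuns` distinct runs and are not already mentioned in AGENTS.md.
function proposeRules(
  failures: FailureRecord[],
  agentsMd: string,
  minRuns: number
): string[] {
  // Collect the distinct runs in which each pattern appeared.
  const runsByPattern = new Map<string, Set<string>>();
  for (const failure of failures) {
    const runs = runsByPattern.get(failure.pattern) ?? new Set<string>();
    runs.add(failure.runId);
    runsByPattern.set(failure.pattern, runs);
  }

  const proposals: string[] = [];
  const existing = agentsMd.toLowerCase();
  runsByPattern.forEach((runs, pattern) => {
    const repeated = runs.size >= minRuns;
    // Naive duplicate check: case-insensitive substring match.
    const alreadyCovered = existing.includes(pattern.toLowerCase());
    if (repeated && !alreadyCovered) proposals.push("- " + pattern);
  });
  return proposals;
}
```

Returning proposals rather than writing to the file keeps a human in the loop: the output can go into a pull request against AGENTS.md, which fits the "human first draft, automated augmentation" split.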

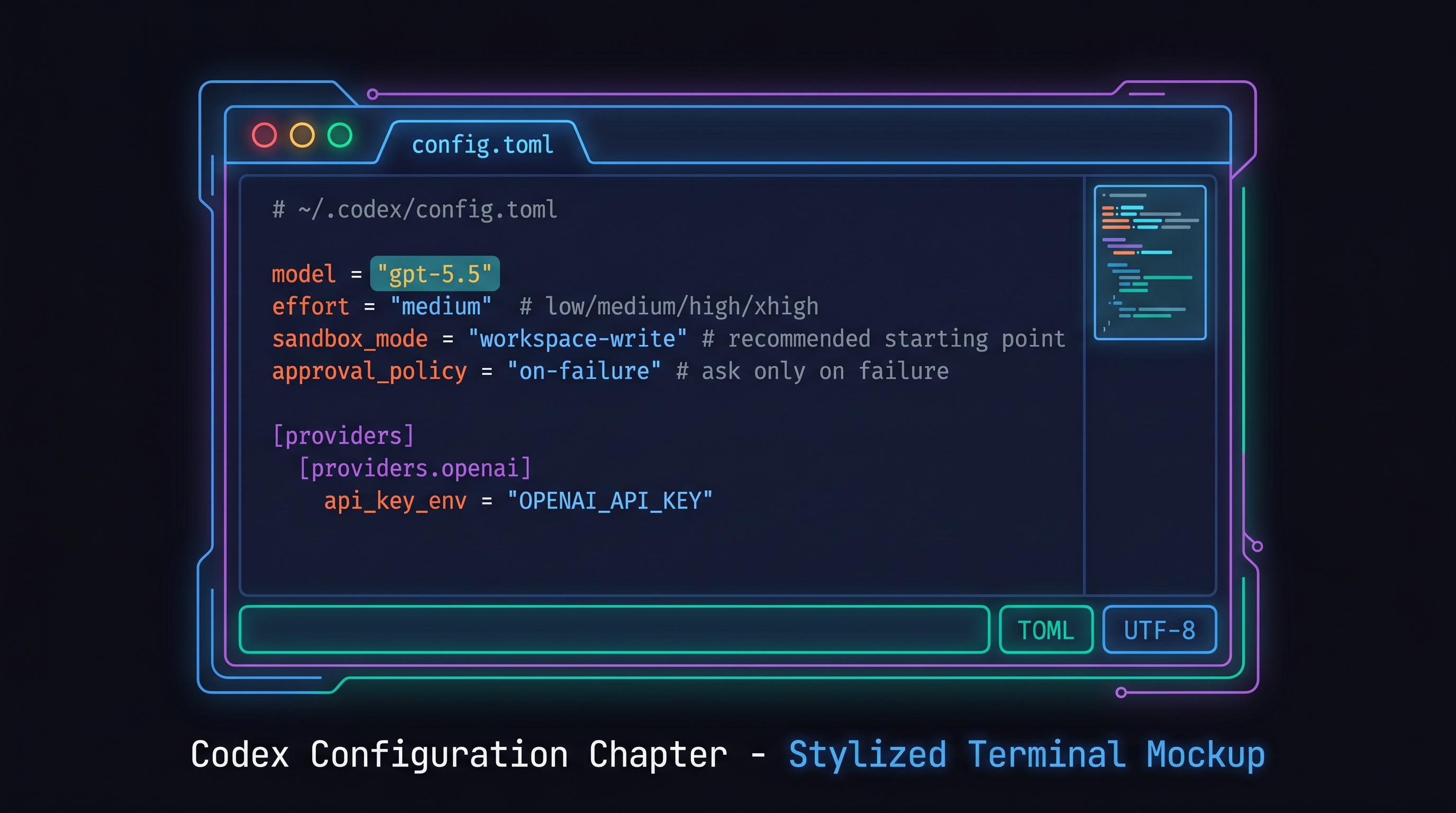

5.4 ~/.codex/config.toml — Production Configuration

# ~/.codex/config.toml

model = "gpt-5.5"

model_reasoning_effort = "medium" # minimal / low / medium / high / xhigh

sandbox_mode = "workspace-write" # recommended start (read-only / workspace-write / danger-full-access)

approval_policy = "on-request" # ask on request (untrusted / on-request / never; on-failure is deprecated)

[providers]

[providers.openai]

api_key_env = "OPENAI_API_KEY" # read from environment variable

approval_policy options [OpenAI, 2026]:

- untrusted: prompt for every untrusted command (most conservative)

- on-request: prompt when the model asks for approval (recommended default for interactive runs; replaces the deprecated on-failure)

- never: auto-approve (suitable for CI/CD or non-interactive runs)

For tasks touching production code, use untrusted. For personal projects and test-writing, on-request works well.

One honest caveat: config files are not always authoritative. Codex GitHub issue #11354 [contributors, 2026] documents a case where setting subagents = false in config did not prevent the /review command from re-enabling subagents; the config was overridden silently. This doesn't mean config is useless. It means config files set your defaults, and specific commands can override them. Always test that your config actually governs the behavior you expect, especially for approval_policy settings that are meant as safety gates.
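
One lightweight way to act on that advice is to write down the settings you rely on and compare them with what you actually observe in a session. The sketch below shows only the comparison step. How the observed values are collected is left open, and the Settings type and findConfigDrift name are hypothetical.

```typescript
// Flat key/value view of the settings that act as safety gates.
type Settings = { [key: string]: string | boolean };

// Hypothetical helper: report every expected setting whose observed value differs.
function findConfigDrift(expected: Settings, observed: Settings): string[] {
  const drift: string[] = [];
  for (const key of Object.keys(expected)) {
    const actual = key in observed ? String(observed[key]) : "(unset)";
    if (actual !== String(expected[key])) {
      drift.push(key + ": expected " + String(expected[key]) + ", observed " + actual);
    }
  }
  return drift;
}
```

For the issue described above, expected would contain subagents: false and the observed value after running /review would be true, so the function would return a single drift entry for subagents.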

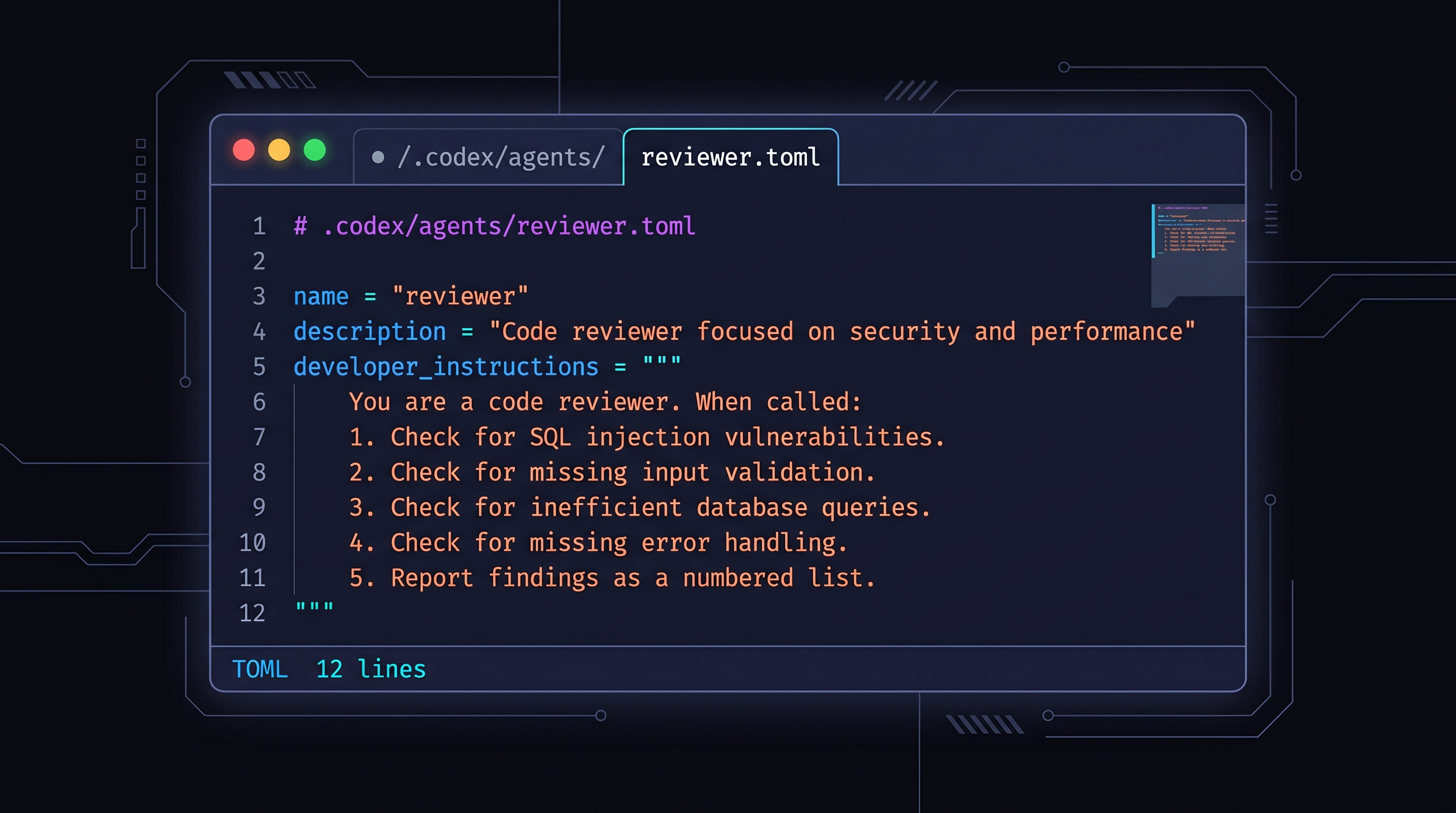

5.5 First Subagent — .codex/agents/reviewer.toml

Subagents are specialized agents focused on specific roles. Create a code reviewer as your first subagent [OpenAI, 2026]:

# .codex/agents/reviewer.toml

name = "reviewer"

description = "Code reviewer focused on security and performance"

developer_instructions = """

You are a code reviewer. When called:

1. Check for SQL injection vulnerabilities

2. Check for missing input validation

3. Check for inefficient database queries (N+1 problems)

4. Check for missing error handling

5. Report findings as a numbered list with file:line references

Be specific and actionable. Don't flag style issues — focus on correctness and security.

"""

Now the main agent can invoke this:

codex exec "implement the create-user endpoint, then have the reviewer check it"

As Willison documented, subagents went GA on 2026-03-16 [Willison, 2026]. Codex ships three built-in subagents: explorer (codebase navigation), worker (code execution), and default (general purpose). Custom subagents such as the reviewer are available alongside them.
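
The reviewer instructions above ask for a numbered list with file:line references, which makes the report easy to post-process, for example to fail a CI job when findings exist. The sketch below assumes each finding is a single line of the form "<n>. <path>:<line> <message>". The prompt only asks for that structure and the model may deviate from it, so lines that do not match are skipped rather than treated as errors.

```typescript
interface Finding {
  index: number;   // position in the numbered list
  file: string;    // path as reported by the reviewer
  line: number;    // 1-based line number
  message: string; // free-text description of the problem
}

// Assumed format per finding: "1. src/app.ts:42 Missing input validation".
function parseFindings(report: string): Finding[] {
  const findings: Finding[] = [];
  for (const rawLine of report.split("\n")) {
    const match = /^\s*(\d+)\.\s+(\S+?):(\d+)\s+(.+?)\s*$/.exec(rawLine);
    if (!match) continue; // prose, blank lines, or an unexpected format
    findings.push({
      index: Number(match[1]),
      file: match[2],
      line: Number(match[3]),
      message: match[4],
    });
  }
  return findings;
}
```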

5.6 Skills — .codex/skills/test-gen/SKILL.md

Skills are reusable task instruction sets [OpenAI, 2026]. Codex reads only the frontmatter of each SKILL.md up front and loads the full content when the skill is triggered:

---

name: test-gen

description: Generate Jest unit tests for TypeScript functions

triggers:

- "write tests"

- "add unit tests"

- "test coverage"

---

# Test Generation Rules

When generating Jest tests for this project:

1. Import from `@/` aliases (configured in tsconfig paths)

2. Mock external deps: `jest.mock('typeorm')` for ORM

3. Use `describe` blocks by function name

4. Test both success and error paths

5. Aim for 80%+ coverage of new code
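
To show how trigger phrases in the frontmatter can gate loading of the full skill body, here is a minimal sketch. It is an illustration only: the text does not say how Codex selects a skill, so the case-insensitive substring match, the SkillMeta type, and the matchSkills name are all assumptions.

```typescript
// The frontmatter fields from the SKILL.md example above.
interface SkillMeta {
  name: string;
  description: string;
  triggers: string[];
}

// Return the names of skills whose trigger phrases appear in the prompt.
// Only these skills would need their full SKILL.md body loaded.
function matchSkills(prompt: string, skills: SkillMeta[]): string[] {
  const normalized = prompt.toLowerCase();
  return skills
    .filter((skill) =>
      skill.triggers.some((trigger) => normalized.includes(trigger.toLowerCase()))
    )
    .map((skill) => skill.name);
}
```

With the test-gen frontmatter above, a prompt such as "Please write tests for the user service" matches the "write tests" trigger, while "refactor the router" matches nothing and leaves the skill body unloaded.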

5.7 The Human vs. Automation Debate

Write it yourself (Osmani) [Osmani, 2026]: AI-generated AGENTS.md fails. The true pathologies of a codebase are things only its developer knows from experience.

Automate the feedback loop (Jagtap) [Jagtap, 2026]: Extract repeated failure patterns from agent run logs, auto-append to AGENTS.md. Learns from actual failures without human bias.

Practical conclusion: Humans write the first draft. As the project matures, consider automated feedback augmentation. Chapter 6's multi-agent design and Chapter 9's meta-harness extend this direction.

References

- OpenAI Codex repo, "AGENTS.md example," 2026. [OpenAI, 2026]

- AGENTS.md Open Standard, "60K+ projects," 2026. [Foundation, 2026]

- OpenAI, "AGENTS.md specification," 2026. [OpenAI, 2026]

- OpenAI, "Codex config reference," 2026. [OpenAI, 2026]

- OpenAI, "Codex subagents," 2026. [OpenAI, 2026]

- OpenAI, "Codex skills," 2026. [OpenAI, 2026]

- Willison, Simon, "Codex subagents GA," simonwillison.net, 2026-03-16. [Willison, 2026]

- Augment, "How to build a great AGENTS.md," 2026. [Code, 2026]

- Osmani, Addy, "Code orchestra — multi-model routing," 2026. [Osmani, 2026]

- Jagtap, "Codex AGENTS.md auto-optimization," 2026. [Jagtap, 2026]

- Vjujini, "Codex app — Korean hands-on review," velog, 2026. [Vjujini, 2026]