Chapter 4: Harness Engineering at a Glance — Concepts and Core Patterns

4.1 The Agent Framework Paradox

Lance Martin (Anthropic) said it plainly: "Agent frameworks encode assumptions about Claude's limitations, but as the model evolves these assumptions become bottlenecks" [Martin and Anthropic, 2026].

This is the harness engineering paradox. The code you wrote to compensate for model limitations starts limiting the model as it improves. Good harness engineering evolves with the model.

But first, a more fundamental question: what is a harness, actually?

4.2 What a Harness Actually Is

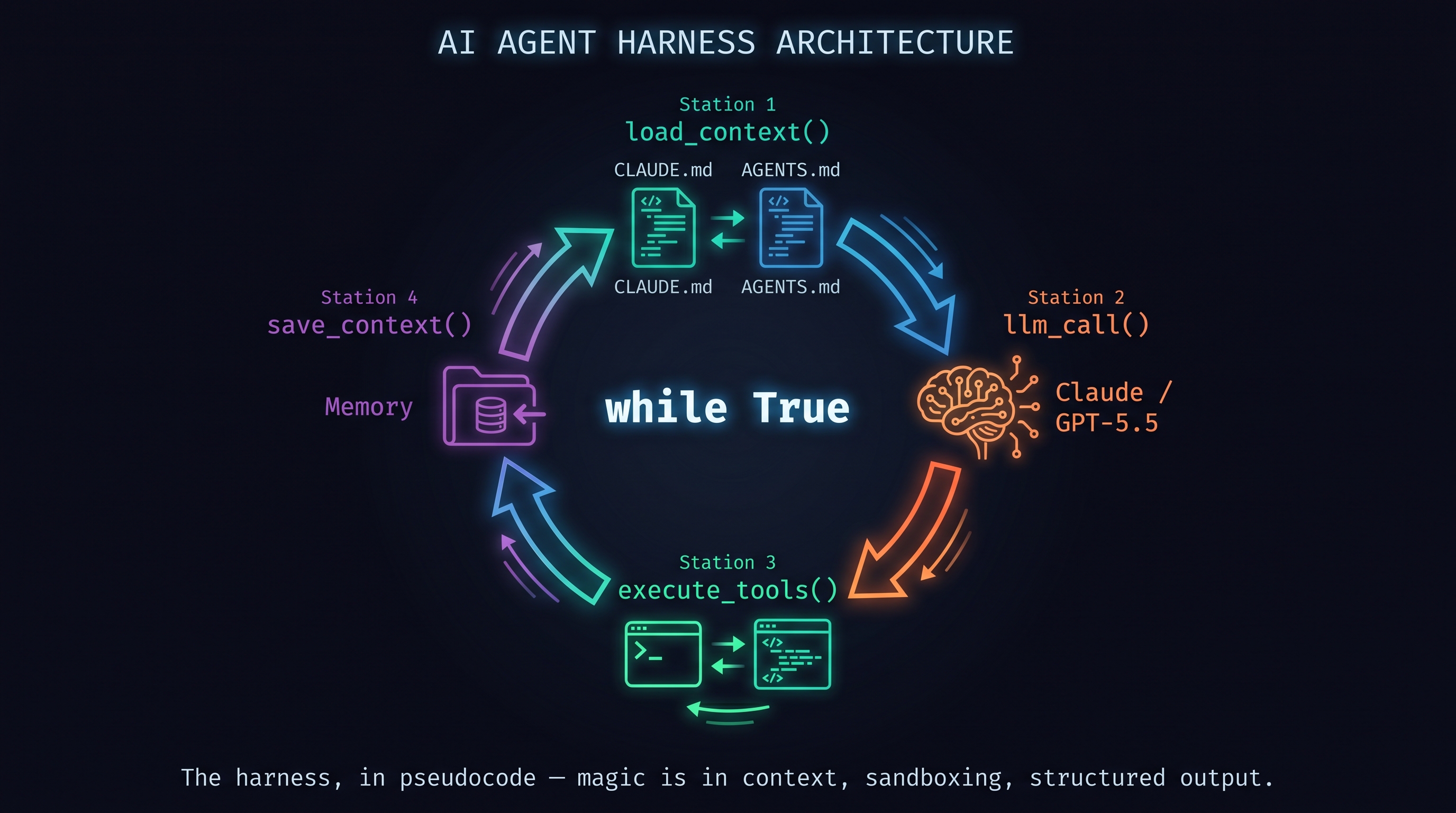

After analyzing both Claude Code and Codex, Alex Fulton concluded [Fulton, 2026]: "Both tools are basically a while True loop with tools attached. The magic is in context management, sandboxing, and structured output — not the LLM call itself."

```python
# The harness, in pseudocode
while True:
    context = load_context()  # read CLAUDE.md / AGENTS.md
    response = llm_call(context + user_input)
    result = execute_tools(response.tool_calls)
    save_context(result)  # update memory
    if response.is_done:
        break
```
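The pseudocode leaves `user_input`, the model, and the tools undefined. Below is a minimal runnable sketch that fills those gaps with hypothetical stand-ins. `FakeModel`, `Response`, `ToolCall`, and the single `echo` tool are illustrative only; a real harness would call an LLM API in their place. The sketch makes two things explicit that the pseudocode leaves implicit: tool results are written back into the stored context, and the loop has a turn budget so it cannot spin forever.

```python
from dataclasses import dataclass, field

@dataclass
class ToolCall:
    name: str
    args: dict

@dataclass
class Response:
    text: str
    tool_calls: list = field(default_factory=list)
    is_done: bool = False

class FakeModel:
    """Hypothetical stand-in for an LLM API: replays a fixed script of responses."""

    def __init__(self, script):
        self.script = list(script)
        self.seen = []  # snapshot of every context the model was called with

    def __call__(self, context):
        self.seen.append(list(context))
        return self.script.pop(0)

TOOLS = {"echo": lambda args: args["text"]}  # hypothetical single tool

def execute_tools(tool_calls):
    # Run each requested tool and format its output as a context entry.
    return [f"{call.name} -> {TOOLS[call.name](call.args)}" for call in tool_calls]

def run_harness(llm_call, user_input, max_turns=10):
    memory = [f"user: {user_input}"]  # the persisted context
    for _ in range(max_turns):  # a bounded `while True`
        context = list(memory)  # load_context()
        response = llm_call(context)
        result = execute_tools(response.tool_calls)
        memory += [f"assistant: {response.text}", *result]  # save_context(result)
        if response.is_done:
            return response.text, memory
    raise RuntimeError("turn budget exhausted without is_done")

model = FakeModel([
    Response("calling echo", [ToolCall("echo", {"text": "hi"})]),
    Response("done: hi", is_done=True),
])
answer, transcript = run_harness(model, "say hi")
print(answer)  # done: hi
```

The turn cap lives in the harness rather than in the model. This is one small example of a boundary the model cannot reliably enforce on itself.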

That's it. Three functions make the difference:

- What `load_context()` reads: the memory model

- What `execute_tools()` allows: the permissions model

- What `save_context()` stores: the persistence model

Harness engineering is designing these three functions well.
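Here is one way the three functions might look on disk. This is a sketch under loudly stated assumptions, all hypothetical:

- Memory lives in `AGENTS.md` files collected from the repository root down to the working directory, with more specific files last. This is loosely modeled on how tools pick up CLAUDE.md or AGENTS.md; each real tool's lookup rules differ and are not reproduced here.
- Permissions are an allowlist of program names.
- Persistence appends JSON lines to a log file.

```python
import json
import shlex
import tempfile
from pathlib import Path

def load_context(root: Path, cwd: Path, name: str = "AGENTS.md") -> str:
    """Memory model: join memory files from the repo root down to cwd (most specific last)."""
    chain = [d for d in reversed([cwd, *cwd.parents]) if d == root or root in d.parents]
    return "\n\n".join(
        (d / name).read_text(encoding="utf-8") for d in chain if (d / name).is_file()
    )

ALLOWED_PROGRAMS = {"ls", "cat", "pytest"}  # hypothetical allowlist

def is_allowed(command: str) -> bool:
    """Permissions model: permit a command only if its program is allowlisted.
    This is a policy check, not a sandbox."""
    try:
        argv = shlex.split(command)
    except ValueError:  # unbalanced quotes and similar
        return False
    return bool(argv) and argv[0] in ALLOWED_PROGRAMS

def save_context(log_path: Path, entry: dict) -> None:
    """Persistence model: append one JSON object per line."""
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")

with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    pkg = root / "pkg"
    pkg.mkdir()
    (root / "AGENTS.md").write_text("Use pytest.", encoding="utf-8")
    (pkg / "AGENTS.md").write_text("This package is read-only.", encoding="utf-8")
    print(load_context(root, pkg))
    print(is_allowed("pytest -q"), is_allowed("rm -rf /"))
    save_context(root / "session.jsonl", {"command": "pytest -q", "exit_code": 0})
```

Changing any one of these three functions changes what the agent knows, what it may do, or what it remembers. The model call itself stays the same.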

4.3 Three Patterns

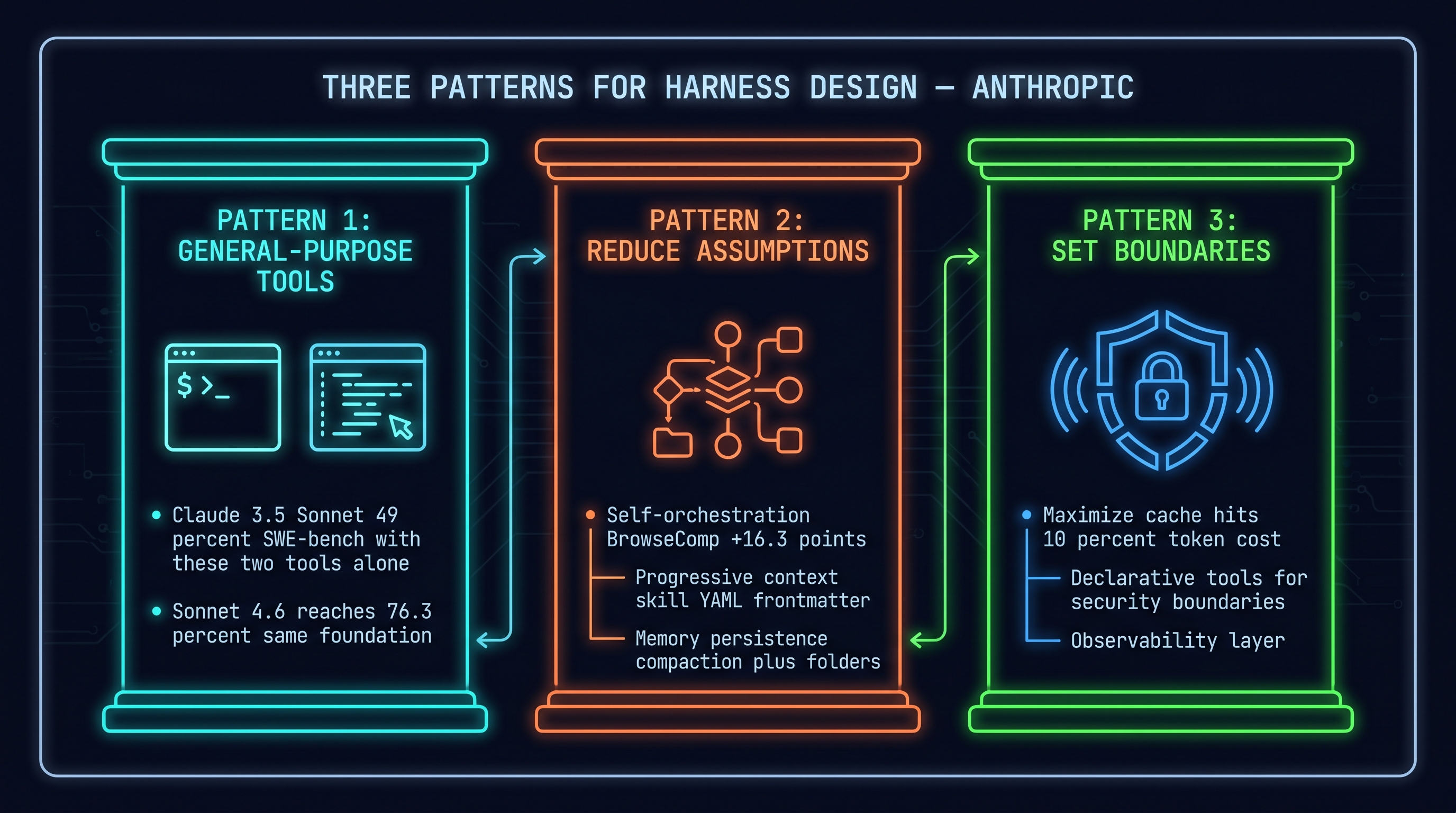

Anthropic's "Harnessing Claude's Intelligence" [Martin and Anthropic, 2026] organizes harness design into three patterns.

Pattern 1: Use What the Model Already Knows

Models deeply understand bash and text editors from their training data. Composing higher-level capabilities on top of these familiar tools beats building specialized interfaces from scratch.

The evidence: using only bash and a text editor, Claude 3.5 Sonnet achieved 49% on SWE-bench Verified, state of the art in late 2024. Sonnet 4.6 reached 76.3% on the same foundation [Martin and Anthropic, 2026]. Programmatic tool calling, skills, and memory are all compositions of these two tools.
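Part of why bash plus a text editor goes so far is that the editor's interface can be tiny. Below is a hypothetical `str_replace`-style edit command, modeled loosely on the text editor tools agents commonly use. The function name, signature, and error messages are illustrative assumptions, not any vendor's API. The command replaces an exact snippet only when it occurs exactly once, so an ambiguous edit fails loudly instead of silently changing the wrong place.

```python
import tempfile
from pathlib import Path

class EditError(Exception):
    """Raised when an edit request is empty, missing, or ambiguous."""

def str_replace(path: Path, old: str, new: str) -> None:
    """Hypothetical editor command: replace `old` with `new` only if `old` is unique."""
    if not old:
        raise EditError("old snippet must be non-empty")
    text = path.read_text(encoding="utf-8")
    count = text.count(old)
    if count == 0:
        raise EditError(f"snippet not found in {path}")
    if count > 1:
        raise EditError(f"snippet occurs {count} times in {path}; include more context")
    path.write_text(text.replace(old, new, 1), encoding="utf-8")

with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "app.py"
    target.write_text("x = 1\ny = 1\n", encoding="utf-8")
    str_replace(target, "x = 1", "x = 2")  # unique snippet: applied
    try:
        str_replace(target, " = ", " == ")  # two matches: rejected
    except EditError as err:
        print(err)
    print(target.read_text(encoding="utf-8"))
```

The error message tells the model how to recover ("include more context"). That matters more than the edit logic itself, because the model reads the error and retries.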

Pattern 2: Keep Asking "What Can I Stop Doing?"

Audit assumptions encoded in the harness. Remove structures that have become unnecessary. Three directions:

A. Self-orchestration: Instead of loading every tool result into context, give the model a code execution tool and let it chain tool calls itself. Opus 4.6 went from 45.3% to 61.6% on BrowseComp (+16.3 percentage points) [Martin and Anthropic, 2026].
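The difference between the two styles is easy to see in a toy setting. In the sketch below, `search` is a hypothetical tool that returns many records.

- In the first style, the harness appends every raw result to the context.
- In the second, the model writes a short script that chains the calls and returns only the answer. The script is shown here as an ordinary Python function rather than code executed in a real sandbox.

Character counts stand in for token counts.

```python
def search(query: str) -> list[dict]:
    """Hypothetical tool: returns 50 fake records for any query."""
    return [{"id": i, "query": query, "score": (i * 37) % 100} for i in range(50)]

def context_size(messages: list[str]) -> int:
    """Characters as a rough proxy for tokens."""
    return sum(len(m) for m in messages)

QUERIES = ("alpha", "beta")

# Style 1: the harness pastes every raw tool result into the context.
naive_context = [str(search(q)) for q in QUERIES]

# Style 2: a model-written script chains the calls and returns only the answer.
def model_written_script() -> str:
    lines = []
    for q in QUERIES:
        top = max(search(q), key=lambda record: record["score"])
        lines.append(f"{q}: id={top['id']} score={top['score']}")
    return "\n".join(lines)

orchestrated_context = [model_written_script()]

print(context_size(naive_context), context_size(orchestrated_context))
print(orchestrated_context[0])
```

The model still gets its answer, but the 100 intermediate records stay in the execution environment instead of consuming the context window.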

B. Progressive context: Pre-loading every task instruction depletes the attention budget. Instead, put a brief overview in each skill's YAML frontmatter and let the agent read the full content only when it needs it. Subagents add 2.8% on BrowseComp for Opus 4.6.
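Progressive disclosure can be sketched in a few lines. Assume each skill lives in its own folder as a `SKILL.md` file whose frontmatter carries a `name` and a `description`. The harness puts only those one-line summaries into the prompt and reads a skill's full body when the agent asks for it. To stay dependency-free, the parser below handles only flat `key: value` frontmatter; a real implementation would use a YAML library. The function names and folder layout are illustrative assumptions.

```python
import tempfile
from pathlib import Path

def parse_skill(text: str) -> tuple[dict, str]:
    """Split '---'-delimited frontmatter (flat `key: value` lines only) from the body."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:  # unterminated frontmatter: treat the whole file as body
        return {}, text
    meta = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta, "\n".join(lines[end + 1:])

def skill_index(skills_dir: Path) -> str:
    """Cheap overview for the system prompt: one line per skill."""
    entries = []
    for path in sorted(skills_dir.glob("*/SKILL.md")):
        meta, _ = parse_skill(path.read_text(encoding="utf-8"))
        entries.append(f"- {meta.get('name', path.parent.name)}: {meta.get('description', '')}")
    return "\n".join(entries)

def load_skill(skills_dir: Path, folder: str) -> str:
    """Full instructions, read only when the agent decides it needs them."""
    _, body = parse_skill((skills_dir / folder / "SKILL.md").read_text(encoding="utf-8"))
    return body

with tempfile.TemporaryDirectory() as tmp:
    skills = Path(tmp)
    (skills / "deploy").mkdir()
    (skills / "deploy" / "SKILL.md").write_text(
        "---\nname: deploy\ndescription: Ship the app to staging\n---\n"
        "1. Run the test suite.\n2. Build the image.\n3. Push to staging.\n",
        encoding="utf-8",
    )
    print(skill_index(skills))  # goes into every prompt
    print(load_skill(skills, "deploy"))  # loaded on demand
```

With dozens of skills, the index costs one line each. Only the skills actually used pay for their full text.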

C. Memory persistence: Long-running agents outgrow a single context window. Two solutions:

- Compaction: summarize past context. With compaction, Opus 4.6 reaches 84% on BrowseComp.

- Memory folder: write context to files and read it back later. Sonnet 4.5 on BrowseComp-Plus: 60.4% → 67.2%.
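Both strategies fit in one small sketch. Token counts are approximated by word counts, and `summarize` is a hypothetical placeholder for what would really be another model call.

- Compaction replaces the oldest messages with a single summary once the budget is exceeded, keeping the most recent turns verbatim.
- The memory folder stores notes as files that a later session can read back.

```python
import tempfile
from pathlib import Path

def approx_tokens(message: str) -> int:
    return len(message.split())  # crude stand-in for a real tokenizer

def summarize(messages: list[str]) -> str:
    """Hypothetical placeholder: a real harness would ask the model to summarize."""
    return f"[summary of {len(messages)} earlier messages]"

def compact(history: list[str], budget: int, keep_recent: int = 2) -> list[str]:
    """Compaction: fold older messages into one summary once over budget."""
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    if sum(approx_tokens(m) for m in history) <= budget or len(history) <= keep_recent:
        return history
    return [summarize(history[:-keep_recent])] + history[-keep_recent:]

def write_memory(folder: Path, topic: str, note: str) -> None:
    """Memory folder: persist a note as <topic>.md."""
    folder.mkdir(parents=True, exist_ok=True)
    (folder / f"{topic}.md").write_text(note, encoding="utf-8")

def read_memory(folder: Path) -> dict[str, str]:
    """Load every note back, e.g. at the start of a new session."""
    return {p.stem: p.read_text(encoding="utf-8") for p in sorted(folder.glob("*.md"))}

history = ["read the failing test", "ran pytest: 3 failures", "fixed parser", "ran pytest: ok"]
print(compact(history, budget=8))

with tempfile.TemporaryDirectory() as tmp:
    write_memory(Path(tmp) / "memory", "progress", "parser fixed; tests green")
    print(read_memory(Path(tmp) / "memory"))
```

The two approaches trade off differently. Compaction loses detail but needs no extra tools. The memory folder keeps exact notes, but the agent must decide what is worth writing down.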

Pattern 3: Set Boundaries Carefully

Models don't inherently know your app's security boundaries or UX surfaces. Set explicit boundaries:

A. Maximize cache hits: Prompt caching bills cached input tokens at 10% of the base input-token price. Because a cache hit requires an identical prompt prefix, put static content first and dynamic content last [Martin and Anthropic, 2026].
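Why ordering matters: a prefix cache can reuse only the part of the prompt that is identical from the first token onward, so one changed value early in the prompt invalidates everything after it. The sketch below measures how much of a prompt could be reused between two turns under each ordering, then applies the 10% cached-token price quoted above. Its helper names are hypothetical, and it counts characters instead of tokens. It also ignores any extra charge a provider may apply when first writing to the cache.

```python
import os

# Static part: system prompt and tool definitions, identical on every call.
STATIC = "SYSTEM: You are a coding agent.\nTOOLS: bash, editor\n" * 20

def build_prompt(dynamic: str, static_first: bool) -> str:
    return STATIC + dynamic if static_first else dynamic + STATIC

def reusable_prefix(a: str, b: str) -> int:
    """Length of the shared prefix, i.e. what a prefix cache could reuse."""
    return len(os.path.commonprefix([a, b]))

def relative_cost(prompt: str, cached: int, cached_rate: float = 0.10) -> float:
    """Input cost relative to sending the whole prompt uncached."""
    return (cached * cached_rate + (len(prompt) - cached)) / len(prompt)

for static_first in (False, True):
    turn1 = build_prompt("time=10:00 task=fix bug", static_first)
    turn2 = build_prompt("time=10:05 task=fix bug", static_first)
    cached = reusable_prefix(turn1, turn2)
    print(f"static_first={static_first}: relative cost {relative_cost(turn2, cached):.2f}")
```

Putting a timestamp at the top of the prompt leaves almost nothing cacheable (about 0.99 of full price here). Moving it to the end brings the cost down to about 0.11.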

B. Declarative tools: Create dedicated tools for actions that cross security boundaries or are hard to reverse, instead of leaving them to general-purpose bash.
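A sketch of what a dedicated tool means in practice. Instead of letting the agent delete branches through arbitrary bash, the harness exposes a narrow tool that declares what it does and whether it can be undone. The harness, not the model, then requires confirmation for irreversible calls and enforces hard limits in code. The `Tool` dataclass, the `delete_branch` tool, and the `confirm` callback are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Tool:
    """Hypothetical declaration: what the tool does and whether it can be undone."""
    name: str
    description: str
    reversible: bool
    run: Callable[[dict], str]

class PermissionDenied(Exception):
    pass

def invoke(tool: Tool, args: dict, confirm: Callable[[str], bool]) -> str:
    """The harness, not the model, decides whether an irreversible action runs."""
    if not tool.reversible and not confirm(f"{tool.name}({args})"):
        raise PermissionDenied(tool.name)
    return tool.run(args)

branches = {"main", "feature-x"}  # toy stand-in for repository state

def _delete_branch(args: dict) -> str:
    branch = args["branch"]
    if branch == "main":
        raise PermissionDenied("main is protected")  # boundary enforced in code
    branches.discard(branch)
    return f"deleted {branch}"

delete_branch = Tool(
    name="delete_branch",
    description="Delete a local branch other than main.",
    reversible=False,
    run=_delete_branch,
)

# In a real harness `confirm` would prompt a human; here it is a stub that approves.
print(invoke(delete_branch, {"branch": "feature-x"}, confirm=lambda msg: True))
```

The protection for `main` does not depend on the model following instructions. It holds even if the model asks for the wrong thing.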

C. Observability: Structured logging, tracing, and replay layers.

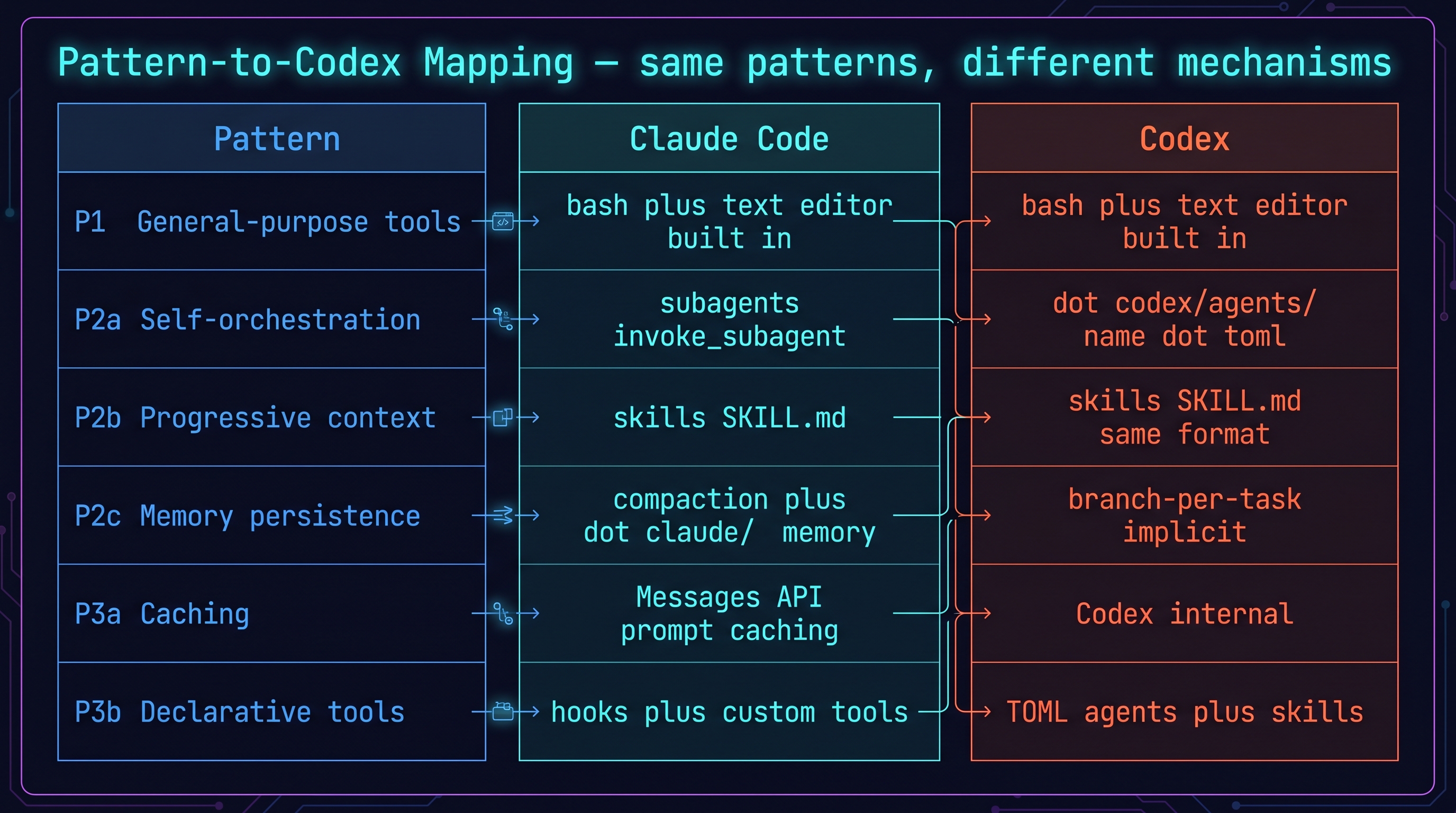

4.4 Pattern-to-Codex Mapping

| Pattern | Claude Code | Codex |
|---|---|---|
| P1: General-purpose tools | bash + text editor (built-in) | bash + text editor (built-in) |
| P2a: Self-orchestration | subagents (invoke_subagent) | .codex/agents/ |
| P2b: Progressive context | skills (SKILL.md) | skills (SKILL.md, same format) |
| P2c: Memory persistence | compaction + .claude/memory/ | branch-per-task (implicit) |
| P3a: Caching | Messages API prompt caching | (Codex-internal) |
| P3b: Declarative tools | hooks + custom tools | TOML agents + skills |

These three patterns are tool-agnostic. They apply to Codex, Claude Code, and any other LLM tool. Chapter 5 implements them concretely in Codex.

4.5 The Counter-Argument

Lance Martin's point is valid. But it doesn't mean "don't build a harness." It means: design your harness to be evolvable.

From Okhlopkov's 4-month Claude Code retrospective [Okhlopkov, 2026]: "I spent the first month learning the tool. The next three months optimizing the harness." Refactoring the harness like you refactor code is the key. If you haven't revisited your harness since the last model update, you're probably compensating for limitations that no longer exist.

References

- Anthropic, "Harnessing Claude's Intelligence: Three Patterns for Agent Harness Design," 2026. [Martin and Anthropic, 2026]

- Anthropic, "Claude Code: Best practices for agentic coding," 2026. [Anthropic, 2026]

- Fulton, Alex, "Inside the agent harness," 2026. [Fulton, 2026]

- Promptshelf, "10 Claude Code hook examples," 2026. [Shelf, 2026]

- Okhlopkov, "Claude Code setup — 4-month retrospective," 2026. [Okhlopkov, 2026]

- Korean Developer, "Mastering Harness Engineering in 40 Minutes" (하네스 엔지니어링 40분 정복), 2026. [Korean Dev Blog, 2026]

- HesReallyHim, "Awesome Claude Code — community catalog," 2026. [GitHub, 2026]