Chapter 2: Two Tools, Compared — Philosophy and Interface of Claude Code and Codex

2.1 Same Problem, Two Answers

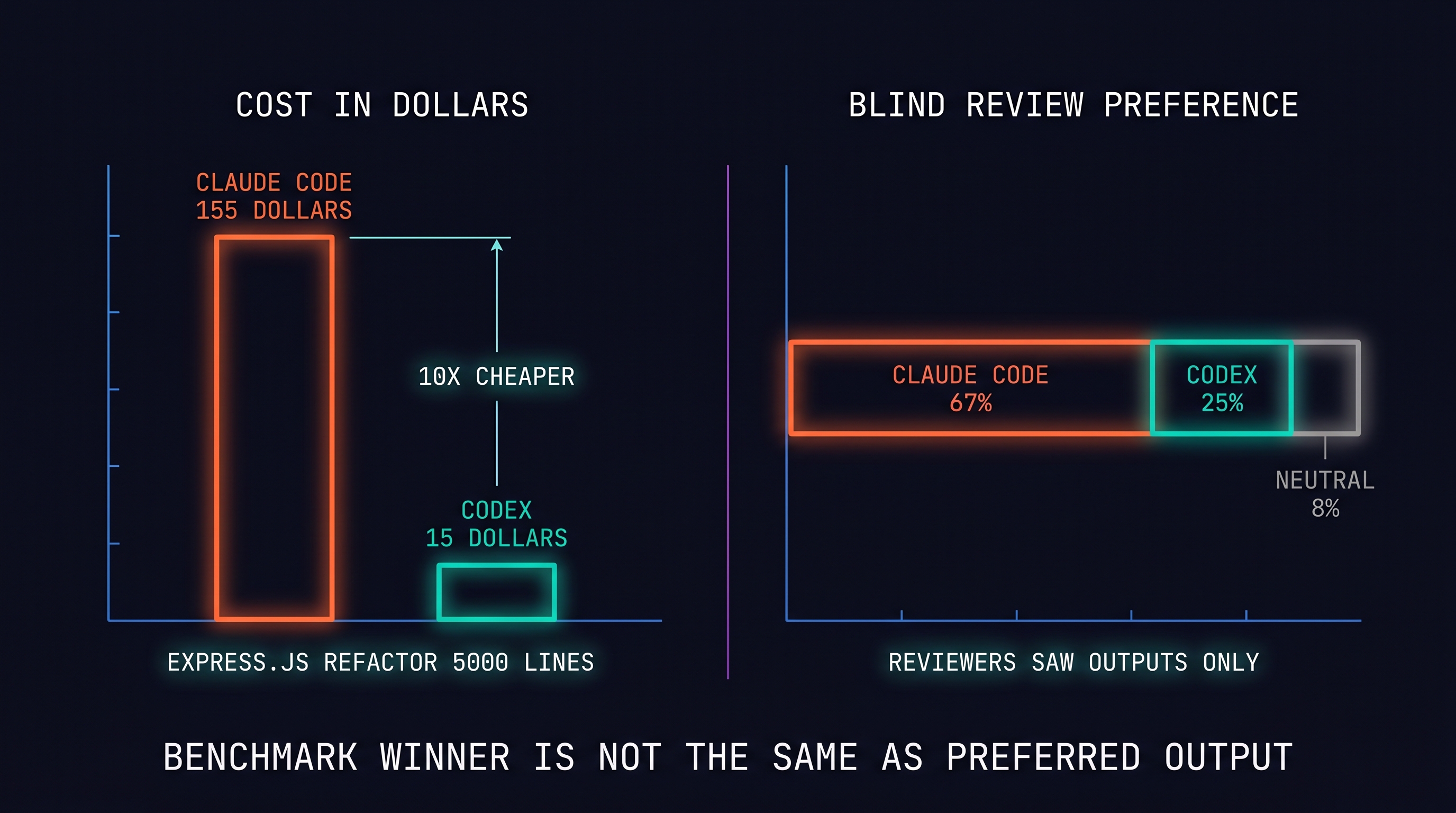

Start with a concrete task: refactor a 5,000-line Express.js service. Run it through both tools. What happens?

Two widely cited numbers from the developer community [contributor, 2026]:

- Cost: An Express.js refactor case ran $155 on Claude Code vs $15 on Codex — Codex is roughly 10x cheaper (cost derived from token consumption).

- Blind quality review: in a blind output-only comparison, reviewers preferred Claude Code 67% vs Codex 25% (8% tied; 500+ developer Reddit survey).

Claude Code costs more, and its output was preferred far more often in blind review. Codex costs roughly a tenth as much, and its output was preferred less often. But these numbers capture only the cost/quality tradeoff. The seven dimensions in Section 2.2 tell a more complex story.
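As a quick check on the two quoted figures, the arithmetic can be worked through in a few lines. The inputs are the numbers cited above; the "share of decided votes" metric is an added illustration, not something the survey reports.

```python
# Figures quoted above from the cited community write-up.
claude_cost_usd = 155
codex_cost_usd = 15
preference = {"Claude Code": 67, "Codex": 25, "tie": 8}  # percent of reviewers

# How many times more expensive was the Claude Code run?
cost_ratio = claude_cost_usd / codex_cost_usd

# Among reviewers who picked a side, how often did Claude Code win?
# (Illustrative metric, not reported in the source.)
decided = preference["Claude Code"] + preference["Codex"]
claude_share_of_decided = preference["Claude Code"] / decided

print(f"cost ratio: {cost_ratio:.1f}x")
print(f"Claude Code share of decided votes: {claude_share_of_decided:.0%}")
```

The ratio comes out slightly above 10x, which is why the text rounds to "roughly 10x".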

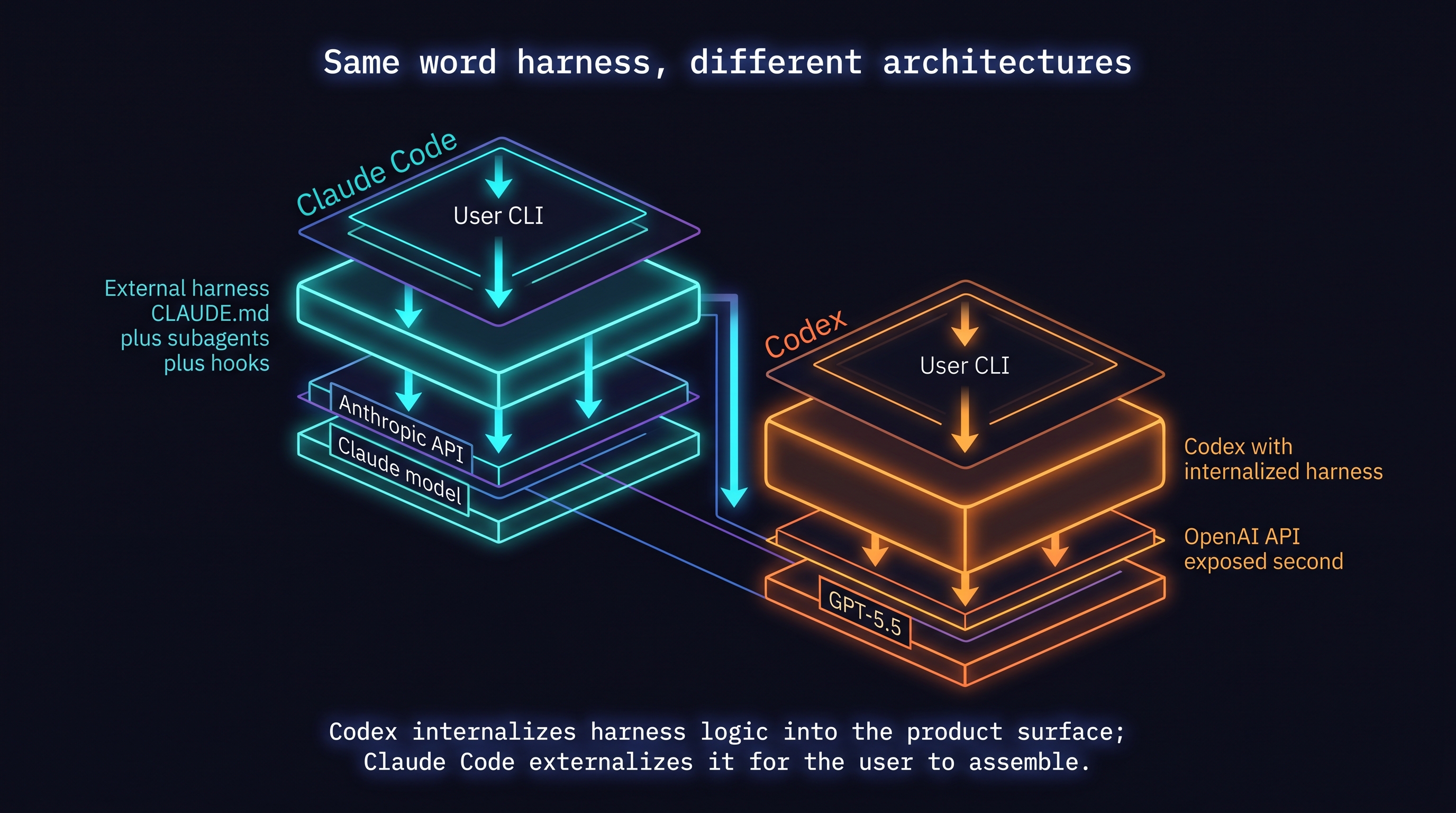

The delivery channel distinction: Anthropic designed Claude Code as an external harness layer on top of the model API [Anthropic, 2026]. OpenAI shipped GPT-5.5 into Codex before the API opened [Willison, 2026]. Same word ("harness"), different architectures — Codex internalizes harness logic into the product surface, Claude Code externalizes it for the user to assemble.

2.2 Seven Dimensions

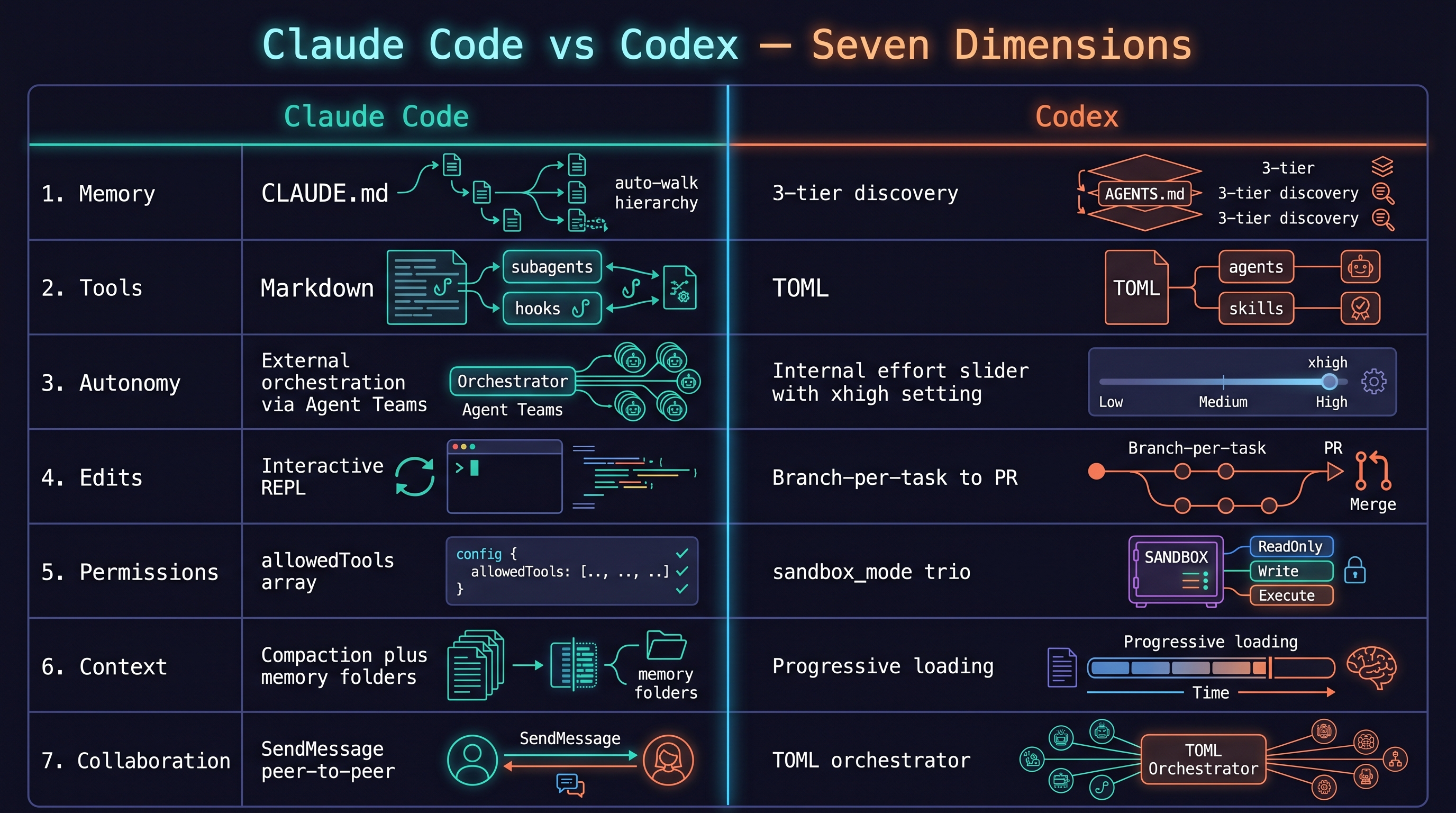

Dimension 1: Memory Model

Claude Code: CLAUDE.md hierarchy with auto-walk. Reads ~/.claude/CLAUDE.md (global) → project root CLAUDE.md → subdirectory .claude/CLAUDE.md automatically. Agent-driven memory persistence via .claude/memory/ folder [Anthropic, 2026].

Codex: AGENTS.md 3-tier discovery with override priority. Walks from current directory up toward root for AGENTS.md files; global config in ~/.codex/; subagent definitions in .codex/agents/ [OpenAI, 2026]. Override priority: more specific path wins.

Tradeoff: Claude's auto-walk is convenient but hard to audit. Codex's explicit 3-tier is more predictable but requires you to find files manually.
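The upward directory walk described for AGENTS.md can be sketched in a few lines. This is a minimal illustration of the idea, not either tool's actual discovery code; the function name and the ordering convention (least specific first, so later files can override earlier ones) are assumptions made for the example.

```python
from pathlib import Path


def discover_instruction_files(start: Path, filename: str = "AGENTS.md") -> list[Path]:
    """Collect `filename` from `start` up to the filesystem root.

    Results are ordered least specific first, so a caller that applies them
    in order lets the most specific file override the others.
    """
    start = start.resolve()
    # Check `start` itself, then each ancestor directory in turn.
    found = [d / filename for d in (start, *start.parents) if (d / filename).is_file()]
    return list(reversed(found))
```

Printing the returned list is also a cheap way to audit which instruction files would apply to a given working directory.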

Dimension 2: Tool Model

Claude Code: subagents as functions. Define agents in .claude/agents/ (markdown), invoke via invoke_subagent [Anthropic, 2026]. Hooks in .claude/hooks/ inject logic before/after tool execution.

Codex: agents as TOML files. Write name, description, developer_instructions in .codex/agents/ — Codex auto-discovers them [OpenAI, 2026]. Skills use SKILL.md frontmatter [OpenAI, 2026].

Tradeoff: Claude's markdown agents are human-friendly but loosely structured. Codex's TOML is structured and portable, and the related AGENTS.md format is adopted by 60,000+ open-source projects as a cross-vendor standard [Foundation, 2026].
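To make the TOML agent format concrete, the sketch below renders the three fields named above (name, description, developer_instructions) into a TOML document. The agent values are hypothetical, and the real schema may require or allow other keys, so treat this as an illustration of the shape rather than a reference.

```python
import json


def render_agent_toml(name: str, description: str, developer_instructions: str) -> str:
    """Render the three fields named in the text as a TOML document.

    json.dumps is a quoting shortcut: with ensure_ascii=False its output is
    a valid TOML basic string for ordinary text (an assumption that holds
    for typical prose, not for every control character).
    """
    fields = {
        "name": name,
        "description": description,
        "developer_instructions": developer_instructions,
    }
    return "".join(
        f"{key} = {json.dumps(value, ensure_ascii=False)}\n"
        for key, value in fields.items()
    )


# Hypothetical agent definition, for illustration only.
print(render_agent_toml(
    name="reviewer",
    description="Reviews diffs for risky changes",
    developer_instructions="Read the diff.\nFlag anything that touches auth.",
))
```

Saved under .codex/agents/, a file of this shape is what the text describes Codex as discovering automatically.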

Dimension 3: Autonomy Model

Claude Code: harness orchestrates autonomy externally. Agent Teams [Anthropic, 2026] lets multiple agents collaborate via shared task lists; SendMessage enables peer-to-peer agent communication. Autonomy depth is determined by the user's harness design.

Codex: autonomy internalized via reasoning effort. One line in config.toml, model_reasoning_effort = "xhigh", adjusts the model's reasoning depth [OpenAI, 2026]. In GPT-5.5, xhigh effort uses ~240x more reasoning tokens than none [Willison, 2026].

Tradeoff: Claude's external orchestration gives fine-grained control but adds complexity. Codex's effort slider is simple but opaque — you can't observe the reasoning process directly.

Dimension 4: Edit Model

Claude Code: interactive REPL. Each terminal session maintains state; changes land in the filesystem immediately. /undo reverts the last change.

Codex: branch-per-task. Each task runs on an independent branch, completing as a PR [Proser, 2026]. Multiple tasks can run in parallel on separate branches.

Tradeoff: Claude's REPL is fast for exploration. Codex's branch-per-task is safer and trivially parallelizable — queue multiple tasks, merge results.
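The branch-per-task idea can be illustrated with a small planner that gives every queued task its own branch. The naming scheme (a "task/" prefix plus a slug) and both function names are hypothetical; the source does not say how Codex names its branches.

```python
import re


def branch_name(task: str, prefix: str = "task") -> str:
    """Turn a task description into a git-safe branch name (hypothetical scheme)."""
    slug = re.sub(r"[^a-z0-9]+", "-", task.lower()).strip("-")
    return f"{prefix}/{slug}"


def plan_branches(tasks: list[str]) -> dict[str, str]:
    """Map each task to its own branch; refuse collisions so tasks stay isolated."""
    plan: dict[str, str] = {}
    for task in tasks:
        name = branch_name(task)
        if name in plan.values():
            raise ValueError(f"branch collision: {name}")
        plan[task] = name
    return plan


# Hypothetical queue of independent tasks.
print(plan_branches(["Extract auth middleware", "Add route tests"]))
```

Because no two tasks share a branch, they can run in parallel and be reviewed as separate PRs, which is the safety property the tradeoff above refers to.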

Dimension 5: Permissions Model

Claude Code: auto-mode + allowlists. Tools can prompt for user confirmation or auto-approve. Fine-grained per-tool control via allowedTools array in .claude/settings.json [Anthropic, 2026].

Codex: sandbox_mode trio [OpenAI, 2026]:

- read-only: read-only access (most conservative)

- workspace-write: writes allowed in current workspace (recommended default)

- danger-full-access: unrestricted system access (use with caution)

approval_policy sets human-in-the-loop gates (untrusted / on-request / never).

Tradeoff: Claude's per-tool granularity vs Codex's sandbox-level selection. Codex's simplicity is friendlier for operational safety.
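The two permission styles can be contrasted in code. The sketch below validates the sandbox_mode and approval_policy values listed above and, separately, checks a tool against an allowlist. Function names are hypothetical, the settings are passed as a plain dict (as they might look after parsing config.toml), and exact-name matching is a simplification of whatever rule syntax the real allowlist supports.

```python
# Allowed values, taken from the text above.
SANDBOX_MODES = {"read-only", "workspace-write", "danger-full-access"}
APPROVAL_POLICIES = {"untrusted", "on-request", "never"}


def check_codex_settings(settings: dict) -> list[str]:
    """Return a list of problems; an empty list means both keys look valid."""
    problems = []
    if settings.get("sandbox_mode") not in SANDBOX_MODES:
        problems.append(f"sandbox_mode must be one of {sorted(SANDBOX_MODES)}")
    if settings.get("approval_policy") not in APPROVAL_POLICIES:
        problems.append(f"approval_policy must be one of {sorted(APPROVAL_POLICIES)}")
    return problems


def tool_allowed(tool: str, allowed_tools: list[str]) -> bool:
    """Per-tool model: a call auto-approves only if the tool is on the allowlist."""
    return tool in allowed_tools


# Sandbox-level: one coarse choice covers every tool.
print(check_codex_settings({"sandbox_mode": "workspace-write", "approval_policy": "on-request"}))
# Per-tool: each tool is decided individually (hypothetical tool names).
print(tool_allowed("Bash", ["Read", "Grep"]))
```

The sandbox check has two knobs to get right; the allowlist has one entry per tool, which is where both the extra control and the extra configuration come from.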

Dimension 6: Context Management

Claude Code: compaction + memory folders. When context grows long, Claude summarizes past content (compaction) to recycle the context window — Opus 4.6 achieved 84% on BrowseComp with compaction [Martin and Anthropic, 2026]. The memory folder pattern lets agents write and read context files.
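A toy version of compaction shows the mechanism: once the history exceeds a token budget, older messages are folded into a single summary while the most recent ones are kept verbatim. Everything here is an assumption for illustration, including the four-characters-per-token estimate and the placeholder summarizer; a real harness would use a tokenizer and ask the model to write the summary.

```python
def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: roughly four characters per token."""
    return max(1, len(text) // 4)


def summarize(messages: list[str]) -> str:
    """Placeholder summarizer; a real harness would ask the model for this."""
    return f"[summary of {len(messages)} earlier messages]"


def compact(history: list[str], budget: int, keep_recent: int = 2) -> list[str]:
    """Fold older messages into one summary once history exceeds `budget` tokens."""
    total = sum(estimate_tokens(m) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    cut = len(history) - keep_recent
    older, recent = history[:cut], history[cut:]
    return [summarize(older), *recent]


# Two long early messages and two short recent ones (hypothetical content).
history = ["a" * 400, "b" * 400, "c" * 40, "d" * 40]
print(compact(history, budget=100))
```

The design choice to keep recent messages untouched reflects the usual intuition that the latest turns matter most; what gets lost is detail from the summarized span.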

Codex: progressive context loading. The YAML frontmatter in each skill's SKILL.md loads a brief overview; the agent reads the full content when needed [OpenAI, 2026]. Branch isolation means each task also starts with clean context.
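Progressive loading can be sketched as a two-step read: only the frontmatter's name and description go into the initial context, and the body is fetched when the skill is actually used. The parser below handles only flat `key: value` lines and is not a full YAML parser; the class, function, and sample skill are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str
    source: str  # full file text, kept so the body can be read later

    def body(self) -> str:
        """Return everything after the closing frontmatter fence."""
        return self.source.split("---", 2)[2].lstrip("\n")


def load_overview(source: str) -> Skill:
    """Parse only flat `key: value` frontmatter lines (not a full YAML parser)."""
    _, frontmatter, _ = source.split("---", 2)
    fields = {}
    for line in frontmatter.strip().splitlines():
        key, _, value = line.partition(":")
        fields[key.strip()] = value.strip()
    return Skill(fields["name"], fields["description"], source)


# Hypothetical skill file contents.
doc = "---\nname: release-notes\ndescription: Draft release notes from merged PRs\n---\n# Steps\n1. List merged PRs.\n"
skill = load_overview(doc)
print(skill.name, "|", skill.description)  # what enters the initial context
print(skill.body())                        # read only when the skill is invoked
```

The saving is in what reaches the context window, not in what is read from disk: dozens of skills cost one short line each until one of them is needed.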

Tradeoff: Claude's compaction excels for long sessions. Codex's progressive loading keeps initial context lean.

Dimension 7: Collaboration Model

Claude Code: Agent Teams + SendMessage [Anthropic, 2026]. TeamCreate creates a team; TaskCreate with addBlockedBy/addBlocks declares a dependency graph. Agents communicate directly via SendMessage.
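The dependency graph that addBlockedBy/addBlocks declares can be modeled with Python's standard graphlib module. This is a sketch of the scheduling idea only, not the Agent Teams API: the task names are hypothetical, and the "waves" grouping is one assumed way a harness might decide which tasks can run concurrently.

```python
from graphlib import TopologicalSorter

# Hypothetical tasks; each maps to the set of tasks that block it.
blocked_by = {
    "extract-routes": set(),
    "write-tests": {"extract-routes"},
    "update-docs": {"extract-routes"},
    "open-pr": {"write-tests", "update-docs"},
}


def execution_waves(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; every task in a wave has all blockers finished."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for stable output
        waves.append(ready)
        sorter.done(*ready)
    return waves


print(execution_waves(blocked_by))
```

Tasks in the same wave have no dependency on each other, so separate agents could pick them up in parallel.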

Codex: TOML subagents + skills [OpenAI, 2026]. Define each agent as a TOML file in .codex/agents/; an orchestrator invokes them.

Tradeoff: Claude's Agent Teams are dynamic with rich runtime collaboration. Codex's TOML agents are static and declarative — better for tool composition than complex runtime messaging.

2.3 Why Every Dimension Is Asymmetric

The seven dimensions above aren't a feature checklist — they follow from a single architectural decision about where the harness lives.

Anthropic built Claude Code as a harness that lives in user-authored config: CLAUDE.md, .claude/agents/, .claude/hooks/, .claude/skills/. The model API is the foundation; the harness is the user's layer on top of it [Anthropic, 2026].

OpenAI shipped GPT-5.5 into Codex before the API opened [Willison, 2026]. The model and the harness arrived together, as a single product. The API was secondary. When the frontier model ships into the harness before the API, the harness has stopped being a wrapper — it has become the primary delivery channel.

That single decision cascades into every dimension above: memory is external vs. internal; collaboration is runtime-dynamic vs. TOML-declared; autonomy is user-orchestrated vs. effort-dialed; permissions are per-tool granular vs. sandbox-level. These aren't arbitrary differences — they each trace back to the same question: who owns the harness?

2.4 The Numbers

| Metric | Claude Code | Codex | Source |
|---|---|---|---|
| Express.js refactor cost | $155 | $15 | [contributor, 2026] |
| Blind review preference | 67% | 25% (tie 8%) | [contributor, 2026] |
| Terminal-Bench score | 65.4 | 77.3 | [MorphLLM, 2026] |
| SWE-bench Verified (Sonnet 4.6) | 79.6% | — | [Anthropic, 2026] |
| AGENTS.md adopting projects | N/A | 60,000+ | [Foundation, 2026] |

The takeaway: Codex wins on Terminal-Bench; Claude Code wins on blind review. Benchmark winner ≠ preferred output [MorphLLM, 2026]. DataCamp's comparison reaches the same conclusion [DataCamp, 2026]: "Claude for fast interactive coding; Codex for autonomous long-running tasks."

2.5 A Third Perspective

Blake Crosley's analysis of the Chinese AI coding market [Crosley, 2026] frames the comparison as "interface philosophy": "Claude gives developers more control at the cost of more configuration. Codex takes control but provides a simpler starting point." The same tradeoff is legible across different markets.

2.6 Summary: Two Interfaces, Two Philosophies

Seven dimensions, one summary:

- Claude Code: user assembles the harness externally. More control, more configuration.

- Codex: harness is internalized into the product. Simpler start, more predictable autonomy.

Neither is "better." Chapter 3 covers your first Codex session. Starting in Chapter 4, you'll learn harness engineering patterns that work in both tools. These seven dimensions are the coordinate system for that learning.

References

- MorphLLM, "Codex vs Claude Code Benchmark," 2026. [MorphLLM, 2026]

- Anthropic, "Claude Code: Best practices for agentic coding," 2026. [Anthropic, 2026]

- Anthropic, "Claude memory documentation," 2026. [Anthropic, 2026]

- Anthropic, "Subagents," 2026. [Anthropic, 2026]

- Anthropic, "Agent Teams," 2026. [Anthropic, 2026]

- Anthropic, "Harnessing Claude's Intelligence," 2026. [Martin and Anthropic, 2026]

- OpenAI, "AGENTS.md specification," 2026. [OpenAI, 2026]

- OpenAI, "Codex config reference," 2026. [OpenAI, 2026]

- OpenAI, "Codex subagents," 2026. [OpenAI, 2026]

- OpenAI, "Codex skills," 2026. [OpenAI, 2026]

- Willison, Simon, "GPT-5.5," simonwillison.net, 2026-04-23. [Willison, 2026]

- AGENTS.md Open Standard, "60K+ projects adoption," 2026. [Foundation, 2026]

- Anthropic, "Introducing Claude Sonnet 4.6," 2026-02-17. [Anthropic, 2026]

- DataCamp, "Codex vs Claude Code," 2026. [DataCamp, 2026]

- Blake Crosley, "China AI coding market analysis," 2026. [Crosley, 2026]

- Zack Proser, "Codex daily-use review," 2026. [Proser, 2026]

- dev.to, "Claude Code vs Codex 2026 — What 500+ Reddit Developers Really Think," 2026. [contributor, 2026]